Why the Best AI Tools for Data Integration Are Transforming Business in 2026

The best AI tools for data integration in 2026 are no longer just for large enterprises with dedicated data engineering teams. Small and mid-sized businesses now have access to platforms that automate the hard parts — mapping fields, detecting errors, and keeping pipelines running — without requiring a team of specialists.

Quick answer: Here are the top AI data integration tools to know right now:

| Tool | Best For | Key AI Feature |
|---|---|---|
| Fivetran | Automated cloud pipelines | Schema drift handling, 700+ connectors |
| Informatica | Enterprise governance | CLAIRE AI engine for mapping & cleansing |
| Airbyte | Open-source flexibility | Custom connector builder, vector DB support |
| Matillion | Cloud warehouse transforms | Visual pipelines, 10x faster SQL development |
| SnapLogic | iPaaS & enterprise apps | AI-assisted integration design |
| Skyvia | Small teams & no-code use | G2 4.8/5, visual point-and-click setup |
| AWS Glue | AWS-native workloads | Serverless, usage-based, auto schema detection |
| Nexla | AI-ready data products | Agentic AI, 550+ connectors, RAG support |

Most businesses today pull data from dozens of sources — CRMs, spreadsheets, SaaS apps, payment systems, and more. The problem is that none of these systems talk to each other naturally. Data sits in silos. Reports are out of date. Decisions get made on incomplete information.

Traditional ETL (Extract, Transform, Load) tools were built to solve this — but they required heavy engineering effort. Schema changes broke pipelines. New data sources meant new custom code. Maintenance never ended.

AI-powered data integration changes this equation. These tools use machine learning to automatically map fields between systems, detect anomalies before they become problems, and adapt when data structures change — all with far less manual work.

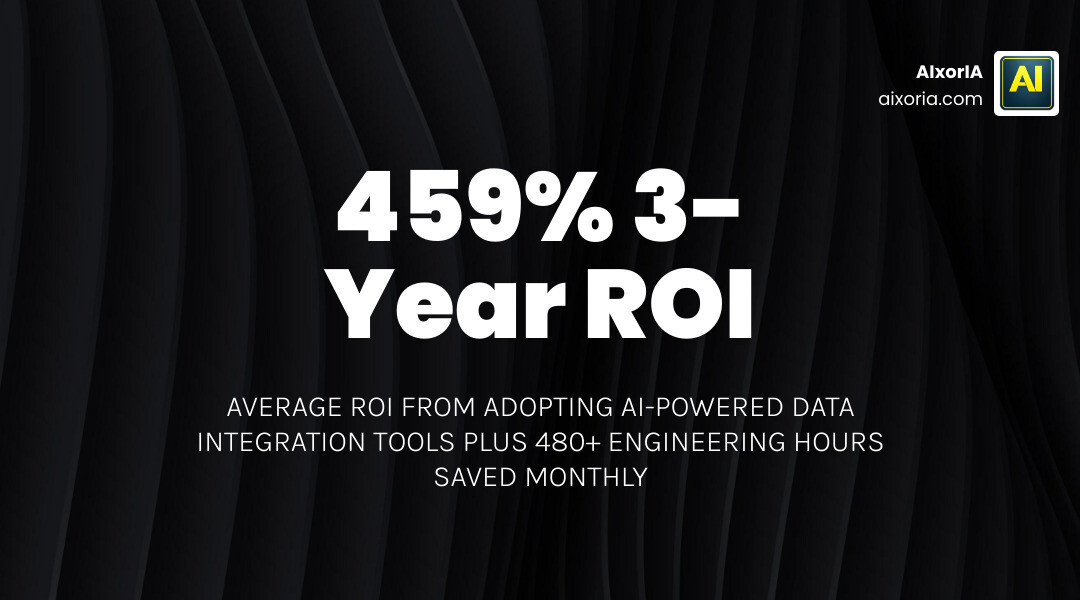

One real-world example makes this concrete: a fast-growing retail company saved over 480 hours of engineering work every month by switching to an AI-assisted integration platform. At roughly 160 working hours per person per month, that is the equivalent of about three full-time engineers freed up to work on higher-value tasks.

This guide walks you through the top tools, how to pick the right one, and what to watch out for — in plain language, without the jargon.

Defining the Best AI Tools for Data Integration in 2026

In 2026, the definition of data integration has shifted from manual “plumbing” to intelligent orchestration. The Best AI Tools for Data Integration serve as the nervous system of your business, connecting disparate apps like Salesforce, NetSuite, and Snowflake into a unified whole.

Unlike traditional methods that rely on rigid, hand-coded scripts, modern AI tools use “probabilistic” logic. This means the software doesn’t just wait for a human to tell it that “CustID” in one system matches “ClientNumber” in another; it infers these relationships based on the data itself.
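To make the idea of inferred mapping concrete, here is a minimal sketch in Python. It is not how any particular vendor does it. It simply scores each pair of columns by how similar the names are and how much the values overlap, then keeps the best match. The 30/70 weighting, the 0.5 threshold, and the sample data are all assumptions made up for illustration.

```python
from difflib import SequenceMatcher


def name_similarity(a: str, b: str) -> float:
    """Similarity of two column names, ignoring case (0.0 to 1.0)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def value_overlap(a: list, b: list) -> float:
    """Jaccard overlap of the distinct values seen in two columns."""
    set_a, set_b = set(a), set(b)
    if not set_a or not set_b:
        return 0.0
    return len(set_a & set_b) / len(set_a | set_b)


def infer_mapping(source: dict, target: dict, threshold: float = 0.5) -> dict:
    """Map each source column to its best-scoring target column.

    The weights and threshold are illustrative assumptions, not tuned values.
    """
    mapping = {}
    for s_name, s_values in source.items():
        scored = [
            (0.3 * name_similarity(s_name, t_name)
             + 0.7 * value_overlap(s_values, t_values), t_name)
            for t_name, t_values in target.items()
        ]
        score, best = max(scored)
        if score >= threshold:
            # Keep the score so a human can review low-confidence matches
            mapping[s_name] = (best, round(score, 2))
    return mapping


# Hypothetical sample columns from two systems
crm = {
    "CustID": [101, 102, 103, 104],
    "Email": ["a@x.com", "b@x.com", "c@x.com", "d@x.com"],
}
billing = {
    "ClientNumber": [101, 102, 103, 104, 105],
    "ContactEmail": ["a@x.com", "b@x.com", "c@x.com", "d@x.com", "e@x.com"],
}

print(infer_mapping(crm, billing))
```

Notice that "CustID" and "ClientNumber" barely resemble each other as names. The match comes almost entirely from the values, which is the point of letting the data speak for itself.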

One of the biggest headaches in data engineering is “schema drift.” This happens when a software provider adds a new column or changes a data type in their API. In the old days, this would break your entire pipeline. Today, the best AI tools for data integration detect these changes automatically and, for simple cases such as a newly added column, adjust the pipeline without human intervention. Riskier changes, such as a column changing type, are typically flagged for review.
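Schema drift detection is easier to picture with a small example. The sketch below compares the schema a pipeline expects with the schema seen in an incoming record and sorts the differences into added, removed, and retyped columns. The function names and sample record are hypothetical, and the rule that only added columns are safe to apply automatically is our assumption, not a standard.

```python
def infer_schema(record: dict) -> dict:
    """Build a {column: type_name} schema from one sample record."""
    return {column: type(value).__name__ for column, value in record.items()}


def detect_schema_drift(expected: dict, observed: dict) -> dict:
    """Compare two {column: type_name} schemas and report the differences."""
    added = {c: t for c, t in observed.items() if c not in expected}
    removed = {c: t for c, t in expected.items() if c not in observed}
    retyped = {
        c: (expected[c], observed[c])
        for c in expected.keys() & observed.keys()
        if expected[c] != observed[c]
    }
    return {"added": added, "removed": removed, "retyped": retyped}


# Schema the pipeline was built against (hypothetical)
expected = {"id": "int", "amount": "float", "currency": "str"}

# The source API now sends amount as text and includes a new field
record = {"id": 7, "amount": "19.99", "currency": "USD", "coupon_code": "SPRING"}

drift = detect_schema_drift(expected, infer_schema(record))

# Assumption: new columns can be added automatically,
# while removed or retyped columns should go to a human
safe_to_auto_apply = not drift["removed"] and not drift["retyped"]

print(drift)
print("auto-apply:", safe_to_auto_apply)
```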

For teams that still prefer coding, ETL in Python remains a powerful option, especially when combined with AI libraries that can suggest code snippets or automate documentation.

Traditional ETL vs AI-Driven ELT

| Feature | Traditional ETL | AI-Driven ELT |
|---|---|---|
| Architecture | Transform before loading | Load raw data, transform in warehouse |
| Schema Handling | Manual updates required | Automated schema drift detection |
| Mapping | Hard-coded rules | Intelligent, inferred mapping |
| Scalability | Limited by server hardware | Elastic cloud compute |
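The "load raw data, transform in warehouse" row can be sketched in a few lines. This example uses Python's built-in sqlite3 module as a stand-in for a cloud warehouse, which is an assumption made to keep it runnable anywhere. The table names and sample rows are invented. The raw data lands untouched first, and the cleanup happens afterwards in SQL.

```python
import sqlite3

# Messy rows exactly as they arrive from a hypothetical source system
raw_orders = [
    ("1001", " 19.99", "usd"),
    ("1002", "5.00 ", "USD"),
    ("1003", "n/a", "eur"),
]

conn = sqlite3.connect(":memory:")

# Load: land the raw rows as text, with no cleanup yet
conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT, currency TEXT)")
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_orders)

# Transform: clean and type the data inside the database with SQL.
# Rows whose amount does not start with a digit are left out of the clean table.
conn.execute(
    """
    CREATE TABLE orders AS
    SELECT CAST(order_id AS INTEGER)  AS order_id,
           CAST(TRIM(amount) AS REAL) AS amount,
           UPPER(currency)            AS currency
    FROM raw_orders
    WHERE TRIM(amount) GLOB '[0-9]*'
    """
)

clean = conn.execute(
    "SELECT order_id, amount, currency FROM orders ORDER BY order_id"
).fetchall()
raw_count = conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]

print(clean)
print("raw rows kept:", raw_count)
```

Because the raw table is kept, a bad transformation can be fixed and re-run without going back to the source system.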

Key Capabilities of the Best AI Tools for Data Integration

When we evaluate these tools, we look for several key “intelligent” features that separate the leaders from the laggards:

- Anomaly Detection: AI monitors your data flow and flags outliers—like a sudden spike in “zero-dollar” invoices—that might indicate a source system error.

- Predictive Optimization: Historical data is used to adjust batch sizes and parallelization, ensuring your pipelines run at peak efficiency.

- Intelligent Mapping: Teams such as Lume (which is joining Harvey) focus on using LLMs to map complex data structures and to attach a confidence score to each mapping.

- Natural Language Interfaces: Imagine asking your data tool, “Sync my Shopify orders to my accounting software and mask all customer names,” and having it build the pipeline for you.

- Automated Data Cleansing: AI identifies and merges duplicate records (deduplication) and fixes formatting errors (like inconsistent phone number formats) automatically.
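Two of the capabilities above, anomaly detection and automated cleansing, can be sketched with nothing but the Python standard library. This is a simplified illustration, not what a commercial tool runs: the anomaly check is a plain z-score with an assumed threshold of 3, and the deduplication matches records only on a normalized phone number. All sample data is made up.

```python
import re
from statistics import mean, stdev


def is_anomalous(history: list, today: float, z_threshold: float = 3.0) -> bool:
    """Flag today's value if it sits far outside the historical range."""
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return today != mu
    return abs(today - mu) / sigma > z_threshold


def normalize_phone(raw: str) -> str:
    """Keep digits only, so differently formatted numbers compare equal."""
    return re.sub(r"\D", "", raw)


def deduplicate(records: list) -> list:
    """Keep the first record seen for each normalized phone number."""
    seen, unique = set(), []
    for rec in records:
        key = normalize_phone(rec["phone"])
        if key not in seen:
            seen.add(key)
            unique.append({**rec, "phone": key})
    return unique


# Daily count of zero-dollar invoices over the past week (hypothetical)
zero_dollar_invoices_per_day = [2, 3, 1, 2, 4, 3, 2]
print(is_anomalous(zero_dollar_invoices_per_day, today=40))

customers = [
    {"name": "Ana Ruiz", "phone": "(555) 010-2000"},
    {"name": "Ana Ruiz", "phone": "555.010.2000"},
    {"name": "Li Wei", "phone": "555-010-3000"},
]
print(deduplicate(customers))
```

Real platforms use richer models (seasonality, fuzzy name matching, and so on), but the shape of the logic is the same: learn what normal looks like, then flag or fix what does not fit.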

Real-Time Streaming and Change Data Capture

For many businesses, waiting 24 hours for a data refresh is no longer acceptable. Real-time streaming allows for “sub-second latency,” which is vital for fraud detection or live inventory management.

The 9 Best Data Integration Tools for Cloud Services in 2026 often utilize Change Data Capture (CDC). Instead of scanning your entire database every hour, CDC reads the “transaction logs” to see exactly what changed. This approach ensures maximum data freshness without putting a heavy load on your production systems.
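Here is a rough sketch of what the receiving end of CDC does. Instead of copying the whole table, it replays a short list of change events against a copy of the data and remembers where it stopped. The event format (`seq`, `op`, `key`, `row`) is an assumption for illustration. Real transaction logs and CDC tools each have their own formats.

```python
def apply_change_log(replica: dict, events: list, last_applied: int = 0) -> int:
    """Apply ordered change events to a replica keyed by primary key.

    Returns the last applied sequence number so the next run can resume there.
    """
    for event in events:
        if event["seq"] <= last_applied:
            continue  # already applied in an earlier run
        if event["op"] in ("insert", "update"):
            replica[event["key"]] = event["row"]
        elif event["op"] == "delete":
            replica.pop(event["key"], None)
        last_applied = event["seq"]
    return last_applied


# Copy of the inventory table as of the last sync (hypothetical)
replica = {1: {"sku": "A-100", "stock": 12}}

# Only what changed since then, in log order
events = [
    {"seq": 1, "op": "update", "key": 1, "row": {"sku": "A-100", "stock": 11}},
    {"seq": 2, "op": "insert", "key": 2, "row": {"sku": "B-200", "stock": 40}},
    {"seq": 3, "op": "delete", "key": 1, "row": None},
]

checkpoint = apply_change_log(replica, events)
print(replica, checkpoint)
```

Replaying the same events again with the saved checkpoint changes nothing, which is what makes this approach safe to retry after a failure.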

Strategic Selection Framework for Modern Data Teams

Choosing a tool isn’t just about features; it’s about fit. We recommend looking at three main pillars: data volume, compliance, and your team’s existing expertise.

If you are a smaller team, you might prioritize ease of use. If you are in a regulated industry like healthcare or finance, your focus will be on “field-level masking” and SOC2 compliance. You can explore a broader list in our guide to the 20 Best ETL Tools for Data Integration.
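Field-level masking sounds abstract, so here is one simple way it can work. The sketch replaces sensitive values with a keyed hash (HMAC-SHA256 from Python's standard library), so the same input always produces the same token and records can still be joined, but the original value is not stored. The field names, the secret, and the 16-character truncation are assumptions for illustration. A real deployment would follow its own compliance requirements and keep the key in a secrets manager.

```python
import hashlib
import hmac


def mask_fields(record: dict, sensitive: set, secret: bytes) -> dict:
    """Return a copy of the record with sensitive fields replaced by tokens."""
    masked = dict(record)
    for field in sensitive:
        if masked.get(field) is not None:
            digest = hmac.new(
                secret, str(masked[field]).encode("utf-8"), hashlib.sha256
            ).hexdigest()
            masked[field] = digest[:16]  # shortened for readability (assumption)
    return masked


# Hypothetical key; never hard-code a real one
SECRET = b"demo-only-secret"

patient = {"id": 42, "name": "Ana Ruiz", "ssn": "123-45-6789", "plan": "gold"}
masked = mask_fields(patient, {"name", "ssn"}, SECRET)
print(masked)
```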

For those managing massive datasets across global regions, the Most Reliable ETL Tools for Enterprise Data in 2026 offer the governance and audit logs required to keep regulators happy.

Choosing the Best AI Tools for Data Integration Based on Volume

Scalability is the silent killer of data budgets. Many tools use “consumption-based pricing,” where you pay for the number of rows moved. While this is great for getting started, it can lead to “invoice shock” as your business grows.

- Small Volumes: Look for tools with a generous free tier or flat-rate pricing.

- Enterprise Volumes: Look for Best ETL Tools in Data Warehouse in 2026 that offer predictable, credit-based models.

- Databricks Users: If you are heavily invested in the Lakehouse architecture, check out The 8 Best ETL Tools for Databricks in 2026 for native integrations that maximize performance.
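A quick calculation shows where "invoice shock" comes from. The prices below are invented purely for illustration and do not reflect any vendor's actual rates. The sketch compares a per-row price with a flat monthly fee and finds the volume at which the flat plan becomes cheaper.

```python
def consumption_cost(rows_per_month: int, price_per_million_rows: float) -> float:
    """Monthly bill under a pay-per-row plan."""
    return rows_per_month / 1_000_000 * price_per_million_rows


def break_even_rows(flat_monthly_fee: float, price_per_million_rows: float) -> float:
    """Monthly row count at which both plans cost the same."""
    return flat_monthly_fee / price_per_million_rows * 1_000_000


# Hypothetical pricing, made up for this example
PRICE_PER_MILLION = 10.0   # dollars per million rows moved
FLAT_FEE = 500.0           # dollars per month

for rows in (5_000_000, 50_000_000, 200_000_000):
    usage = consumption_cost(rows, PRICE_PER_MILLION)
    cheaper = "consumption" if usage < FLAT_FEE else "flat rate"
    print(f"{rows:>12,} rows: usage ${usage:,.2f} vs flat ${FLAT_FEE:,.2f} -> {cheaper}")

print("break-even rows:", break_even_rows(FLAT_FEE, PRICE_PER_MILLION))
```

Running the same numbers with your own row counts and quoted prices before signing a contract is a cheap way to see which model fits your growth.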

Low-Code and No-Code Accessibility for Business Units

In 2026, data isn’t just an “IT thing.” Marketing, Sales, and Operations teams often need to move data themselves. This is where No-Code ETL Tools in 2026 shine.

By providing visual interfaces, these tools allow a RevOps manager to sync Salesforce data to a warehouse without writing a single line of SQL. This provides a massive engineering ROI because your expensive data engineers can focus on building AI models rather than fixing broken CSV imports. For companies living entirely in the cloud, the Best ETL Tools for SaaS Companies in 2026 offer pre-built connectors that can be set up in minutes.

Emerging Trends: Agentic AI and Composable Architectures

The most exciting development in 2026 is the rise of Agentic AI in data pipelines. These aren’t just static tools; they are “AI Agents” that can autonomously manage the data lifecycle.

Using the Model Context Protocol (MCP), these agents can understand the context of the data they are moving. For example, an agent might notice that a “discount” field in your sales data is being applied incorrectly and proactively suggest a fix to the transformation logic.

We are also seeing the rise of Composable Architectures, where you don’t buy one giant “do-it-all” platform. Instead, you pick the best-of-breed tools for ingestion, transformation, and governance. Developers can even find Best Free ETL Tools for Developers in 2026 to build custom, open-source components that plug into these larger systems.

Frequently Asked Questions about AI Data Integration

Will AI fully replace traditional data engineers?

No, but it will change their job. AI handles the repetitive “grunt work” like mapping and error checking. Human expertise is still vital for high-level workflow design, ensuring business alignment, and making final decisions on complex data governance. Think of AI as an assistant that makes a single engineer ten times more productive.

What is the average ROI of adopting AI-powered integration?

The numbers are impressive. Research shows an average three-year ROI of 459% for top-tier automated platforms. Beyond the money, the time-to-market for new data products is often slashed from weeks to hours. One logistics firm reported saving $1.2M annually by using AI to harmonize their supply chain data.

How does AI handle unstructured data sources?

This is a huge leap forward. Modern tools can now ingest PDFs, images, and text files, using LLMs to extract relevant data points and load them into structured databases. By using vector databases and semantic mapping, these tools make unstructured data “searchable” and ready for use in RAG (Retrieval-Augmented Generation) flows for internal AI chatbots.
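The retrieval step in a RAG flow can be illustrated with a toy example. Production systems use learned embeddings and a vector database. To keep this sketch runnable without extra libraries, it uses simple word counts as a stand-in for embeddings, which is a deliberate simplification. The text chunks are invented.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts (a stand-in for a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list, top_k: int = 1) -> list:
    """Return the top_k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:top_k]


# Text chunks extracted from PDFs and other files (hypothetical)
chunks = [
    "Invoice 4471 total due 1,250.00 USD payable within 30 days",
    "Employee handbook: vacation requests need manager approval",
    "Shipping policy: orders leave the warehouse within 2 business days",
]

print(retrieve("when is the invoice payable", chunks))
```

In a full RAG setup, the retrieved chunk would then be passed to an LLM along with the question so the chatbot can answer from your own documents.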

Conclusion

At AIxorIA, we believe that the Best AI Tools for Data Integration should be accessible to everyone, not just tech giants. Whether you are looking for custom AI solutions, tool training workshops to upskill your team, or performance audits to see where your current pipelines are leaking money, we are here to help.

Our mission is to provide simple language help, affordable services, and fast support to ensure your data is working for you, not the other way around. Ready to stop fighting with spreadsheets and start scaling? Empower your business with custom AI solutions and let us help you build a data foundation that is ready for the future.
