Artificial intelligence is moving from the margins of finance into the core of lending, trading, monitoring, and customer service. That is exactly why the Bank of England has become more vocal about AI risk: the issue is no longer whether AI improves efficiency, but whether it introduces new vulnerabilities into the financial system. In April 2025, the Bank’s Financial Policy Committee said AI matters to financial stability because it can create both benefits and potential risks, including systemic consequences when common weaknesses appear across widely used models.

For a US audience, this is not a UK-only story. The Bank of England’s 2026 priorities for international banks active in the UK say firms need robust risk management, governance, operational resilience, and data controls. They also note that advances in AI bring opportunities but can amplify risks from inaccurate data, reliance on a small number of third-party providers, and cyber threats. In other words, if your institution touches London markets, UK regulation can shape your global AI playbook.


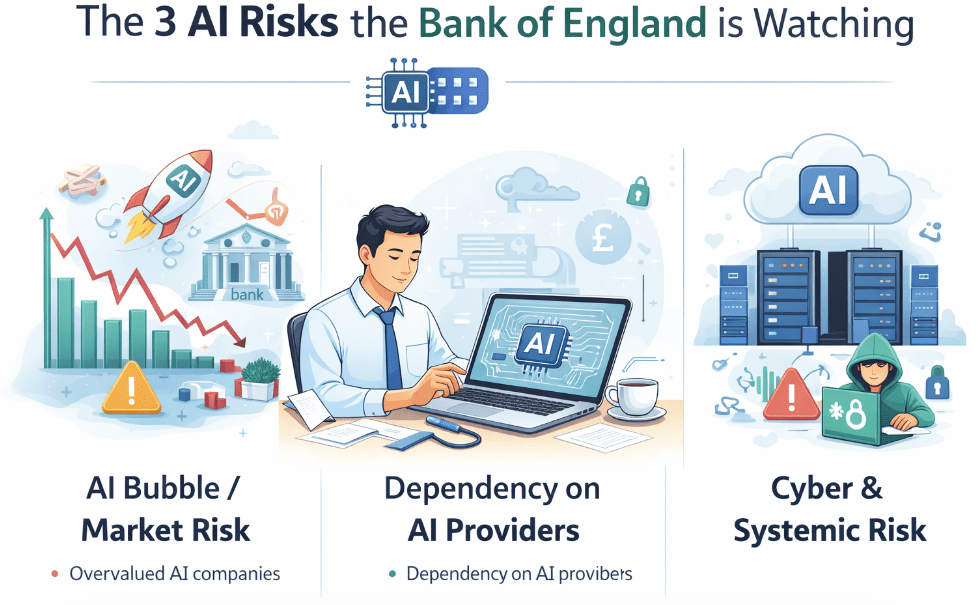

The three AI risks the Bank of England is really watching

The Bank’s concern is not just that an individual model may fail. It is that many firms may fail in the same way at the same time.

First is concentration risk. The Bank says AI-related services, especially vendor-provided models, can become concentrated in a small number of providers, and that growing concentration in AI services could increase risks to the financial system. It also warns that reliance on a small set of providers can create systemic issues if firms cannot migrate quickly during disruption.
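The Bank does not prescribe a metric for this, but one way a firm might put a number on its own provider concentration is the Herfindahl-Hirschman Index (HHI), a standard market-concentration measure swapped in here purely for illustration. The sketch below assumes each AI use case maps to a single provider and that shares are counted by number of use cases; the inventory and provider names are hypothetical.

```python
from collections import Counter


def provider_hhi(use_case_providers: dict[str, str]) -> float:
    """Herfindahl-Hirschman Index (0 to 10,000) of AI provider shares.

    Assumption: a provider's share is its count of use cases. A real
    program might weight by criticality, spend, or transaction volume.
    """
    if not use_case_providers:
        raise ValueError("inventory is empty")
    counts = Counter(use_case_providers.values())
    total = len(use_case_providers)
    # Sum of squared percentage shares: 10,000 means a single provider.
    return sum((100 * n / total) ** 2 for n in counts.values())


# Hypothetical inventory: AI use case -> provider
inventory = {
    "fraud_scoring": "vendor_a",
    "code_assistant": "vendor_a",
    "doc_search": "vendor_a",
    "credit_model": "in_house",
}

print(provider_hhi(inventory))  # 75% and 25% shares -> 6250.0
```

A rising score over time would signal growing dependence on one provider, which is exactly where the Bank's concern about slow migration during a disruption bites.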

Second is herding and procyclicality. The Bank notes that AI-driven trading and investment decisions could lead firms to take correlated positions and act in similar ways during stress, which can amplify shocks. It explicitly says herding in markets can contribute to procyclical fire sales, and that while risks of this kind are not new, AI may make them faster and harder to spot.

Third is black-box decisioning. The Bank’s financial stability paper discusses advanced “black box” models, including neural networks, used by principal trading firms and systematic hedge funds. The problem is not only accuracy; it is explainability. If firms cannot understand why a model made a decision, they may struggle to monitor it, validate it, or defend it after the fact.

Why US readers should care

The US connection is direct. The Federal Reserve’s SR 11-7 guidance remains the core reference point for model risk management in the US, and it says effective model risk management depends on sound development, implementation, and use of models, along with board and senior management oversight and rigorous validation. That framework maps closely to the issues the Bank of England is raising now around AI governance and control.

The Securities and Exchange Commission has also focused on the risks created by predictive data analytics and similar technologies in investor interactions. In its 2023 proposal, the SEC said such technologies may optimize for, predict, guide, forecast, or direct investment-related behaviors or outcomes, and it proposed rules requiring firms to eliminate or neutralize the effect of conflicts of interest linked to those technologies. In 2024, then-SEC Chair Gary Gensler said the agency was focused on how artificial intelligence affects investors, issuers, and the markets connecting them. The SEC withdrew that proposal in June 2025, but the conflict-of-interest concerns behind it have not gone away.

That matters because the same broad pattern is showing up on both sides of the Atlantic: regulators are not trying to kill AI; they are trying to stop hidden model risk from becoming a financial-stability problem.

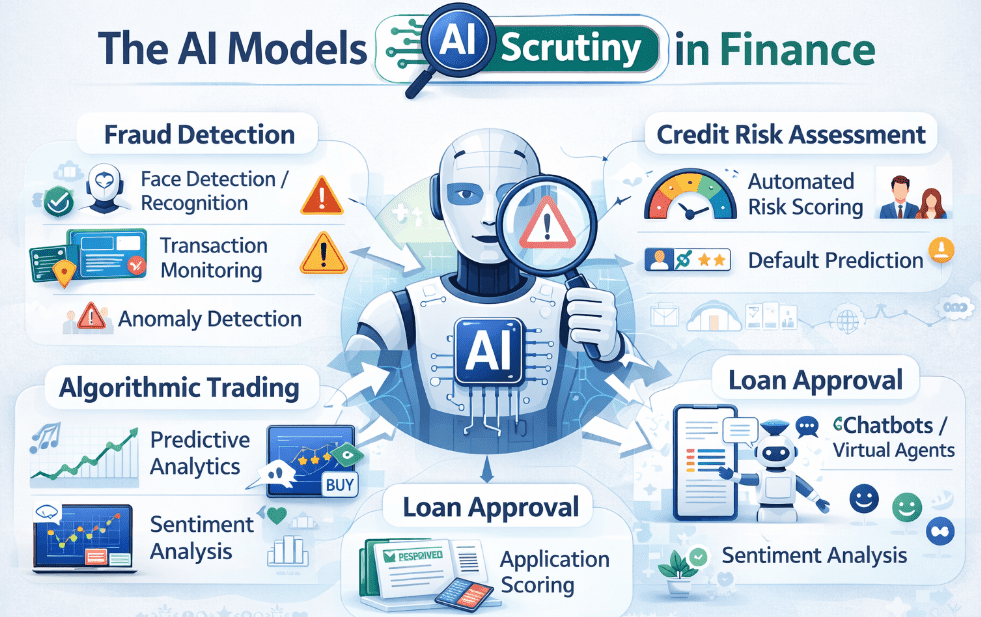

The AI models under scrutiny in finance

This is the part most generic articles miss. The central issue is not “AI tools” in the consumer-software sense. The real question is which financial models are being used, how they are governed, and what happens when they fail.

Algorithmic trading and systematic strategies are the most obvious concern. The Bank of England says more advanced AI-based trading models could lead firms to take increasingly correlated positions during stress, which can amplify shocks. It also notes that such models may exploit weaknesses in other firms’ strategies, or even learn behaviors that worsen stress events. For a compliance team, this means trading AI cannot be assessed only for performance; it has to be tested for market behavior, stress response, and unintended collective effects.

Credit scoring and underwriting models are another pressure point. The Bank warns that common weaknesses in widely used models could cause firms to misestimate risks, misprice credit, and misallocate capital. That is not a minor modeling error; it can translate into broader credit tightening, complaints, conduct issues, and potential redress if customers are harmed by decisions they cannot understand. For lenders, the governance question is whether the model is explainable enough to justify the decision path, not just statistically strong in a backtest.
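The article does not name a specific explainability technique. One long-standing approach in lending, sketched here under stated assumptions, is a linear scorecard whose per-feature contributions relative to a reference applicant can be read off directly as "reason codes." The feature names, weights, and baseline below are hypothetical, and more complex models would need other attribution methods.

```python
def reason_codes(
    weights: dict[str, float],
    applicant: dict[str, float],
    baseline: dict[str, float],
    top_n: int = 2,
) -> list[str]:
    """Return the features that pulled a linear score furthest below baseline."""
    # Each feature's contribution is its weight times its deviation
    # from the (hypothetical) reference applicant.
    contributions = {
        name: w * (applicant[name] - baseline[name]) for name, w in weights.items()
    }
    # Most negative contributions first: these hurt the score most.
    adverse = sorted((c, name) for name, c in contributions.items() if c < 0)
    return [name for _, name in adverse[:top_n]]


# Hypothetical scorecard and applicants
weights = {"utilization": -2.0, "months_on_file": 0.05, "late_payments": -1.5}
baseline = {"utilization": 0.3, "months_on_file": 60, "late_payments": 0}
applicant = {"utilization": 0.9, "months_on_file": 24, "late_payments": 2}

print(reason_codes(weights, applicant, baseline))
```

Here the output names late payments and a short credit history as the main drivers, which is the kind of decision path a lender could explain to a customer or regulator.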

Productivity and internal LLM deployments also matter, even if they are not customer-facing. The Bank notes that financial institutions increasingly rely on vendor-provided AI models, especially large language models, to boost productivity in tasks such as code generation and information retrieval. It also points out that even in-house model building can depend on cloud computing and external data aggregators. That means the risk surface includes procurement, outsourcing, data lineage, cyber resilience, and the ability to switch providers without breaking critical services.

What the Bank of England actually wants firms to do

The Bank’s posture is not “stop using AI.” It is “use AI without sacrificing safety and soundness.” In its 2026 letter to international banks active in the UK, the Prudential Regulation Authority (PRA), the Bank’s prudential supervisor, said advances in AI present opportunities, but firms must adopt them without compromising safety and soundness. The same letter notes that the PRA’s supervisory statement on model risk management came into effect in May 2024 and that firms should remediate any shortcomings as part of broader risk management improvements.

The PRA’s SS1/23 is especially important because it sets expectations for banks’ model risk management across all model types, regardless of technology. It says the framework applies to models developed in-house or externally, including vendor models, and it explicitly says firms should identify and manage the risks associated with artificial intelligence in modeling techniques such as machine learning. In short, the Bank has already written AI into its model-risk expectations; it is not waiting for a separate AI rulebook.

That is also why the Bank’s 2026 guidance keeps returning to the same themes: accurate data, third-party concentration, cyber risk, governance, and resilience. The throughline is simple. AI is acceptable only if a firm can explain, validate, monitor, and recover from it.

A practical compliance checklist for banks and fintechs

A strong AI program in financial services should be able to answer six questions clearly:

Who owns the model?

What decision does it influence?

What data does it depend on?

How is it validated before and after deployment?

What happens if the vendor fails or the model behaves unexpectedly?

How can the firm explain the output to regulators and customers?

If the answers are vague, the institution is not ready.
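One way to make "vague" testable is to record the six answers in a structured inventory entry and flag anything blank or filled with a placeholder. This is a minimal sketch; the field names and the placeholder list are hypothetical, and a presence check is no substitute for human review of whether an answer is actually good.

```python
from dataclasses import dataclass, fields

# Hypothetical placeholder answers treated as "not really answered"
VAGUE_ANSWERS = {"", "tbd", "n/a", "unknown"}


@dataclass
class ModelRecord:
    """One inventory entry answering the six checklist questions."""

    owner: str = ""
    decision_influenced: str = ""
    data_dependencies: str = ""
    validation_approach: str = ""
    failure_plan: str = ""
    explanation_method: str = ""


def unanswered(record: ModelRecord) -> list[str]:
    """Return checklist fields that are blank or hold a placeholder."""
    return [
        f.name
        for f in fields(record)
        if getattr(record, f.name).strip().lower() in VAGUE_ANSWERS
    ]


def is_ready(record: ModelRecord) -> bool:
    return not unanswered(record)


record = ModelRecord(
    owner="Head of Retail Credit Risk",
    decision_influenced="Unsecured loan approval",
    data_dependencies="Credit bureau feed",
    validation_approach="TBD",
    failure_plan="",
    explanation_method="Reason codes from scorecard",
)

print(unanswered(record))  # ['validation_approach', 'failure_plan']
```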

Firms should also maintain a live inventory of AI use cases, especially where models affect credit, pricing, trading, fraud, or customer treatment. The Bank’s 2026 priorities emphasize robust risk management, data quality, and the need to understand the full chain of dependencies, including sub-outsourcing. That means a compliance team should know not just which model is used, but which cloud provider, which data vendor, and which fallback path sits behind it.
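To see how a dependency chain can surface hidden concentration, the sketch below assumes each inventory entry lists every link it relies on (model vendor, cloud host, data feeds, including sub-outsourced ones) plus an optional fallback. It then reports dependencies shared by more than one use case and use cases with no fallback. All names and the data layout are hypothetical.

```python
from collections import defaultdict

# Hypothetical inventory: full dependency chain and fallback per AI use case
inventory = {
    "fraud_scoring": {"deps": ["vendor_a", "cloud_x", "data_feed_1"], "fallback": "rules_engine"},
    "loan_pricing": {"deps": ["in_house_model", "cloud_x", "data_feed_1"], "fallback": None},
    "chat_assistant": {"deps": ["vendor_b", "cloud_y"], "fallback": "human_agents"},
}


def shared_dependencies(inv: dict[str, dict]) -> dict[str, list[str]]:
    """Map each dependency used by more than one use case to those use cases."""
    users: dict[str, list[str]] = defaultdict(list)
    for use_case, entry in inv.items():
        for dep in entry["deps"]:
            users[dep].append(use_case)
    return {dep: sorted(ucs) for dep, ucs in users.items() if len(ucs) > 1}


def missing_fallbacks(inv: dict[str, dict]) -> list[str]:
    """List use cases with no documented fallback path."""
    return sorted(uc for uc, entry in inv.items() if not entry.get("fallback"))


print(shared_dependencies(inventory))
print(missing_fallbacks(inventory))  # ['loan_pricing']
```

In this toy example, an in-house pricing model and a vendor fraud model look independent, yet both sit on the same cloud host and data feed, and the pricing model has nowhere to fail over to.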

The real US takeaway

For US banks, fintechs, and asset managers, the smartest way to read the Bank of England is as an early warning system. The Bank is signaling that AI risk is now a governance issue, a market-structure issue, and an operational-resilience issue at the same time. The institutions that will be safest in 2026 are not the ones using the most AI; they are the ones that can prove control, explainability, and resilience under stress.

That is also where the US regulatory landscape is heading. The Federal Reserve’s model-risk framework and the SEC’s AI-related conflict concerns both point in the same direction: if a system makes or shapes financial decisions, it needs governance strong enough to survive scrutiny.

Conclusion

The phrase “Bank of England AI risk” is really shorthand for a bigger question: how do you let financial institutions use powerful AI without turning that power into a systemic vulnerability? The Bank of England’s answer is becoming clearer in 2026. It wants firms to use AI, but only with disciplined model risk management, strong data controls, third-party resilience, and enough explainability to defend the result when something goes wrong.

For US readers, the lesson is not to wait for a crisis. The firms that treat AI governance as a core control function now will be the ones best positioned to scale it later.
