Modern data teams don’t just move data—they transform, orchestrate, and operationalize it across complex environments. When working with Databricks, ETL (Extract, Transform, Load) tools play a critical role in building scalable pipelines, integrating multiple data sources, and enabling real-time analytics.

Databricks itself provides strong native capabilities with Delta Lake and Spark, but many organizations still pair it with external ETL tools to handle orchestration, ingestion, transformation logic, and governance at scale.

The challenge is not finding an ETL tool—it’s finding the right one that integrates seamlessly with Databricks, supports modern data architectures, and increasingly, leverages AI to automate workflows and reduce manual effort.

This guide covers the best ETL tools for Databricks in 2026, with a focus on scalability, ease of use, integrations, and AI-driven capabilities.

What Makes the Best ETL Tool for Databricks?

Not all ETL platforms are optimized for Databricks environments. The best tools typically offer:

Native or deep integration with Databricks

Support for Delta Lake, Apache Spark, and seamless data transfer between cloud storage and Databricks clusters.

Scalability and performance

Ability to handle large datasets, distributed processing, and real-time or near real-time pipelines.

Ease of use with flexibility

Low-code or no-code interfaces for faster development, without limiting advanced customization.

Orchestration and automation

Built-in scheduling, monitoring, and workflow management.

AI-powered pipeline generation

Many modern ETL tools now use AI to help generate transformations, detect schema changes, and tune pipelines with less manual intervention.

The Best ETL Tools for Databricks at a Glance

| Tool | Best For | Key Strength |

|---|---|---|

| Fivetran | Automated data ingestion | Fully managed pipelines |

| Matillion | ELT for cloud warehouses | Native Databricks integration |

| Apache Airflow | Workflow orchestration | Open-source flexibility |

| Talend | Enterprise ETL | Data governance + integration |

| Informatica | Enterprise-scale pipelines | AI-driven data management |

| Azure Data Factory | Microsoft ecosystem | Deep Azure + Databricks integration |

| Hevo Data | Real-time pipelines | No-code simplicity |

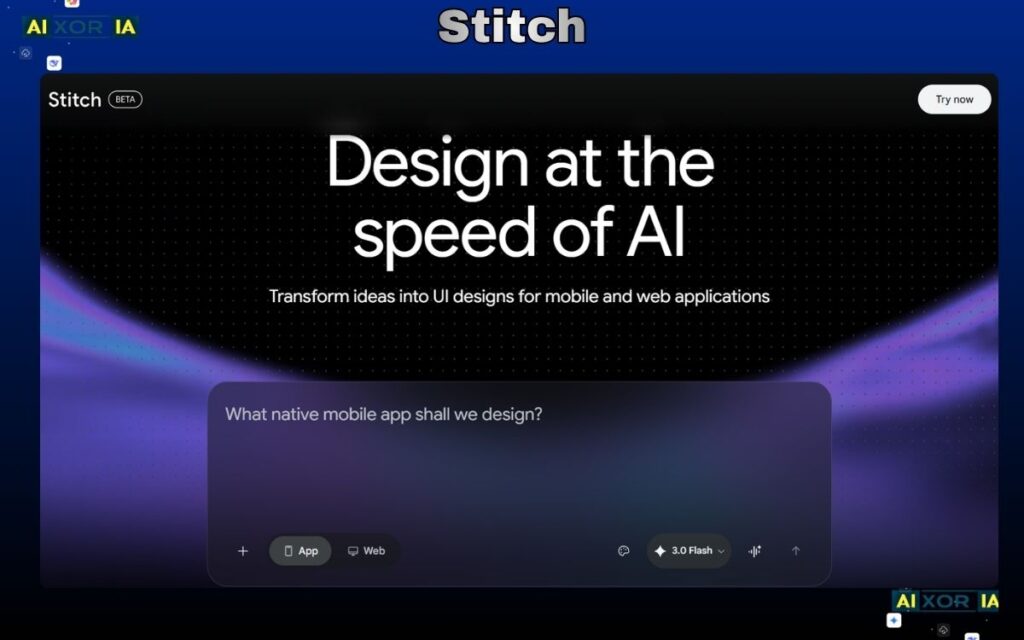

| Stitch | Lightweight ETL | Fast setup |

Best ETL Tool for Automated Data Pipelines

Fivetran

Fivetran is one of the most widely used ETL tools for modern data stacks, especially when working with Databricks.

It focuses on automated data ingestion: teams connect hundreds of data sources (databases, SaaS tools, APIs) and sync them into Databricks or into the cloud storage that Databricks reads from. The platform handles schema changes automatically, reducing maintenance overhead.

What makes Fivetran particularly valuable is its reliability and minimal setup. Once configured, pipelines sync on a schedule with little manual intervention.

From an AI perspective, Fivetran relies more on automation than on AI: it detects schema drift and propagates schema changes to the destination, which helps prevent pipeline failures. While it’s not an AI-driven transformation tool, this automation layer significantly reduces the need for manual pipeline management.
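Fivetran does not publish how its drift detection works internally, so the following is only a minimal sketch of the general idea, assuming schemas are available as plain `{column: type}` dictionaries. It compares the destination table's columns with what the source reports now and emits Databricks `ALTER TABLE ... ADD COLUMNS` statements for new columns only. The helper names and the table name `main.crm.users` are hypothetical.

```python
# Minimal schema-drift check (illustrative; not Fivetran's implementation).
# Schemas are assumed to be plain {column_name: type_name} dicts.
from dataclasses import dataclass, field


@dataclass
class SchemaDiff:
    added: dict[str, str] = field(default_factory=dict)  # new in the source
    removed: list[str] = field(default_factory=list)  # missing from the source
    retyped: dict[str, tuple[str, str]] = field(default_factory=dict)  # (old, new) type

    @property
    def has_drift(self) -> bool:
        return bool(self.added or self.removed or self.retyped)


def diff_schemas(destination: dict[str, str], source: dict[str, str]) -> SchemaDiff:
    """Compare the destination table's schema with the schema the source reports now."""
    diff = SchemaDiff()
    for name, src_type in source.items():
        if name not in destination:
            diff.added[name] = src_type
        elif destination[name] != src_type:
            diff.retyped[name] = (destination[name], src_type)
    diff.removed = [name for name in destination if name not in source]
    return diff


def additive_ddl(table: str, diff: SchemaDiff) -> list[str]:
    """Emit DDL for new columns only; removed/retyped columns are left for review."""
    return [f"ALTER TABLE {table} ADD COLUMNS ({name} {typ})" for name, typ in diff.added.items()]


if __name__ == "__main__":
    destination = {"id": "BIGINT", "email": "STRING", "signup_ts": "TIMESTAMP"}
    source = {"id": "BIGINT", "email": "STRING", "signup_ts": "STRING", "plan": "STRING"}
    diff = diff_schemas(destination, source)
    print(diff)
    print(additive_ddl("main.crm.users", diff))  # hypothetical table name
```

Applying additive changes automatically while routing type changes and dropped columns to a person is one conservative policy chosen for this sketch, not necessarily the one any given tool uses.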

Best for: Fully managed ingestion pipelines

Limitation: Limited transformation capabilities compared to transformation-focused tools such as Matillion

Best ETL Tool for Cloud ELT Workflows

Matillion

Matillion is designed specifically for cloud-based data platforms and integrates well with Databricks for ELT (Extract, Load, Transform) workflows.

It provides a visual interface where users design data pipelines from drag-and-drop components, making it accessible to both engineers and analysts. Matillion pushes transformations down to Databricks, generating SQL that Spark executes, so the heavy processing runs on Databricks compute rather than in Matillion itself.
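Pushdown means the ELT tool generates SQL and Databricks executes it. The following is a minimal sketch of that idea, not Matillion's code generator: a hypothetical helper, `build_merge_sql`, turns simple table metadata into a Delta Lake `MERGE INTO` upsert, the kind of statement a pushdown job might send to Databricks. Table and column names are illustrative.

```python
# Sketch of pushdown ELT: generate SQL here, let Databricks (Spark) execute it.
# build_merge_sql is a hypothetical helper; identifiers are not escaped, so only
# pass trusted, validated table and column names.


def build_merge_sql(target: str, source: str, keys: list[str], columns: list[str]) -> str:
    """Build a Delta Lake MERGE (upsert) from target/source tables and column lists."""
    if not keys:
        raise ValueError("at least one merge key is required")
    on_clause = " AND ".join(f"t.{k} = s.{k}" for k in keys)
    lines = [f"MERGE INTO {target} AS t", f"USING {source} AS s", f"ON {on_clause}"]
    non_keys = [c for c in columns if c not in keys]
    if non_keys:
        # Existing rows: overwrite every non-key column with the incoming value.
        set_clause = ", ".join(f"{c} = s.{c}" for c in non_keys)
        lines.append(f"WHEN MATCHED THEN UPDATE SET {set_clause}")
    # New rows: insert all columns.
    insert_cols = ", ".join(columns)
    insert_vals = ", ".join(f"s.{c}" for c in columns)
    lines.append(f"WHEN NOT MATCHED THEN INSERT ({insert_cols}) VALUES ({insert_vals})")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_merge_sql(
        target="main.sales.orders",
        source="main.staging.orders_updates",
        keys=["order_id"],
        columns=["order_id", "status", "amount"],
    ))
```

The design point is the split of responsibilities: the tool only builds the statement, while Spark performs the join and the write inside Databricks.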

AI capabilities in Matillion are evolving, with features that assist in pipeline recommendations, anomaly detection, and workflow optimization. These AI enhancements help teams reduce development time and improve pipeline efficiency over time.

Best for: Cloud-native ELT workflows

Limitation: Requires some technical understanding for advanced use cases

Best Open-Source Orchestration Tool

Apache Airflow

Apache Airflow is not a traditional ETL tool but a powerful orchestration platform widely used alongside Databricks.

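It allows teams to define, schedule, and monitor workflows in Python, which makes it flexible enough for complex data pipelines. Airflow integrates with Databricks through the operators in the apache-airflow-providers-databricks package (for example, DatabricksRunNowOperator and DatabricksSubmitRunOperator), which trigger Databricks jobs, optionally on new job clusters, and track their execution.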
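At its core, an orchestrator resolves task dependencies and runs each task only after its upstream tasks succeed. The following is a conceptual sketch of that behavior using Python's standard-library `graphlib` (Python 3.9+); it is not Airflow's API, and the task names are hypothetical. In a real Airflow DAG, each step would typically be an operator, such as a Databricks job trigger, with dependencies declared using `>>`. The status strings loosely mirror Airflow's task states.

```python
# Conceptual orchestrator: run tasks in dependency order and skip anything
# downstream of a failure. Not Airflow's API; task names are hypothetical.
from graphlib import TopologicalSorter
from typing import Callable


def run_dag(tasks: dict[str, Callable[[], None]],
            upstream: dict[str, set[str]]) -> dict[str, str]:
    """Run each task after its upstream tasks; return a status per task."""
    graph = {name: upstream.get(name, set()) for name in tasks}
    unknown = {p for parents in graph.values() for p in parents} - set(tasks)
    if unknown:
        raise ValueError(f"unknown upstream tasks: {sorted(unknown)}")
    status: dict[str, str] = {}
    # static_order() yields every task after all of its dependencies.
    for name in TopologicalSorter(graph).static_order():
        if any(status.get(parent) != "success" for parent in graph[name]):
            status[name] = "upstream_failed"  # don't run on bad or missing inputs
            continue
        try:
            tasks[name]()
            status[name] = "success"
        except Exception:
            status[name] = "failed"
    return status


if __name__ == "__main__":
    def fail() -> None:
        raise RuntimeError("Databricks job failed")  # simulated failure

    tasks = {
        "ingest": lambda: None,
        "transform": fail,
        "quality_check": lambda: None,
        "publish": lambda: None,
    }
    upstream = {"transform": {"ingest"}, "quality_check": {"transform"},
                "publish": {"quality_check"}}
    print(run_dag(tasks, upstream))
```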

AI integration in Airflow is mostly indirect, but teams often combine it with AI-driven tools or custom machine learning models to optimize scheduling, detect failures, and improve pipeline efficiency.

Best for: Custom orchestration and complex workflows

Limitation: Requires engineering expertise and setup

Best Enterprise ETL Platform

Talend

Talend is a comprehensive ETL platform used by enterprises for data integration, governance, and quality management.

It supports Databricks environments and offers a wide range of connectors, transformation tools, and data quality features. Talend is particularly strong in handling compliance and governance requirements.

Its AI capabilities focus on data quality and pipeline optimization. Talend uses machine learning to detect anomalies, suggest data transformations, and improve data accuracy over time.
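Talend's models are not described here, so the following substitutes a deliberately simple statistical check for machine learning: a z-score test that flags a load whose row count is far from recent history. It is a minimal sketch; the function name `is_anomalous` and the default threshold of 3 standard deviations are assumptions chosen for illustration.

```python
# Row-count anomaly check: a simple z-score stand-in for the ML-based
# detection enterprise tools advertise. The threshold is an assumption.
from statistics import mean, stdev


def is_anomalous(history: list[int], latest: int, threshold: float = 3.0) -> bool:
    """True if `latest` lies more than `threshold` standard deviations from recent history."""
    if len(history) < 2:
        return False  # too little history to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu  # history is perfectly stable, so any change stands out
    return abs(latest - mu) / sigma > threshold


if __name__ == "__main__":
    recent_loads = [1000, 1020, 980, 1010, 990]  # rows loaded in the last five runs
    for todays_rows in (1015, 200):
        print(todays_rows, is_anomalous(recent_loads, todays_rows))
```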

Best for: Enterprise data integration and governance

Limitation: Can be complex and resource-intensive

Best AI-Powered Enterprise ETL Platform

Informatica

Informatica is one of the most advanced ETL platforms, especially when it comes to AI-driven data management.

Its CLAIRE AI engine is designed to automate many aspects of ETL, including data discovery, mapping, transformation recommendations, and anomaly detection. This makes it particularly useful for large organizations managing complex data ecosystems.
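CLAIRE's internals are proprietary, so the following does not reproduce them. It is a naive, hypothetical illustration of what automated mapping produces: a proposed source-to-target column mapping plus a list of columns that still need a human decision. It scores name similarity with Python's `difflib`; the 0.8 cutoff is an arbitrary assumption.

```python
# Naive column-mapping suggestions by name similarity (illustrative only;
# not how CLAIRE works). The 0.8 cutoff is an arbitrary assumption.
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    """Lower-case and drop common separators so 'First_Name' matches 'firstname'."""
    return name.lower().replace("_", "").replace("-", "").replace(" ", "")


def suggest_mapping(source_cols: list[str], target_cols: list[str],
                    min_score: float = 0.8) -> tuple[dict[str, str], list[str]]:
    """Greedily map each source column to its most similar unused target column."""
    mapping: dict[str, str] = {}
    unmatched: list[str] = []
    available = list(target_cols)
    for src in source_cols:
        best, best_score = None, 0.0
        for tgt in available:
            score = SequenceMatcher(None, normalize(src), normalize(tgt)).ratio()
            if score > best_score:
                best, best_score = tgt, score
        if best is not None and best_score >= min_score:
            mapping[src] = best
            available.remove(best)  # each target column is used at most once
        else:
            unmatched.append(src)  # needs a human decision
    return mapping, unmatched


if __name__ == "__main__":
    mapping, todo = suggest_mapping(
        ["CustomerID", "First_Name", "e-mail", "zip"],
        ["customer_id", "first_name", "email", "postal_code"],
    )
    print(mapping)
    print("needs review:", todo)
```

Greedy name matching is the simplest possible choice; a more capable mapper would also weigh data types, sample values, and past mapping decisions.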

Informatica integrates with Databricks to enable scalable data pipelines while maintaining governance and compliance. Its AI capabilities reduce manual work and improve pipeline reliability.

Best for: Large-scale, AI-driven data pipelines

Limitation: High cost and complexity

Best ETL Tool for Microsoft-Based Data Stacks

Azure Data Factory

Azure Data Factory (ADF) is a natural choice for organizations using Microsoft Azure alongside Databricks.

ADF provides a visual interface for building data pipelines, along with strong integration with Azure services such as Azure Data Lake Storage, Azure Synapse Analytics, and Azure Databricks. It supports both ETL and ELT workflows.

AI features in ADF include automated data mapping, anomaly detection, and integration with Azure AI services for advanced analytics. These capabilities help streamline pipeline creation and monitoring.
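On the Databricks side, ADF pipelines can call Databricks through activities such as the Databricks Notebook activity. The following minimal sketch builds such a pipeline definition as JSON from Python: two notebook activities, where the second runs only if the first succeeds. The pipeline, activity, notebook path, and linked service names are hypothetical, and the JSON shape should be checked against the ADF documentation before deploying.

```python
# Build an Azure Data Factory pipeline definition with two Databricks Notebook
# activities chained on success. All names and paths are hypothetical; verify
# the schema against the ADF docs before deploying.
import json
from typing import Optional


def notebook_activity(name: str, notebook_path: str, params: dict[str, str],
                      linked_service: str = "AzureDatabricksLS",
                      depends_on: Optional[str] = None) -> dict:
    activity = {
        "name": name,
        "type": "DatabricksNotebook",
        "linkedServiceName": {"referenceName": linked_service,
                              "type": "LinkedServiceReference"},
        "typeProperties": {"notebookPath": notebook_path, "baseParameters": params},
    }
    if depends_on:
        # Run only after the named activity finishes with status Succeeded.
        activity["dependsOn"] = [{"activity": depends_on,
                                  "dependencyConditions": ["Succeeded"]}]
    return activity


def build_pipeline() -> dict:
    ingest = notebook_activity("IngestRaw", "/Repos/etl/ingest_raw", {"source": "crm"})
    refine = notebook_activity(
        "BuildCurated", "/Repos/etl/build_curated",
        # ADF expression, evaluated by ADF at run time.
        {"run_date": "@{formatDateTime(utcnow(), 'yyyy-MM-dd')}"},
        depends_on="IngestRaw",
    )
    return {"name": "DatabricksNotebookPipeline",
            "properties": {"activities": [ingest, refine]}}


if __name__ == "__main__":
    print(json.dumps(build_pipeline(), indent=2))
```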

Best for: Azure + Databricks environments

Limitation: Less flexible outside the Microsoft ecosystem

Best No-Code ETL Tool for Real-Time Pipelines

Hevo Data

Hevo Data is a no-code ETL platform designed for speed and simplicity.

It allows teams to set up real-time data pipelines without writing code, making it ideal for startups and growing teams. Hevo integrates with Databricks and supports a wide range of data sources.

AI features in Hevo focus on automation, including schema detection, error handling, and pipeline optimization. These capabilities reduce manual effort and improve reliability.
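Hevo's retry logic is not described here; the following is a minimal sketch of one common error-handling building block, retrying transient failures with capped exponential backoff and jitter. `TransientError` and `with_retries` are hypothetical names, and which errors count as transient is an assumption each pipeline has to define.

```python
# Retry transient failures with capped exponential backoff and full jitter.
# TransientError and with_retries are hypothetical names for this sketch.
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Marker for retryable failures such as timeouts or rate limits."""


def with_retries(fn: Callable[[], T], max_attempts: int = 5, base_delay: float = 0.5,
                 max_delay: float = 30.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn(), retrying only on TransientError; re-raise after the last attempt."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # give up and surface the error (e.g., to alerting)
            # Delay ceiling doubles each attempt: 0.5s, 1s, 2s, ... capped at max_delay.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, ceiling))  # jitter avoids synchronized retries
    raise AssertionError("unreachable")
```

Errors other than `TransientError` (for example, bad credentials) are not caught, so they fail fast instead of being retried; the injectable `sleep` parameter makes the function easy to test without real delays.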

Best for: No-code, real-time data pipelines

Limitation: Limited customization for advanced use cases

Best Lightweight ETL Tool for Quick Setup

Stitch

Stitch is a simple ETL tool focused on fast setup and ease of use.

It’s ideal for small teams or projects that need to quickly move data into Databricks without complex configurations. Stitch supports a variety of data sources and offers straightforward pipeline management.
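Lightweight tools, Stitch included, support key-based incremental replication: remember the highest value of a replication key such as `updated_at`, and on the next run read only rows at or above it. The following is a minimal sketch of that pattern using Python's built-in `sqlite3` as a stand-in source; it is not Stitch's implementation, and the `orders` table is hypothetical.

```python
# Key-based incremental extraction against a stand-in SQLite source.
# Table and column names are hypothetical; identifiers are interpolated into
# SQL, so in real code validate them against an allow-list.
import sqlite3
from typing import Any, Optional


def extract_incremental(conn: sqlite3.Connection, table: str, replication_key: str,
                        bookmark: Optional[Any]) -> tuple[list[dict[str, Any]], Optional[Any]]:
    """Return rows changed since `bookmark` and the bookmark to save for the next run."""
    if bookmark is None:
        # First run: nothing saved yet, so read the whole table.
        cur = conn.execute(f"SELECT * FROM {table} ORDER BY {replication_key}")
    else:
        # Inclusive (>=) so rows sharing the last bookmark value are not missed;
        # the destination must upsert by primary key to absorb re-read rows.
        cur = conn.execute(
            f"SELECT * FROM {table} WHERE {replication_key} >= ? ORDER BY {replication_key}",
            (bookmark,),
        )
    columns = [d[0] for d in cur.description]
    rows = [dict(zip(columns, r)) for r in cur.fetchall()]
    new_bookmark = rows[-1][replication_key] if rows else bookmark
    return rows, new_bookmark


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT, updated_at TEXT)")
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)",
                     [(1, "new", "2026-01-01T10:00:00"), (2, "new", "2026-01-02T09:00:00")])
    rows, bookmark = extract_incremental(conn, "orders", "updated_at", None)  # full sync
    conn.execute("UPDATE orders SET status = 'shipped', "
                 "updated_at = '2026-01-03T08:00:00' WHERE id = 1")
    rows, bookmark = extract_incremental(conn, "orders", "updated_at", bookmark)  # incremental
    print(rows, bookmark)
```

ISO-8601 timestamps stored as text compare correctly as strings, which is why the sketch can use them directly as the replication key.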

AI capabilities are minimal compared to other tools, but its simplicity and reliability make it a practical choice for lightweight use cases.

Best for: Quick and simple ETL pipelines

Limitation: Limited advanced features and scalability

How to Choose the Right ETL Tool for Databricks

The best tool depends on your use case:

- For automated ingestion → Fivetran

- For cloud ELT workflows → Matillion

- For orchestration → Apache Airflow

- For enterprise governance → Talend or Informatica

- For Azure environments → Azure Data Factory

- For no-code pipelines → Hevo Data

- For simple setups → Stitch

Many organizations combine tools—for example, using Fivetran for ingestion and Airflow for orchestration.

The Role of AI in ETL for Databricks (2026)

AI is transforming ETL workflows by reducing manual effort and improving efficiency.

Modern ETL tools now offer:

- Automated schema detection

- Intelligent pipeline recommendations

- Error prediction and anomaly detection

- AI-assisted transformation generation
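Automated schema detection, the first capability in that list, can be illustrated without any AI: sample some records, infer a type per field, and widen types when records disagree. The following is a minimal, rule-based sketch assuming JSON-like records (Python dicts) and Databricks SQL type names; the widening rules (integer plus float becomes `DOUBLE`, any other conflict falls back to `STRING`) are assumptions chosen for illustration, not any vendor's behavior.

```python
# Rule-based schema inference from sample records (illustrative; not any
# vendor's algorithm). Type names follow Databricks SQL; widening rules are assumptions.
from datetime import datetime
from typing import Any


def infer_type(value: Any) -> str:
    if value is None:
        return "NULL"
    if isinstance(value, bool):  # check before int: bool is a subclass of int
        return "BOOLEAN"
    if isinstance(value, int):
        return "BIGINT"
    if isinstance(value, float):
        return "DOUBLE"
    if isinstance(value, str):
        try:
            datetime.fromisoformat(value)
            return "TIMESTAMP"
        except ValueError:
            return "STRING"
    return "STRING"  # nested lists/dicts are kept as raw JSON strings here


def widen(a: str, b: str) -> str:
    """Combine two observed types for the same field."""
    if a == b:
        return a
    if a == "NULL":
        return b
    if b == "NULL":
        return a
    if {a, b} == {"BIGINT", "DOUBLE"}:
        return "DOUBLE"
    return "STRING"  # any other conflict falls back to the most permissive type


def infer_schema(records: list[dict[str, Any]]) -> dict[str, str]:
    schema: dict[str, str] = {}
    for record in records:
        for name, value in record.items():
            observed = infer_type(value)
            schema[name] = widen(schema[name], observed) if name in schema else observed
    # Fields that were null in every sample default to STRING.
    return {n: ("STRING" if t == "NULL" else t) for n, t in schema.items()}


if __name__ == "__main__":
    sample = [
        {"id": 1, "amount": 10, "paid": True, "created_at": "2026-01-15T10:30:00", "note": None},
        {"id": 2, "amount": 12.5, "paid": False, "created_at": "2026-01-16T08:00:00", "note": "rush"},
    ]
    print(infer_schema(sample))
```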

This shift allows data teams to focus more on analysis and less on pipeline maintenance.

Tools like Informatica, Matillion, and Azure Data Factory are leading this transformation with built-in AI capabilities that enhance productivity and scalability.

Final Thoughts

ETL tools are essential for unlocking the full potential of Databricks. While Databricks provides powerful processing capabilities, the right ETL platform ensures data flows smoothly, reliably, and efficiently across your ecosystem.

The best ETL tool for your organization depends on your architecture, team expertise, and scale:

- Start with your data sources and pipeline complexity

- Consider integration requirements and ecosystem compatibility

- Evaluate AI capabilities for long-term efficiency

In many cases, the optimal solution is not a single tool, but a combination that supports ingestion, transformation, orchestration, and governance across your data stack.
