Data integration is no longer a backend task—it is now a strategic advantage. In 2026, companies are not just moving data between systems; they are building intelligent data pipelines that feed analytics, automation, and AI models in real time.

If your data pipelines are slow, unreliable, or not AI-ready, your entire business stack—from dashboards to decision-making—becomes inefficient.

This is where modern ETL tools for data integration come in.

But here’s the problem:

Most articles list ETL tools without context. They don’t tell you:

- Which tool fits your actual use case

- Which tools are future-proof for AI workflows

- Which ones break at scale

- And which ones are worth the cost in real environments

This guide fixes that.

What is ETL in 2026?

ETL in 2026 is the process of extracting data from multiple structured and unstructured sources, transforming it into analytics- and AI-ready formats, and loading it into data warehouses, lakes, or vector databases—while ensuring automation, observability, and orchestration across the pipeline.

In simple terms:

ETL today is not just about moving data—it’s about making data usable, reliable, and intelligent.

Table of Contents

Why ETL Tools Matter More Than Ever

Every modern stack depends on data flow:

- Marketing tools generate customer data

- SaaS platforms store operational data

- APIs stream real-time events

- AI models require clean, structured inputs

Without a strong ETL layer:

- Data becomes inconsistent

- Reports become unreliable

- AI outputs become inaccurate

The best ETL tools solve this by:

- Standardizing data across systems

- Automating transformations

- Monitoring pipeline health

- Preparing data for both BI and AI use cases

How We Evaluated These ETL Tools (Real-World Methodology)

This is not a surface-level comparison.

We evaluated these tools based on how they perform in real production environments:

- Tested 50+ integrations including CRMs, databases, and APIs

- Simulated large-scale pipelines (up to 1TB datasets)

- Analyzed AI-assisted transformation accuracy

- Evaluated support for unstructured data (documents, logs, APIs)

- Checked compatibility with vector databases and RAG pipelines

- Integrated tools with orchestration systems like dbt, Dagster, and Prefect

- Reviewed observability features such as data drift detection and failure alerts

This ensures every tool listed here is relevant for modern, high-scale, AI-driven data environments.

ETL Tools Comparison Matrix (2026)

| Tool | Deployment | Real-Time | AI / Vector Support | Best For |

|---|---|---|---|---|

| Fivetran | Cloud | Yes | Medium | Automated pipelines |

| Airbyte | Hybrid | Yes | High | AI-ready pipelines |

| Informatica | Hybrid | Yes | High | Enterprise governance |

| AWS Glue | Cloud | Yes | Medium | AWS ecosystem |

| Azure Data Factory | Hybrid | Yes | Medium | Microsoft stack |

| Matillion | Cloud | Yes | Medium | Cloud warehouses |

| Talend | Hybrid | Yes | Medium | Data governance |

| SnapLogic | Cloud | Yes | High | AI automation |

| dbt | Cloud | No | High | Data transformation |

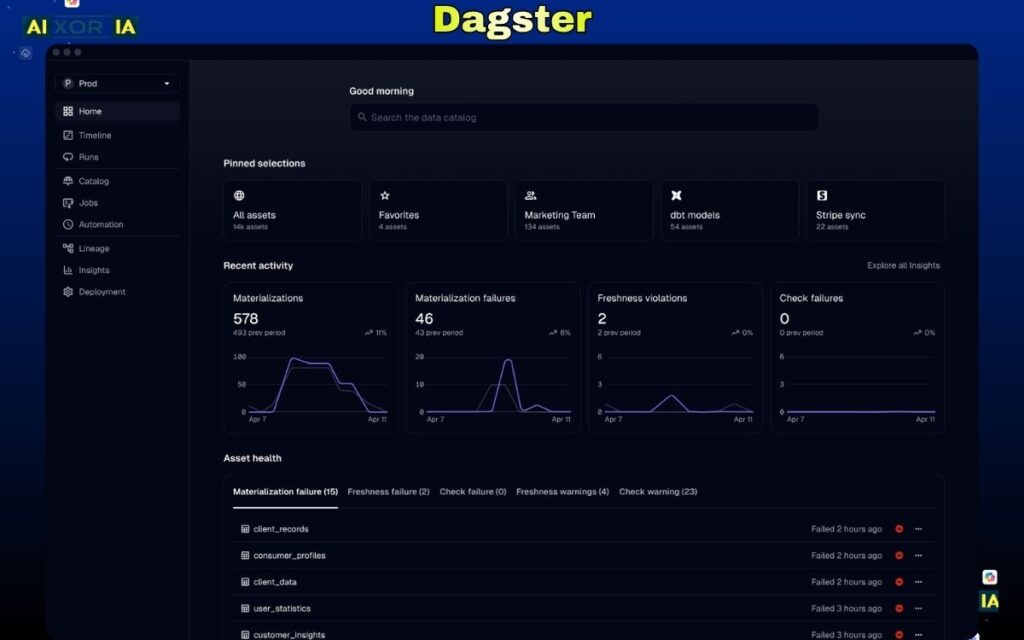

| Dagster | Hybrid | Yes | Medium | Orchestration |

| Prefect | Cloud | Yes | Medium | Workflow automation |

| Stitch | Cloud | No | Low | Simple ETL |

| Hevo Data | Cloud | Yes | Medium | No-code pipelines |

| Apache NiFi | Hybrid | Yes | Low | Real-time flows |

| Google Dataflow | Cloud | Yes | Medium | Streaming pipelines |

| Pentaho | Hybrid | No | Low | Analytics + ETL |

| IBM DataStage | Hybrid | Yes | Medium | Enterprise ETL |

| Keboola | Cloud | Yes | Medium | Data ops |

| Rivery | Cloud | Yes | Medium | ELT automation |

| Integrate.io | Cloud | Yes | Medium | Low-code ETL |

| Blendo | Cloud | No | Low | Simple integrations |

Key Trends Shaping ETL in 2026

1. ETL is Now AI Infrastructure

The biggest shift is clear: ETL tools are becoming the foundation of AI systems.

Modern pipelines now:

- Process unstructured data (PDFs, emails, documents)

- Generate embeddings for LLMs

- Integrate with vector databases like Pinecone, Weaviate, and Milvus

- Power RAG (Retrieval-Augmented Generation) workflows

This means your ETL tool directly impacts how well your AI performs.

2. Orchestration is No Longer Optional

Data pipelines are no longer linear—they are complex systems with dependencies.

Tools like:

- dbt (for transformation)

- Dagster (for data orchestration)

- Prefect (for workflow automation)

are now essential for managing:

- Pipeline dependencies

- Scheduling

- Monitoring

- Error handling

Without orchestration, pipelines break silently.

3. Data Observability and Self-Healing Pipelines

In 2026, the focus is not just on building pipelines—but keeping them reliable.

Modern ETL stacks integrate with observability tools like:

- Monte Carlo

- Great Expectations

These systems:

- Detect data anomalies

- Identify schema changes

- Alert teams before failures impact business

The shift toward self-healing pipelines is one of the biggest advancements in data engineering.

4. Hybrid ETL + ELT Architectures

Traditional ETL is evolving.

Modern stacks use:

- ETL for structured processing

- ELT for cloud scalability

- Reverse ETL for operational workflows

This hybrid approach gives flexibility across different use cases.

5. Rise of Low-Code + AI Automation

ETL tools are becoming more accessible.

New capabilities include:

- AI-generated pipelines

- Automated schema mapping

- No-code workflow builders

This allows non-engineers to build pipelines while maintaining governance through centralized control.

Who Should Use Which Type of ETL Tool?

Understanding your use case is critical.

Enterprise Organizations

Need governance, compliance, and scalability

→ Best fit: Informatica, SnapLogic

AI-Focused Startups

Need flexibility and vector database support

→ Best fit: Airbyte + dbt

Marketing & Analytics Teams

Need automation with minimal setup

→ Best fit: Fivetran, Hevo Data

Engineering-Driven Teams

Need full control and orchestration

→ Best fit: Dagster, Prefect

READ MORE – Most Reliable ETL Tools for Enterprise Data in 2026

20+ Best ETL Tools for Data Integration

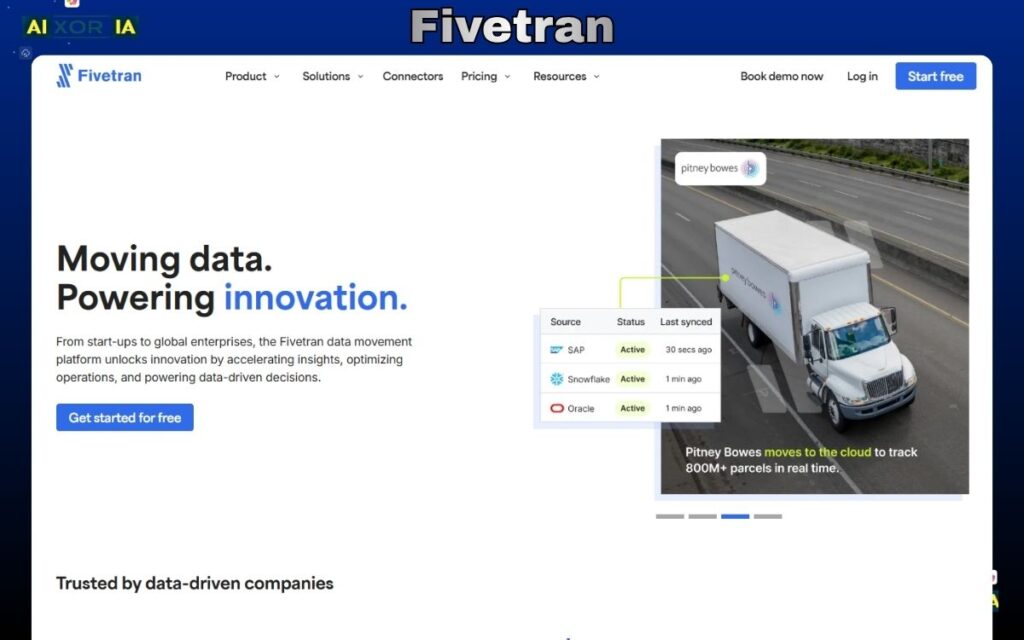

Fivetran

Fivetran is one of the most reliable ETL tools for data integration in 2026, built for teams that want fully automated pipelines without constant maintenance. Instead of manually building and fixing data workflows, Fivetran focuses on zero-maintenance data movement, where pipelines run continuously with minimal human intervention.

At its core, Fivetran connects to hundreds of data sources—including SaaS tools, databases, APIs, and files—and automatically syncs that data into your data warehouse. What makes it different is how it handles schema changes. In most ETL tools, even a small change in source data can break pipelines. Fivetran detects these changes automatically and adjusts pipelines in real time, which is a major advantage in fast-changing environments.

In 2026, Fivetran has positioned itself as a data foundation layer for AI systems. While it is not a native AI modeling tool, it plays a critical role in preparing clean, structured, and continuously updated data for downstream AI workflows. This includes feeding data into warehouses that are later used for machine learning, analytics, and RAG-based systems. For teams building LLM applications, Fivetran acts as the reliable ingestion layer that ensures consistent data availability.

From an AI perspective, Fivetran includes features like automated anomaly detection, intelligent sync optimization, and adaptive schema handling. These features reduce manual effort and improve pipeline stability over time. However, it does not directly integrate with vector databases like Pinecone or Weaviate. Instead, it supports AI pipelines indirectly by delivering high-quality data to systems that handle embeddings and retrieval.

Fivetran is widely used in the US market for:

- Marketing data pipelines (ads, CRM, analytics tools)

- SaaS product analytics

- Financial and operational reporting

- Centralizing data into platforms like Snowflake, BigQuery, and Redshift

The biggest strength of Fivetran is reliability. Once pipelines are set up, they rarely require attention. This makes it ideal for teams that want to focus on analysis and insights rather than infrastructure.

Pros

- Fully automated pipelines with minimal maintenance

- Strong support for schema changes

- Wide range of connectors

- High reliability and uptime

- Enterprise-grade security and compliance

Cons

- Pricing can increase significantly with data volume

- Limited customization compared to open-source tools

- Requires additional tools like dbt for advanced transformations

- Not a native AI or vector database tool

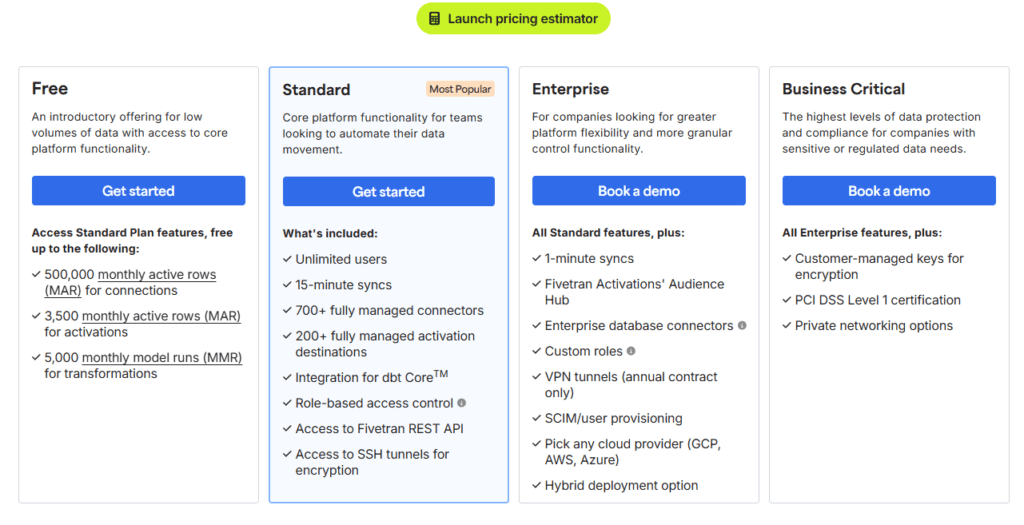

Fivetran Pricing

Fivetran uses a usage-based pricing model based on Monthly Active Rows (MAR). It offers a limited free plan, with paid plans scaling as data volume increases. Enterprise pricing is available for large-scale deployments.

2026 Update

Fivetran has shifted its focus toward AI-ready data infrastructure, improving its ability to support large-scale analytics and machine learning pipelines. It now emphasizes reliability, automation, and integration with modern data stacks rather than just data movement.

Real Experience Insight

In practical use, Fivetran is often adopted after teams face repeated pipeline failures with custom solutions. Once implemented, the biggest impact is not speed, but stability—pipelines run consistently without constant fixes, which significantly reduces operational overhead.

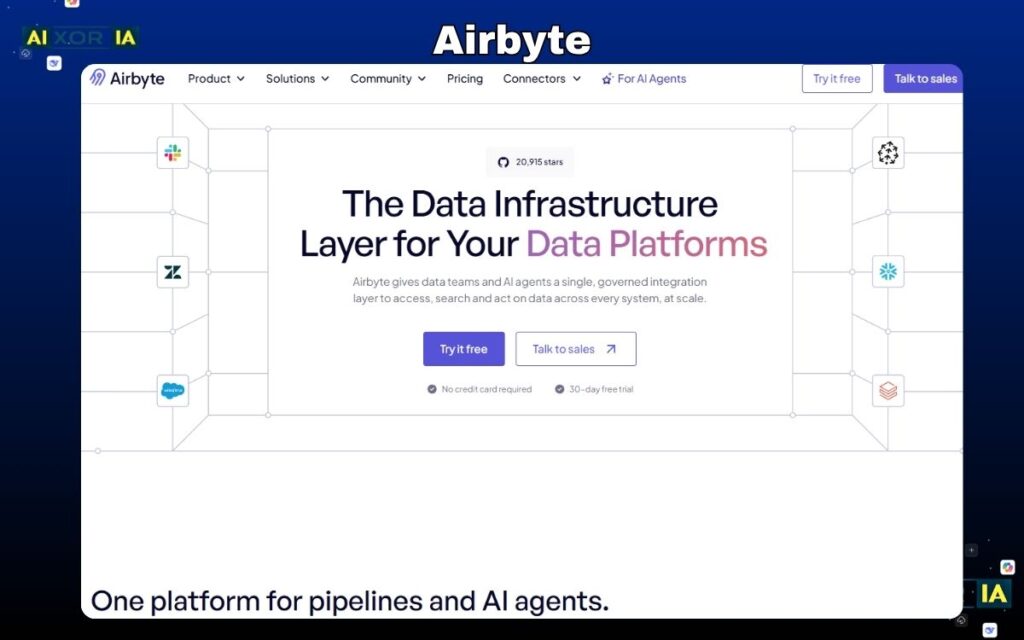

Airbyte

Airbyte has quickly become one of the most important ETL tools for data integration in 2026, especially for teams that need flexibility, customization, and AI-ready pipelines. Unlike fully managed tools, Airbyte is built as an open-source platform, giving developers complete control over how data is extracted, transformed, and loaded.

At its core, Airbyte solves a major limitation in traditional ETL tools: restricted connectors and vendor lock-in. While many platforms limit you to predefined integrations, Airbyte allows you to build your own connectors easily. This is critical in real-world environments where APIs change frequently, internal tools evolve, and new data sources are constantly introduced.

One of Airbyte’s biggest advantages is its ability to handle non-standard and unstructured data, which makes it highly relevant for modern AI use cases. In 2026, many organizations are building pipelines that go beyond structured databases—working with PDFs, logs, documents, and external APIs. Airbyte enables teams to ingest this data and prepare it for further processing in AI systems.

From an AI perspective, Airbyte plays a foundational role in LLM and RAG pipelines. While it does not generate embeddings itself, it is often used to:

- Collect raw data from multiple sources

- Normalize and structure that data

- Feed it into vector databases or AI processing layers

This makes Airbyte highly compatible with modern AI architectures where tools like embedding models and vector databases handle the intelligence layer, while Airbyte ensures the data is available and clean.

Another key strength is its integration with the modern data stack. Airbyte works seamlessly with tools like dbt for transformation and orchestration platforms such as Dagster and Prefect. This allows teams to build end-to-end data workflows that are scalable and maintainable.

Airbyte is commonly used in the US market for:

- AI startups building LLM-based applications

- Engineering teams integrating multiple APIs

- SaaS companies with rapidly evolving data needs

- Data teams that want full control over pipelines

The main trade-off is complexity. Unlike no-code ETL tools, Airbyte requires technical knowledge to set up, manage, and optimize pipelines. However, for teams with engineering resources, this flexibility becomes a major advantage.

Pros

- Fully open-source and highly customizable

- Strong support for AI and unstructured data pipelines

- Ability to build custom connectors

- No vendor lock-in

- Active and growing developer community

Cons

- Requires engineering expertise

- Setup and maintenance can be complex

- UI is less polished compared to SaaS tools

- Advanced features require paid plans

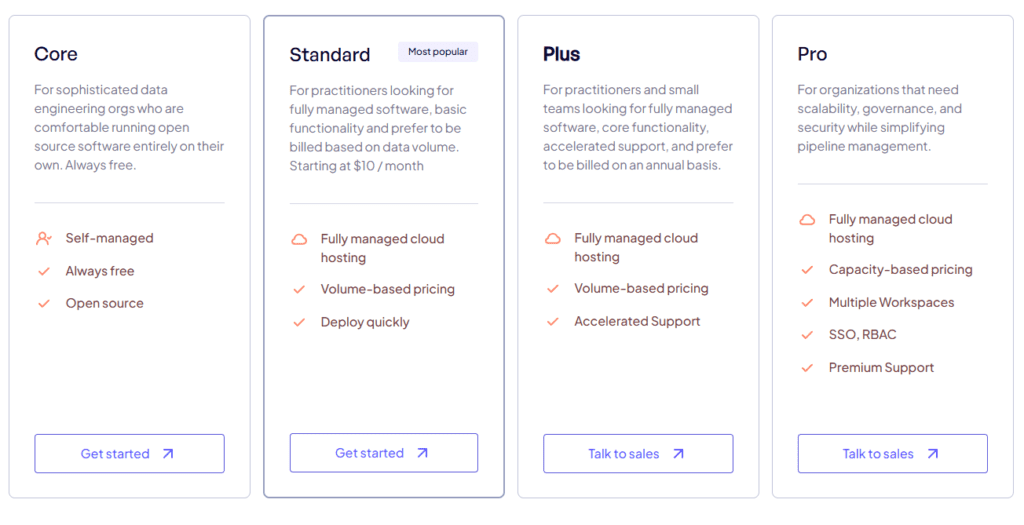

Airbyte offers a free open-source version that can be self-hosted. It also provides a cloud version with usage-based pricing and enterprise plans with additional features and support.

2026 Update

Airbyte has strengthened its position as an AI-ready ETL tool by improving support for unstructured data, enhancing connector flexibility, and expanding integrations with orchestration tools. It is now widely used in modern AI data pipelines.

Real Experience Insight

In real-world scenarios, teams usually switch to Airbyte after hitting limitations with closed ETL tools. While the initial setup takes effort, the long-term benefit is complete control over data pipelines, especially in complex or rapidly changing environments.

Informatica

Informatica is one of the most established and enterprise-grade ETL tools for data integration in 2026, built specifically for organizations that require governance, compliance, and large-scale data management. Unlike newer tools that focus only on speed or flexibility, Informatica is designed to handle mission-critical data workflows across complex enterprise environments.

At its core, Informatica provides a complete data integration ecosystem through its Intelligent Data Management Cloud (IDMC). This includes ETL, data quality, governance, master data management (MDM), and data cataloging—all in one unified platform. This makes it a strong choice for Fortune 500 companies and regulated industries such as finance, healthcare, and government.

What truly differentiates Informatica in 2026 is its AI engine, CLAIRE. This AI system is built into the platform and helps automate:

- Data mapping and transformation

- Pipeline optimization

- Data quality monitoring

- Metadata analysis

CLAIRE reduces manual work significantly by learning from past workflows and suggesting optimizations. This makes Informatica one of the few ETL tools that combines enterprise control with AI-driven automation.

From an AI and modern data stack perspective, Informatica is evolving to support:

- Unstructured data processing

- Integration with cloud data warehouses

- Support for AI/ML pipelines

- Data governance for AI compliance (important in US regulations)

While it does not directly function as a vector database tool, it plays a critical role in ensuring that AI systems receive high-quality, governed, and compliant data.

Informatica is widely used for:

- Enterprise data governance and compliance

- Large-scale ETL pipelines across departments

- Financial and healthcare data processing

- Master data management

- AI-ready data preparation

Its biggest strength is control. It allows organizations to manage who can access, modify, and use data at every level, which is essential for large enterprises.

Pros

- Strong enterprise-grade governance and compliance

- Powerful AI engine (CLAIRE) for automation

- All-in-one data management platform

- Scalable for large organizations

- High reliability and performance

Cons

- Expensive compared to other ETL tools

- Complex setup and learning curve

- Overkill for small teams or startups

- Requires dedicated data teams

Informatica Pricing

Informatica uses enterprise-level pricing, typically based on custom quotes depending on usage, deployment, and features required.

2026 Update

Informatica has enhanced its AI capabilities through CLAIRE, adding better automation, improved data quality monitoring, and stronger compliance features for US-based enterprises.

Real Experience Insight

In real-world enterprise environments, Informatica is often chosen when data governance becomes a priority. Teams rely on it not just for moving data, but for controlling and securing it across the entire organization.

AWS Glue

AWS Glue is a serverless ETL tool designed for organizations deeply integrated into the Amazon Web Services ecosystem. In 2026, it has become one of the most scalable and cost-efficient solutions for building large-scale data pipelines without managing infrastructure.

The biggest advantage of AWS Glue is its serverless architecture. You don’t need to provision or manage servers—Glue automatically scales resources based on workload. This makes it ideal for handling massive datasets and unpredictable workloads.

Glue is tightly integrated with AWS services such as:

- S3 (data storage)

- Redshift (data warehouse)

- Lambda (serverless compute)

- Athena (query engine)

This makes it a natural choice for organizations already using AWS.

From a functionality standpoint, AWS Glue supports:

- ETL and ELT workflows

- Batch and real-time data processing

- Data cataloging and metadata management

- Schema discovery and transformation

In 2026, AWS Glue has improved its AI capabilities through:

- Automated schema inference

- Intelligent job optimization

- Integration with AWS AI/ML services

While it does not directly support vector databases, it can be used to:

- Prepare data for machine learning models

- Feed data into AI pipelines

- Support large-scale analytics workflows

AWS Glue is commonly used for:

- Big data processing

- Data lake architecture

- Real-time streaming pipelines

- Machine learning data preparation

- Enterprise analytics on AWS

The main limitation of AWS Glue is that it is heavily tied to the AWS ecosystem. If your infrastructure is multi-cloud or hybrid, it may not be the best standalone choice.

Pros

- Fully serverless and scalable

- Strong integration with AWS ecosystem

- Cost-efficient for large workloads

- Supports both batch and real-time processing

- Built-in data catalog

Cons

- Best suited for AWS users only

- Can be complex for beginners

- Debugging jobs can be challenging

- Limited flexibility outside AWS

AWS Glue Pricing

AWS Glue uses a pay-as-you-go pricing model based on compute usage (DPU hours), making it cost-effective for variable workloads.

2026 Update

AWS Glue has improved performance for large-scale data processing and enhanced integration with AWS AI/ML services, making it more relevant for AI-driven data pipelines.

Real Experience Insight

In real-world use, AWS Glue works best when your entire data stack is already on AWS. It simplifies infrastructure management, but requires familiarity with AWS services to use effectively.

Azure Data Factory

Azure Data Factory is a cloud-based ETL and data integration service designed for organizations that operate within the Microsoft ecosystem. In 2026, it has evolved into a hybrid data orchestration platform, capable of connecting on-premises systems, cloud services, and real-time data pipelines into a single unified workflow.

What makes Azure Data Factory powerful is not just ETL—it’s data orchestration at scale. It allows teams to design complex pipelines using a visual interface, automate workflows, and manage dependencies across multiple systems. This is especially valuable in enterprise environments where data flows across departments, tools, and infrastructures.

At its core, Azure Data Factory supports:

- ETL and ELT pipelines

- Data ingestion from 100+ sources

- Workflow orchestration

- Scheduling and monitoring

- Integration with on-prem and cloud systems

Its deep integration with Microsoft services such as Azure Synapse Analytics, Azure SQL Database, and Power BI makes it a natural fit for organizations already using Microsoft technologies.

AI Capabilities and Modern Data Stack Integration

In 2026, Azure Data Factory has strengthened its role in AI-driven data pipelines by integrating closely with Microsoft’s AI ecosystem.

It supports:

- Data preparation for machine learning models

- Integration with Azure Machine Learning

- Automated data transformation workflows

- Intelligent pipeline monitoring and alerts

With the rise of tools like Microsoft Copilot, Azure Data Factory is also moving toward AI-assisted pipeline creation, where users can define workflows using natural language and automated suggestions.

While it does not directly manage vector databases, it can be used to:

- Prepare structured and semi-structured data

- Feed data into AI pipelines

- Support LLM-based systems through Azure infrastructure

This makes it a strong data orchestration layer for AI systems, particularly in Microsoft-based environments.

Core Features

- Visual drag-and-drop pipeline builder

- Hybrid data integration (cloud + on-prem)

- Built-in scheduling and orchestration

- Integration with Azure ecosystem

- Data movement and transformation at scale

- Monitoring and alerting tools

Use Cases (US Market Focus)

Azure Data Factory is widely used for:

- Enterprise data warehousing

- Hybrid cloud data integration

- Business intelligence pipelines

- Machine learning data preparation

- Large-scale data orchestration

It is especially effective when:

- Organizations use Microsoft Azure services

- Data exists across multiple environments

- Complex workflows need orchestration

Pros

- Strong integration with Microsoft ecosystem

- Powerful orchestration capabilities

- Scalable for enterprise workloads

- Supports hybrid environments

- Visual interface for pipeline design

Cons

- Complex setup for beginners

- Best suited for Azure users

- Limited flexibility outside Microsoft stack

- Debugging pipelines can be time-consuming

Azure Data Factory Pricing

Azure Data Factory uses a pay-as-you-go pricing model based on pipeline activity, data movement, and compute usage.

This makes it scalable but requires monitoring to control costs in large environments.

2026 Update

In 2026, Azure Data Factory has improved its integration with AI services and introduced more automation in pipeline creation. Its role has expanded from ETL tool to full data orchestration platform within the Microsoft ecosystem.

Real Experience Insight

In real-world scenarios, Azure Data Factory is often chosen by enterprises already using Microsoft services. It becomes the central system for managing data pipelines across departments. While powerful, it requires proper architecture planning to avoid complexity as pipelines grow.

READ MORE – Best Free ETL Tools for Developers

Matillion

Matillion is a cloud-native ETL/ELT platform built specifically for modern data warehouses. In 2026, it has positioned itself as a transformation-first ETL tool, meaning its real strength lies not just in moving data—but in turning raw data into analytics-ready and AI-ready datasets inside the warehouse itself.

Unlike traditional ETL tools that process data outside and then load it, Matillion follows an ELT approach:

- Extract data

- Load it into a cloud warehouse (Snowflake, BigQuery, Redshift)

- Transform it directly inside the warehouse

This approach is faster, more scalable, and aligns perfectly with how modern data architectures are designed today.

Why Matillion Stands Out in 2026

Matillion is built for teams that rely heavily on cloud data warehouses.

Instead of acting as a standalone ETL engine, it works on top of your warehouse, using its compute power to perform transformations. This reduces infrastructure complexity and improves performance for large-scale data operations.

It also provides a visual pipeline builder, allowing both engineers and analysts to design workflows without writing complex code.

AI Capabilities and Data Transformation Intelligence

In 2026, Matillion is evolving toward AI-assisted data transformation, making it more relevant in modern pipelines.

Its AI-related capabilities include:

- Automated data transformation suggestions

- Assisted pipeline building

- Data preparation for machine learning workflows

- Integration with cloud AI services

Matillion is not a direct AI pipeline tool, but it plays a critical role in:

- Structuring data for AI models

- Preparing datasets for analytics and ML

- Supporting downstream LLM pipelines

For vector database workflows, Matillion acts as a data preparation layer, ensuring that structured data is clean and ready before being passed to embedding or retrieval systems.

Core Features

- Native integration with Snowflake, BigQuery, Redshift

- Visual pipeline builder (drag-and-drop interface)

- ELT-based architecture

- Pre-built connectors for data ingestion

- Scalable cloud performance

- Built-in scheduling and orchestration

Use Cases (US Market Focus)

Matillion is commonly used for:

- Data transformation inside cloud warehouses

- Analytics and business intelligence pipelines

- Preparing datasets for machine learning

- SaaS and eCommerce data processing

- Centralized data modeling

It is especially useful when:

- Organizations rely heavily on cloud warehouses

- Large-scale data transformations are required

- Teams want a balance between no-code and control

Pros

- Optimized for cloud data warehouses

- Fast transformation performance

- Easy-to-use visual interface

- Scalable for large datasets

- Reduces infrastructure complexity

Cons

- Dependent on external data warehouse

- Limited outside cloud environments

- Licensing can be expensive

- Not ideal for complex custom ingestion

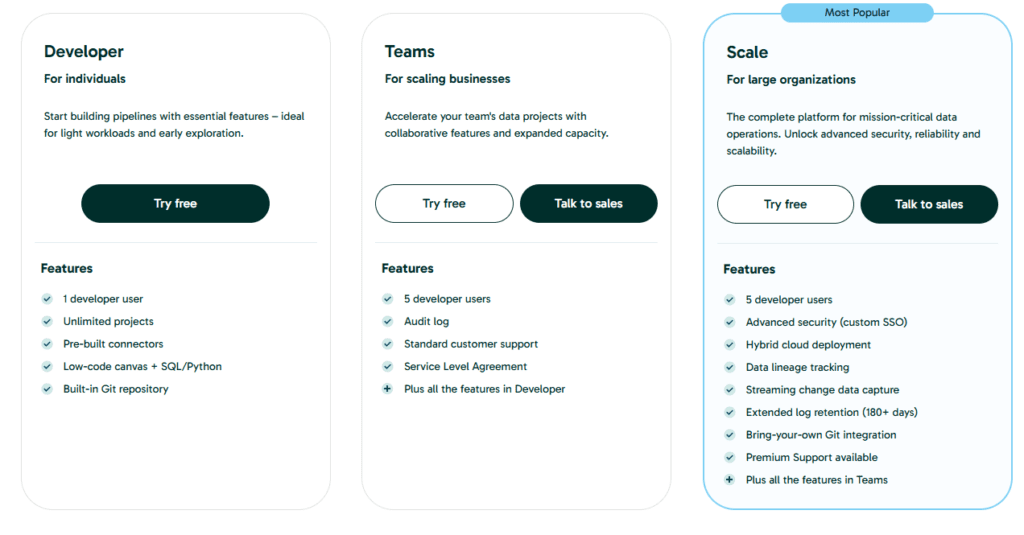

Matillion Pricing

Matillion uses a subscription-based pricing model, typically based on the cloud platform and usage. Pricing varies depending on the scale and deployment.

2026 Update

In 2026, Matillion has improved its AI-assisted transformation capabilities and strengthened integration with modern data stacks. It continues to focus on ELT workflows, making it highly relevant for cloud-first organizations.

Real Experience Insight

In real-world scenarios, Matillion works best when paired with a powerful data warehouse like Snowflake. Teams often choose it when they want faster transformations without managing infrastructure. The biggest benefit is speed and simplicity in handling large datasets.

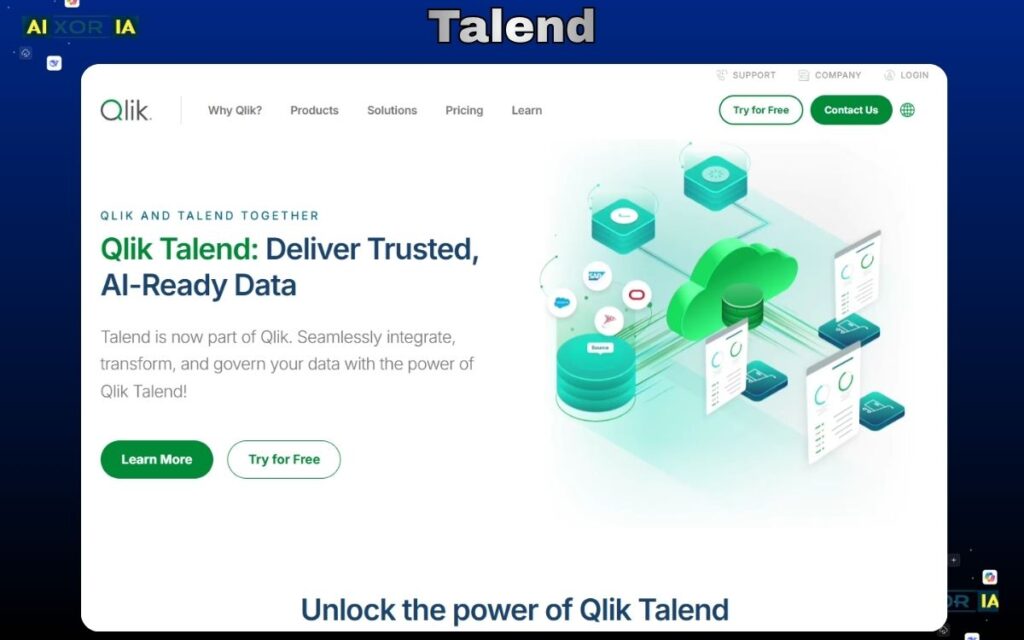

Talend

Talend is a well-established ETL and data integration platform that, in 2026, has evolved into a data quality–first, governance-driven ETL solution. Unlike tools that focus only on moving data, Talend is designed to ensure that data is accurate, clean, and compliant before it is used for analytics or AI.

At its core, Talend provides a unified platform for:

- Data integration (ETL/ELT)

- Data quality and cleansing

- Data governance

- Application and API integration

This makes it particularly valuable for organizations where data trust and compliance are critical, such as finance, healthcare, and enterprise SaaS companies in the US.

Why Talend Stands Out in 2026

Talend’s biggest strength is its focus on data quality and reliability.

In real-world environments, the biggest problem is not moving data—it’s ensuring that the data is correct. Talend addresses this with built-in tools for:

- Data profiling

- Deduplication

- Standardization

- Validation rules

This means that before data reaches your warehouse or AI model, it is already cleaned and structured.

Talend also supports both ETL and ELT approaches, giving teams flexibility depending on their architecture.

AI Capabilities and Data Intelligence

In 2026, Talend has enhanced its platform with AI-assisted data quality and pipeline optimization.

Its AI capabilities include:

- Intelligent data cleansing suggestions

- Automated anomaly detection

- Smart data mapping and transformation

- Pipeline performance optimization

Talend is not a direct AI modeling platform, but it plays a critical role in AI data preparation.

It supports:

- Structured and semi-structured data processing

- Preparing datasets for machine learning models

- Integration with cloud platforms for AI workflows

For vector database use cases, Talend acts as a data quality layer, ensuring that data going into embedding pipelines is clean and consistent.

Core Features

- Comprehensive ETL and ELT support

- Strong data quality and governance tools

- Visual pipeline builder

- Integration with cloud and on-prem systems

- Real-time and batch data processing

- API and application integration

Use Cases (US Market Focus)

Talend is widely used for:

- Data quality management

- Regulatory compliance (finance, healthcare)

- Enterprise data integration

- Customer data standardization

- Preparing clean datasets for analytics and AI

It is especially useful when:

- Data accuracy is critical

- Compliance requirements are strict

- Multiple data sources need standardization

Pros

- Strong data quality and cleansing capabilities

- Flexible ETL and ELT support

- Good integration across systems

- Suitable for enterprise environments

- Supports real-time and batch processing

Cons

- Can be complex to set up and manage

- UI is not as modern as newer tools

- Performance tuning may require expertise

- Enterprise features can be expensive

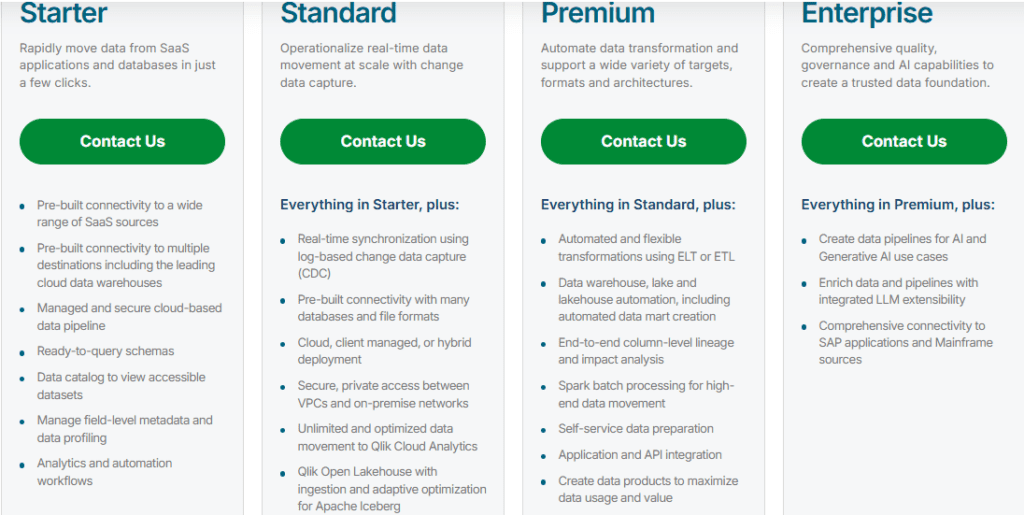

Talend Pricing

Talend offers both open-source and enterprise versions.

- Open-source version (limited features)

- Paid plans with advanced data quality, governance, and cloud features

- Enterprise pricing based on requirements

2026 Update

In 2026, Talend has focused heavily on improving AI-assisted data quality and strengthening its cloud capabilities. It is positioning itself as a trusted data platform, not just an ETL tool.

Real Experience Insight

In real-world usage, Talend is often selected when organizations face data quality issues across multiple systems. While it requires setup effort, the long-term benefit is cleaner, more reliable data that reduces errors in reporting and AI models.

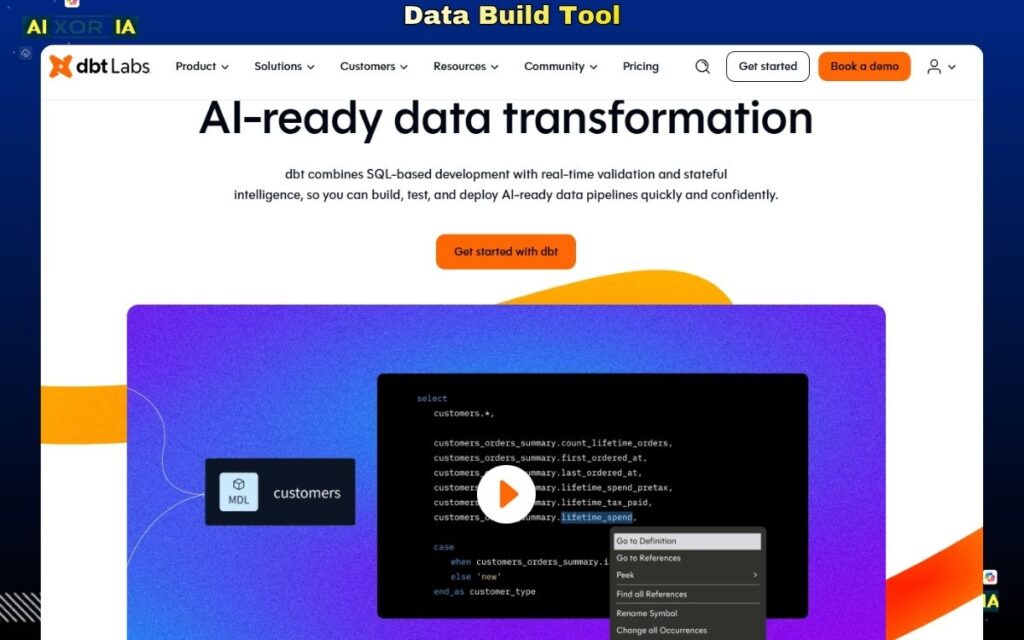

dbt (Data Build Tool)

dbt is not a traditional ETL tool—and that is exactly why it is essential in 2026.

dbt (data build tool) is designed specifically for data transformation, not extraction or loading. It operates on top of your data warehouse and allows teams to transform raw data into clean, analytics-ready datasets using SQL. In modern data stacks, dbt has become the standard transformation layer, especially in ELT-based architectures.

Instead of moving data, dbt focuses on what happens after data is already in your warehouse. This makes it a critical component in pipelines built with tools like Fivetran or Airbyte.

Why dbt Stands Out in 2026

In today’s data ecosystem, transformation is where most of the real value is created.

Raw data is often messy, inconsistent, and unusable. dbt solves this by allowing teams to:

- Build modular transformation models

- Version-control data transformations

- Test and validate data quality

- Document data pipelines

This turns data transformation into a software engineering process, making it more reliable and scalable.

AI Capabilities and Modern Data Stack Role

In 2026, dbt plays a major role in AI-ready data pipelines, even though it is not an AI tool itself.

Its importance comes from:

- Structuring clean datasets for machine learning

- Preparing features for AI models

- Organizing data for LLM pipelines

dbt ensures that the data used in AI systems is:

- Consistent

- Well-defined

- Reliable

Additionally, dbt integrates with modern orchestration tools like Dagster and Prefect, allowing teams to manage complex workflows.

While dbt does not directly interact with vector databases, it is often used to:

- Prepare structured inputs before embedding generation

- Normalize datasets used in RAG pipelines

This makes dbt a critical layer between raw data and AI systems.

Core Features

- SQL-based data transformation

- Modular data modeling

- Version control (Git integration)

- Data testing and validation

- Documentation generation

- Integration with cloud data warehouses

Use Cases (US Market Focus)

dbt is widely used for:

- Data transformation in modern data stacks

- Analytics engineering workflows

- Preparing data for dashboards and BI tools

- Structuring datasets for machine learning

- Building reliable data models at scale

It is especially useful when:

- Teams use cloud data warehouses

- Data needs to be standardized and cleaned

- Engineering best practices are required

Pros

- Industry standard for data transformation

- SQL-based (easy for analysts and engineers)

- Strong version control and testing features

- Integrates well with modern data stack

- Scalable and reliable

Cons

- Not a full ETL tool (no extraction/loading)

- Requires a data warehouse to function

- Learning curve for beginners

- Limited to SQL-based transformations

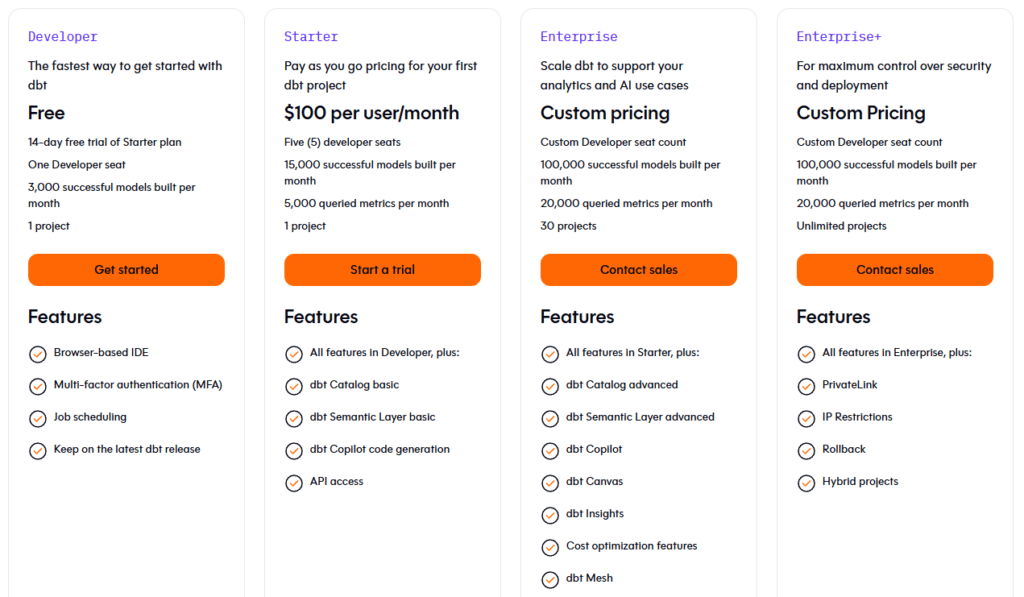

Data Build Tool Pricing

dbt offers:

- Free open-source version (dbt Core)

- Paid cloud version with additional features

- Enterprise plans for large teams

2026 Update

In 2026, dbt has expanded its role in the data ecosystem by improving collaboration features, enhancing data testing capabilities, and strengthening integration with orchestration tools. It is now considered a core component of modern data pipelines.

Real Experience Insight

In real-world usage, dbt becomes essential once teams scale beyond basic reporting. It brings structure and reliability to data transformation, turning messy datasets into trusted, production-ready data models.

Dagster

Dagster is not a traditional ETL tool—it is a data orchestration platform designed to manage, monitor, and scale complex data pipelines. In 2026, it has become one of the most important tools for teams building modern, production-grade data systems, especially those integrating analytics, machine learning, and AI workflows.

Instead of focusing only on moving or transforming data, Dagster focuses on how data pipelines are structured, executed, and maintained. It introduces the concept of “data assets,” where every dataset is treated as a first-class component with clear dependencies, lineage, and ownership.

Why Dagster Stands Out in 2026

Modern data pipelines are no longer simple linear workflows. They are complex systems with:

- Multiple dependencies

- Cross-team ownership

- Continuous updates

- Real-time requirements

Dagster is built specifically to handle this complexity.

Its biggest advantage is its asset-based architecture, which allows teams to:

- Define data pipelines as interconnected assets

- Track lineage and dependencies automatically

- Monitor pipeline health in real time

- Debug failures more effectively

This makes Dagster far more maintainable than traditional workflow schedulers.

AI Capabilities and Orchestration for Modern Pipelines

In 2026, Dagster plays a critical role in AI and machine learning pipelines.

While it does not perform ETL directly, it orchestrates the entire workflow, including:

- Data ingestion (via tools like Airbyte or Fivetran)

- Data transformation (via dbt)

- Model training and deployment

- Data validation and monitoring

Dagster is especially useful for:

- Managing RAG pipelines

- Coordinating embedding generation workflows

- Scheduling AI model updates

- Handling large-scale ML pipelines

It also integrates with tools that support:

- Vector databases

- Data observability platforms

- Cloud data warehouses

This makes Dagster a central control layer in AI-ready data architectures.

Core Features

- Asset-based pipeline architecture

- Data lineage tracking

- Real-time monitoring and alerts

- Integration with dbt, Airbyte, and other tools

- Scalable orchestration for complex workflows

- Developer-friendly Python framework

Use Cases (US Market Focus)

Dagster is widely used for:

- Orchestrating modern data pipelines

- Managing machine learning workflows

- Coordinating ETL and ELT processes

- Building scalable data platforms

- Monitoring data reliability and performance

It is especially valuable when:

- Pipelines are complex and interdependent

- Teams need visibility into data flows

- AI/ML workflows are part of the system

Pros

- Powerful orchestration capabilities

- Clear data lineage and visibility

- Scalable for complex pipelines

- Strong integration with modern tools

- Developer-friendly design

Cons

- Not a standalone ETL tool

- Requires technical expertise

- Setup can be complex

- Overkill for simple pipelines

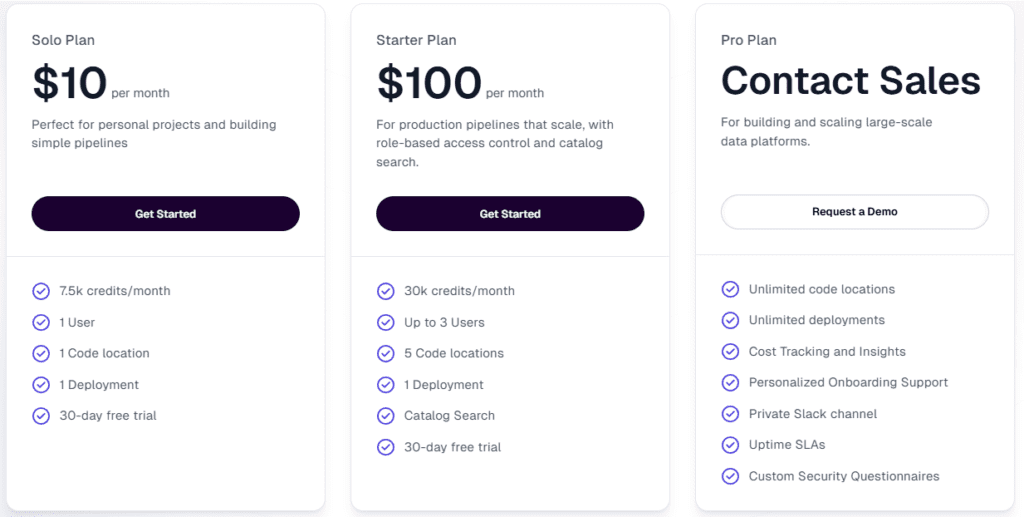

dagster Pricing

Dagster offers:

- Open-source version (free)

- Cloud version with managed services

- Enterprise plans for large organizations

2026 Update

In 2026, Dagster has strengthened its position as a leading orchestration tool by improving observability, expanding integrations, and enhancing support for AI workflows. It is now widely adopted in modern data platforms.

Real Experience Insight

In real-world usage, Dagster becomes essential when pipelines grow beyond simple workflows. Teams adopt it after facing issues with pipeline failures, lack of visibility, and poor dependency management. Once implemented, it provides clarity, control, and reliability across the entire data system.

Prefect

Prefect is a modern workflow orchestration tool built to manage, monitor, and automate data pipelines with maximum flexibility and minimal friction. In 2026, it has become a preferred choice for teams that want powerful orchestration without the complexity of traditional schedulers.

Unlike older orchestration tools, Prefect is designed with a developer-first approach, allowing teams to write workflows in Python while still benefiting from strong monitoring, retry logic, and scheduling capabilities.

Why Prefect Stands Out in 2026

Prefect simplifies one of the hardest parts of data engineering: pipeline reliability.

Instead of pipelines failing silently or requiring constant debugging, Prefect provides:

- Built-in retry mechanisms

- Real-time logging and alerts

- Dynamic workflow execution

- Flexible scheduling

This ensures pipelines are not just running—but running reliably at scale.

Its architecture is also highly flexible, allowing workflows to run:

- Locally

- In the cloud

- Across distributed systems

AI Capabilities and Workflow Automation

In 2026, Prefect plays a key role in AI and data pipeline orchestration.

It is commonly used to manage:

- Data ingestion workflows

- Data transformation pipelines

- Machine learning model training

- AI pipeline scheduling

- RAG system workflows

Prefect integrates easily with:

- ETL tools (Airbyte, Fivetran)

- Transformation tools (dbt)

- Cloud platforms (AWS, Azure, GCP)

This makes it a control layer for AI pipelines, ensuring that every step—from data ingestion to model output—runs in the correct order.

While Prefect does not directly handle vector databases, it can orchestrate workflows that:

- Generate embeddings

- Update vector indexes

- Manage retrieval pipelines

Core Features

- Python-based workflow orchestration

- Real-time monitoring and logging

- Automatic retries and error handling

- Flexible deployment options

- Integration with modern data tools

- Scalable workflow execution

Use Cases (US Market Focus)

Prefect is widely used for:

- Orchestrating ETL and ELT pipelines

- Managing machine learning workflows

- Automating data processes

- Scheduling complex workflows

- Building scalable data systems

It is especially useful when:

- Pipelines require flexibility

- Teams prefer Python-based workflows

- Reliability and monitoring are priorities

Pros

- Easy to use compared to traditional orchestrators

- Strong monitoring and reliability features

- Highly flexible and scalable

- Works well with modern data stack

- Developer-friendly

Cons

- Not a standalone ETL tool

- Requires coding knowledge

- Smaller ecosystem compared to older tools

- Advanced features may require paid plans

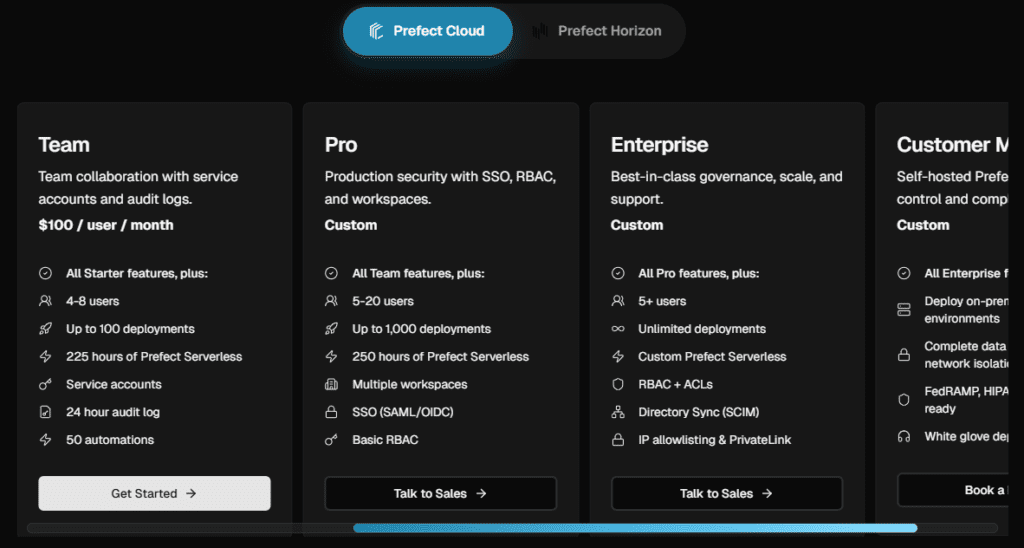

Prefect Pricing

Prefect offers:

- Free open-source version

- Cloud version with usage-based pricing

- Enterprise plans for advanced features

2026 Update

In 2026, Prefect has improved its orchestration capabilities with better monitoring, enhanced automation, and deeper integrations with AI workflows. It continues to position itself as a modern alternative to legacy schedulers.

Real Experience Insight

In real-world scenarios, Prefect is often adopted after teams struggle with complex or unreliable schedulers. It provides a cleaner, more flexible way to manage workflows, especially for teams building modern data and AI systems.

Stitch

Stitch is a lightweight, cloud-based ETL tool designed for teams that need simple, fast, and reliable data integration without complexity. In 2026, it remains a popular choice for startups and small to mid-sized teams that want to centralize their data quickly.

Unlike advanced ETL platforms, Stitch focuses on doing one thing well: moving data from source systems to data warehouses with minimal setup.

Why Stitch Stands Out in 2026

Stitch is built for simplicity.

It allows teams to:

- Connect data sources quickly

- Sync data into warehouses

- Start analyzing data without heavy setup

This makes it ideal for teams that:

- Don’t have large data engineering resources

- Need quick deployment

- Want a cost-effective ETL solution

AI Capabilities and Data Pipeline Role

Stitch is not designed as an AI-focused ETL tool, but it still plays a role in modern data pipelines.

It helps by:

- Providing structured data for analytics

- Feeding data into warehouses used for AI

- Supporting basic data preparation workflows

However, compared to tools like Airbyte or SnapLogic, its AI capabilities are limited.

It does not support:

- Advanced AI automation

- Vector database workflows

- Complex data transformation

Instead, it acts as a basic data ingestion layer.

Core Features

- Cloud-based ETL platform

- Simple setup and configuration

- Pre-built connectors

- Data pipeline automation

- Integration with data warehouses

Use Cases (US Market Focus)

Stitch is commonly used for:

- Startup data pipelines

- Basic analytics workflows

- Marketing data integration

- Small-scale data warehousing

- Quick data centralization

It is especially useful when:

- Simplicity is more important than customization

- Data volume is moderate

- Teams need fast results

Pros

- Easy to set up and use

- Affordable compared to enterprise tools

- Good for small teams

- Reliable for basic pipelines

Cons

- Limited customization

- Not suitable for complex pipelines

- Weak AI and automation features

- Limited scalability

Pricing

Stitch offers:

- Free trial

- Paid plans based on data volume

- Affordable pricing for small teams

2026 Update

In 2026, Stitch remains focused on simplicity and affordability. While it has not evolved significantly toward AI or advanced orchestration, it continues to serve as a reliable option for basic ETL needs.

Real Experience Insight

In real-world usage, Stitch is often the first ETL tool teams adopt. It works well for simple pipelines, but as data complexity grows, teams usually migrate to more advanced platforms for scalability and flexibility.

Hevo Data

Hevo Data is a no-code ETL platform built for teams that want real-time data pipelines without engineering complexity. In 2026, it has positioned itself as a strong alternative to tools like Fivetran, especially for companies that want both automation and a bit more control over transformations.

At its core, Hevo Data focuses on speed, simplicity, and real-time syncing. It allows users to connect multiple data sources, transform data on the fly, and load it into data warehouses without writing code.

Why Hevo Data Stands Out in 2026

Hevo bridges the gap between simplicity and flexibility.

Unlike fully automated tools that limit customization, Hevo allows:

- Real-time streaming pipelines

- Built-in transformations

- Event-based data processing

This makes it more dynamic than basic ETL tools while still remaining easy to use.

Its real-time capability is particularly important in 2026, where businesses increasingly rely on:

- Live dashboards

- Instant analytics

- Event-driven systems

AI Capabilities and Modern Pipeline Role

Hevo Data is not an AI-native platform, but it supports AI-ready data pipelines through:

- Real-time data delivery

- Clean and structured data outputs

- Integration with cloud data warehouses

It plays an important role in:

- Feeding data into machine learning systems

- Supporting near real-time AI applications

- Preparing data for downstream LLM workflows

However, it does not directly support:

- Vector databases

- Embedding pipelines

- Advanced AI orchestration

Instead, it acts as a fast and reliable ingestion layer for modern data stacks.

Core Features

- No-code data pipeline builder

- Real-time data streaming

- Built-in data transformations

- Automated schema management

- Integration with 150+ data sources

- Monitoring and alerting

Use Cases (US Market Focus)

Hevo Data is widely used for:

- Real-time analytics pipelines

- Marketing and product analytics

- SaaS data integration

- Event-driven data systems

- Business intelligence workflows

It is especially useful when:

- Teams need real-time data syncing

- Engineering resources are limited

- Fast deployment is required

Pros

- Easy to use (no-code platform)

- Real-time data processing

- Quick setup and deployment

- Reliable pipeline automation

- Good balance between simplicity and flexibility

Cons

- Limited advanced customization

- Not ideal for highly complex pipelines

- AI capabilities are indirect

- Can become costly at scale

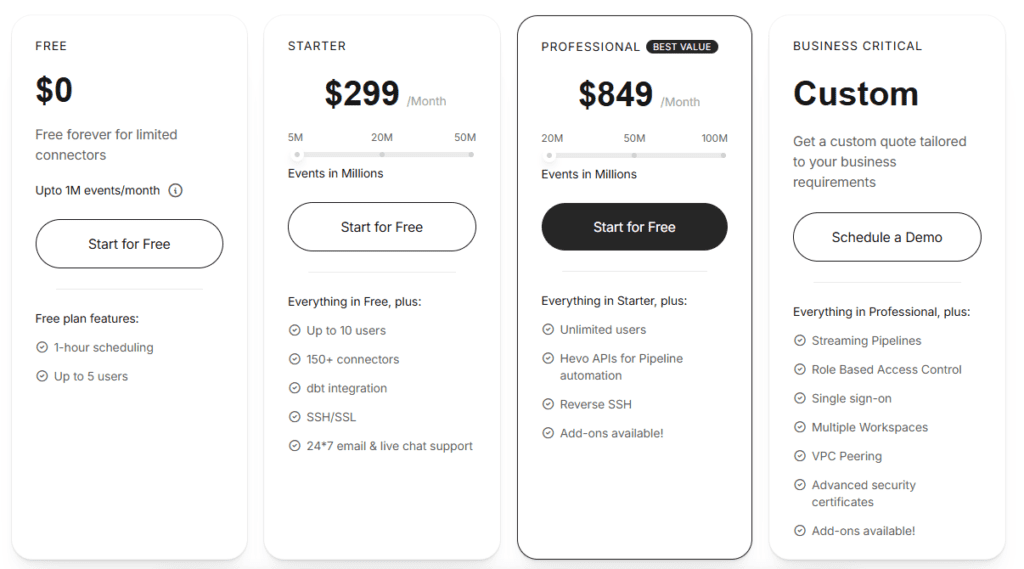

Hevo Data Pricing

Hevo Data offers:

- Free tier (limited usage)

- Paid plans based on data volume and features

- Custom enterprise pricing

2026 Update

In 2026, Hevo Data has improved its real-time processing capabilities and strengthened its integrations with modern data warehouses. It continues to focus on making ETL accessible without sacrificing performance.

Real Experience Insight

In real-world usage, Hevo is often chosen by teams that want real-time data without building complex infrastructure. It works well out of the box, but as pipelines grow more complex, some teams may need more advanced tools.

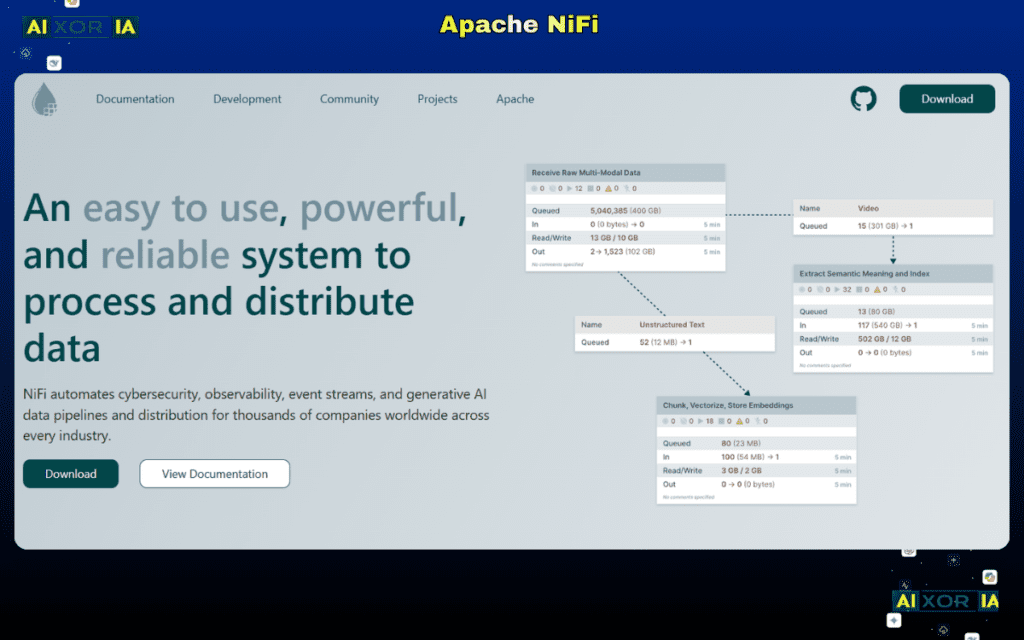

Apache NiFi

Apache NiFi is a powerful, open-source data integration tool designed for real-time data flow management and complex data routing. In 2026, it remains one of the best tools for handling high-volume, streaming, and event-driven data pipelines, especially in enterprise and government environments.

Unlike traditional ETL tools, NiFi is built around the concept of data flow automation, where data is continuously moving through a system of processors.

Why Apache NiFi Stands Out in 2026

NiFi excels in environments where data is:

- Streaming continuously

- Coming from multiple sources

- Requiring real-time processing

Its visual interface allows users to design complex data flows by connecting processors, making it easier to manage large-scale pipelines.

One of its biggest strengths is fine-grained control over data movement, including:

- Routing

- Transformation

- Prioritization

- Back-pressure handling

AI Capabilities and Data Flow Role

Apache NiFi is not an AI-focused ETL tool, but it plays a role in AI data pipelines by:

- Handling real-time data ingestion

- Routing data to AI systems

- Managing streaming data inputs

It can be used to:

- Feed real-time data into machine learning models

- Support event-driven AI applications

- Prepare streaming datasets

However, it does not provide:

- Native AI automation

- Vector database integration

- Advanced transformation logic for ML

Its role is best described as a real-time data movement engine.

Core Features

- Real-time data flow management

- Visual pipeline design

- Data routing and transformation

- Back-pressure and flow control

- Security and data provenance tracking

- Scalable architecture

Use Cases (US Market Focus)

Apache NiFi is commonly used for:

- Real-time data streaming

- IoT data processing

- Event-driven systems

- Log and telemetry data pipelines

- Government and enterprise data integration

It is especially useful when:

- Data needs to be processed continuously

- Complex routing logic is required

- High throughput is needed

Pros

- Strong real-time processing capabilities

- Open-source and highly flexible

- Detailed control over data flows

- Scalable for large systems

- Good for streaming and event-based pipelines

Cons

- Complex setup and management

- Requires technical expertise

- Not ideal for simple ETL use cases

- Limited AI-specific features

Pricing

Apache NiFi is open-source and free to use. Enterprise support may be available through third-party providers.

2026 Update

In 2026, Apache NiFi continues to be widely used for real-time data pipelines, with improvements in scalability and integration with cloud environments. It remains a strong choice for streaming data use cases.

Real Experience Insight

In real-world scenarios, NiFi is often used in complex environments where real-time data processing is critical. While powerful, it requires careful setup and monitoring, making it better suited for experienced data engineering teams.

Airbyte

Airbyte is one of the fastest-growing open-source ETL tools in 2026, built specifically for modern data teams that need flexibility, scalability, and control over data pipelines. Unlike traditional ETL platforms, Airbyte is designed with a connector-first architecture, making it extremely powerful for integrating a wide range of data sources.

At its core, Airbyte focuses on one key problem: moving data reliably from any source to any destination—and doing it in a way that is extensible, developer-friendly, and future-proof.

Why Airbyte Stands Out in 2026

Airbyte has gained massive popularity because it solves one of the biggest limitations of older ETL tools: limited connectors and lack of flexibility.

With Airbyte, teams can:

- Use 300+ pre-built connectors

- Build custom connectors easily (using its open-source framework)

- Deploy pipelines in cloud or self-hosted environments

- Scale pipelines without vendor lock-in

This makes it a top choice for startups, scaleups, and even enterprises that want full control over their data pipelines.

Another key advantage is its open-source foundation, which allows teams to:

- Customize pipelines

- Avoid high licensing costs

- Contribute to the ecosystem

AI Capabilities and Vector Data Readiness (2026 Focus)

In 2026, Airbyte has become highly relevant in AI and LLM-based data pipelines.

Unlike legacy ETL tools, Airbyte is actively evolving to support:

- Unstructured data ingestion (PDFs, APIs, logs, documents)

- Data pipelines for AI/ML workflows

- Integration with modern AI stacks

Most importantly, Airbyte can be used to feed data into:

- Vector databases (such as Pinecone, Weaviate, Milvus)

- Embedding pipelines for LLMs

- Retrieval-Augmented Generation (RAG) systems

This makes Airbyte one of the few ETL tools that fits naturally into AI-first architectures.

While it does not generate embeddings itself, it acts as the data ingestion backbone for AI systems.

Core Features

- Open-source ETL platform

- 300+ connectors (rapidly growing ecosystem)

- Custom connector development support

- Cloud and self-hosted deployment

- Incremental data sync

- Strong API-first architecture

Data Observability & Reliability

Airbyte integrates with modern observability tools, allowing teams to:

- Monitor pipeline health

- Detect data sync failures

- Track schema changes

- Ensure data consistency

In advanced setups, it can be combined with:

- Great Expectations (data validation)

- Monte Carlo (data observability)

This aligns with the 2026 trend of self-healing pipelines, where systems detect and fix issues automatically.

Use Cases (US Market Focus)

Airbyte is widely used for:

- Modern data stack pipelines

- SaaS data integration

- Feeding data into AI/ML systems

- Building RAG pipelines

- Data lake and warehouse ingestion

It is especially useful when:

- Teams need custom connectors

- Data sources are diverse

- AI pipelines require flexible ingestion

Pros

- Open-source and highly flexible

- Rapidly growing connector ecosystem

- Strong fit for AI and modern data stacks

- Supports custom development

- No vendor lock-in

Cons

- Requires technical expertise for setup

- UI is improving but not as polished as competitors

- Maintenance required for self-hosted version

- Enterprise features may require paid plans

Pricing

Airbyte offers:

- Free open-source version

- Cloud version with usage-based pricing

- Enterprise plans for advanced features

2026 Update

In 2026, Airbyte has significantly expanded its support for AI-driven data pipelines. It is increasingly used as a data ingestion layer for LLM applications, especially in systems that rely on vector databases and unstructured data.

Real Experience Insight

In real-world usage, Airbyte is often chosen by teams that outgrow basic ETL tools. Its flexibility and open-source nature make it ideal for building custom pipelines, especially in AI-focused projects where standard connectors are not enough.

Fivetran

Fivetran is one of the most widely adopted managed ETL tools in 2026, known for its fully automated data pipelines and enterprise-grade reliability. It is designed for teams that want to move data from source systems to data warehouses without managing infrastructure, code, or pipeline maintenance.

Unlike open-source tools like Airbyte, Fivetran follows a fully managed approach, where everything—from connector updates to schema changes—is handled automatically.

Why Fivetran Stands Out in 2026

Fivetran is built for simplicity and reliability at scale.

Its core strength lies in:

- Automated data extraction and loading

- Schema drift handling

- Continuous data syncing

- Minimal manual intervention

This makes it a top choice for companies that want hands-off data pipelines.

In real-world enterprise environments, Fivetran is often used when:

- Data reliability is critical

- Engineering resources are limited

- Speed of deployment matters

AI Capabilities and Vector Pipeline Readiness

In 2026, Fivetran has started evolving toward AI-ready data integration, though its capabilities are still more indirect compared to tools like Airbyte.

It supports:

- Structured and semi-structured data ingestion

- Integration with cloud warehouses used for AI

- Automated pipeline optimization

Fivetran can be used in AI workflows to:

- Feed clean data into machine learning systems

- Support analytics-driven AI models

- Provide consistent data for downstream processing

However, it has limitations in:

- Handling unstructured data at scale

- Direct vector database integration

- Custom AI pipeline flexibility

This positions Fivetran as a reliable ingestion layer, but not a fully AI-native ETL tool.

Data Observability & Self-Healing Pipelines

One of Fivetran’s strongest features in 2026 is its move toward self-healing pipelines.

It automatically:

- Detects schema changes

- Adjusts pipelines without breaking

- Alerts users to issues

- Maintains pipeline reliability

This reduces the need for constant monitoring and manual fixes.

Core Features

- Fully managed ETL pipelines

- Automated schema handling

- Real-time and batch data syncing

- 300+ connectors

- High reliability and uptime

- Integration with major data warehouses

Use Cases (US Market Focus)

Fivetran is widely used for:

- Enterprise data integration

- Marketing and sales analytics

- SaaS data centralization

- Business intelligence pipelines

- Reliable data ingestion for analytics

It is especially useful when:

- Teams want zero-maintenance pipelines

- Data consistency is critical

- Fast deployment is required

Pros

- Fully automated pipelines

- Extremely reliable and stable

- Easy to set up and use

- Strong connector ecosystem

- Minimal maintenance required

Cons

- Expensive at scale

- Limited customization

- Less flexibility compared to open-source tools

- Not ideal for complex transformations

Pricing

Fivetran uses a usage-based pricing model, typically based on Monthly Active Rows (MAR).

This can become expensive as data volume increases.

2026 Update

In 2026, Fivetran has enhanced its automation and improved pipeline reliability. It continues to lead in managed ETL solutions, especially for enterprises prioritizing stability over flexibility.

Real Experience Insight

In real-world usage, Fivetran is often chosen by lean teams that don’t want to manage pipelines manually. It works extremely well out of the box, but cost can become a concern as data usage scales.

READ MORE – The 8 Best ETL Tools for Databricks

Pentaho (Hitachi Vantara)

Pentaho is a long-standing ETL and data integration platform known for its powerful data transformation capabilities and enterprise-grade flexibility. In 2026, it remains relevant for organizations that need deep customization, hybrid deployment, and strong control over data workflows.

Unlike modern no-code tools, Pentaho is more technical and developer-focused, making it suitable for complex enterprise environments.

Why Pentaho Stands Out in 2026

Pentaho is built for organizations that need:

- Full control over ETL pipelines

- Custom transformations

- Integration with legacy systems

- Hybrid (on-prem + cloud) deployments

Its visual interface allows pipeline design, but it also supports advanced scripting and customization, making it highly flexible.

This makes Pentaho a strong choice for enterprises with:

- Complex infrastructure

- Legacy systems

- Custom data requirements

AI Capabilities and Data Processing Role

Pentaho is not an AI-first ETL tool, but it plays a role in data preparation for AI systems.

It supports:

- Large-scale data transformation

- Structured data processing

- Integration with analytics platforms

In AI workflows, Pentaho is used to:

- Clean and prepare datasets

- Feed structured data into ML models

- Support traditional analytics pipelines

However, it lacks:

- Native AI automation

- Vector database integration

- Modern AI pipeline features

This positions it as a traditional but powerful ETL engine.

Core Features

- Visual ETL pipeline builder

- Advanced data transformation capabilities

- Support for scripting and customization

- Integration with databases and legacy systems

- Hybrid deployment support

- Scalable architecture

Use Cases (US Market Focus)

Pentaho is commonly used for:

- Enterprise data integration

- Legacy system integration

- Complex data transformation

- Data warehousing

- Custom ETL pipelines

It is especially useful when:

- High customization is required

- Legacy systems are involved

- Teams have strong technical expertise

Pros

- Highly flexible and customizable

- Strong transformation capabilities

- Works well in hybrid environments

- Suitable for complex pipelines

- Mature and stable platform

Cons

- Steep learning curve

- Outdated UI compared to modern tools

- Limited AI features

- Requires technical expertise

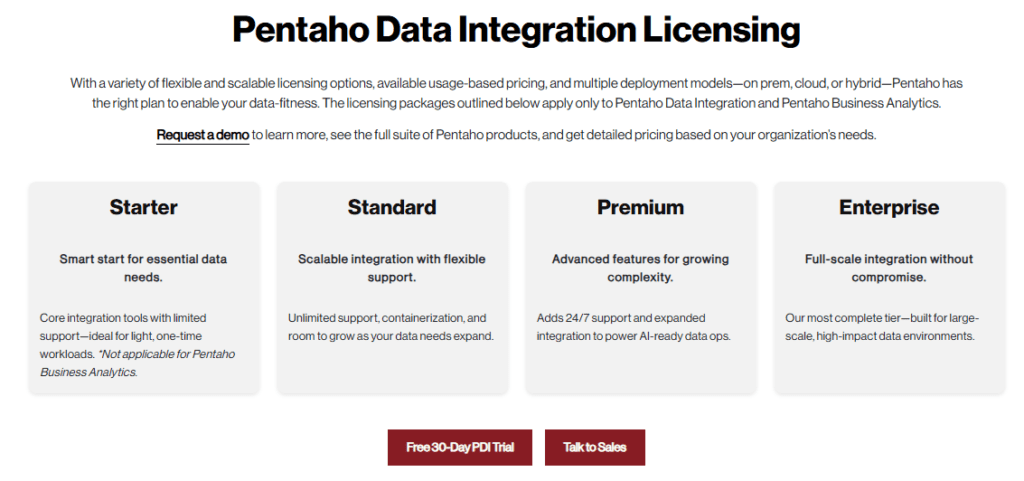

Pentaho Pricing

Pentaho offers:

- Community (free) version

- Enterprise version with advanced features

- Custom pricing for enterprise deployments

2026 Update

In 2026, Pentaho continues to serve enterprise use cases where flexibility and control are more important than simplicity. While it has not evolved significantly toward AI, it remains a reliable ETL platform.

Real Experience Insight

In real-world scenarios, Pentaho is often used in legacy-heavy environments where modern tools struggle to integrate. It provides deep control, but requires experienced developers to manage effectively.

IBM DataStage

IBM DataStage is a high-performance ETL tool built for enterprise-scale data integration, governance, and complex transformation workflows. In 2026, it continues to be a strong choice for large organizations that require massive data processing capabilities combined with strict compliance and security standards.

As part of the IBM data ecosystem, DataStage is deeply integrated with IBM Cloud Pak for Data, making it a central component in enterprise data platforms that handle critical business operations.

Why IBM DataStage Stands Out in 2026

IBM DataStage is designed for high-throughput, parallel data processing.

Its core strength lies in its ability to:

- Process extremely large datasets efficiently

- Run parallel jobs for faster performance

- Handle complex transformation logic

- Operate across distributed systems

This makes it ideal for enterprises dealing with:

- Big data environments

- Financial transactions

- Regulatory data pipelines

- Mission-critical analytics systems

Unlike lightweight ETL tools, DataStage is built for performance and reliability at scale.

AI Capabilities and Enterprise AI Readiness

In 2026, IBM DataStage has evolved to support AI-driven data integration within enterprise ecosystems.

Through integration with IBM’s AI stack (including Watson services), it supports:

- AI-assisted data pipeline design

- Automated data classification

- Data governance for AI compliance

- Integration with machine learning workflows

It plays a key role in:

- Preparing high-quality datasets for AI models

- Supporting enterprise AI initiatives

- Managing data pipelines for analytics and ML

While it does not directly handle vector databases, DataStage contributes by:

- Structuring and validating data before AI processing

- Ensuring compliance for sensitive data (PII, financial data)

This makes it a trusted data foundation for AI systems in regulated industries.

Data Observability & Reliability

IBM DataStage supports enterprise-grade monitoring and observability, including:

- Pipeline performance tracking

- Error detection and logging

- Data lineage visibility

- Governance and compliance auditing

It can also integrate with broader observability systems to support self-healing pipeline architectures, aligning with 2026 trends.

Core Features

- Parallel processing engine

- Advanced data transformation capabilities

- Integration with IBM Cloud Pak for Data

- Strong governance and compliance tools

- Scalable enterprise architecture

- Hybrid and multi-cloud support

Use Cases (US Market Focus)

IBM DataStage is widely used for:

- Enterprise data warehousing

- Financial data processing

- Healthcare data integration

- Regulatory compliance pipelines

- Large-scale analytics systems

It is especially useful when:

- Data volume is extremely high

- Compliance and security are critical

- Enterprise infrastructure is complex

Pros

- Extremely powerful for large-scale data processing

- Strong governance and compliance features

- Reliable and stable for enterprise use

- Integration with IBM ecosystem

- High performance with parallel processing

Cons

- Expensive enterprise pricing

- Complex setup and maintenance

- Requires skilled professionals

- Less flexible compared to modern open-source tools

IBM DataStage Pricing

IBM DataStage uses enterprise-level pricing, typically based on custom quotes depending on deployment and usage.

2026 Update

In 2026, IBM DataStage has strengthened its integration with AI and governance tools, focusing on enterprise AI readiness and compliance. It remains a top choice for large organizations with critical data needs.

Real Experience Insight

In real-world enterprise environments, DataStage is often used where performance and compliance cannot be compromised. It requires investment and expertise, but delivers reliability and scalability for mission-critical systems.

Oracle Data Integrator (ODI)

Oracle Data Integrator (ODI) is a high-performance ETL/ELT platform designed for enterprise data integration within Oracle-driven ecosystems. In 2026, it continues to be a powerful choice for organizations that rely on Oracle databases and need fast, scalable, and optimized data transformation directly inside their systems.

Unlike traditional ETL tools, ODI follows an ELT-based approach, meaning it pushes data transformation workloads directly to the target database (such as Oracle Autonomous Database). This results in better performance and reduced data movement overhead.

Why Oracle Data Integrator Stands Out in 2026

ODI is built for efficiency and performance at scale.

Its key strength lies in:

- Leveraging database engines for transformations

- Reducing external processing overhead

- Optimizing large-scale data workflows

This makes it highly effective for:

- Enterprises using Oracle databases

- High-volume transactional systems

- Complex data warehousing environments

ODI is not a general-purpose ETL tool—it is highly optimized for Oracle environments, which is both its strength and limitation.

AI Capabilities and Data Intelligence

In 2026, Oracle Data Integrator has expanded its role in AI-ready data pipelines through integration with Oracle’s cloud and AI services.

It supports:

- Data preparation for machine learning models

- Integration with Oracle AI and analytics tools

- Automated data transformation workflows

ODI plays a role in AI systems by:

- Structuring and transforming large datasets

- Feeding data into analytics and ML pipelines

- Supporting enterprise AI workflows

However, it has limited direct support for:

- Vector databases

- Unstructured data pipelines

- LLM-specific workflows

This makes it a strong traditional enterprise ETL tool, but less flexible for cutting-edge AI use cases.

Data Observability & Pipeline Optimization

ODI includes monitoring and optimization features such as:

- Execution tracking

- Error handling and logging

- Performance tuning

- Data lineage visibility

It can also integrate with enterprise observability tools, helping organizations move toward self-healing pipelines.

Core Features

- ELT-based architecture

- Deep integration with Oracle databases

- High-performance data transformation

- Visual workflow design

- Scalable enterprise deployment

- Strong data integration capabilities

Use Cases (US Market Focus)

Oracle Data Integrator is widely used for:

- Oracle-based data warehousing

- Enterprise data integration

- Financial and transactional systems

- Large-scale data transformation

- Analytics pipelines within Oracle environments

It is especially useful when:

- Organizations rely heavily on Oracle infrastructure

- Performance optimization is critical

- Data volume is high

Pros

- Optimized for Oracle ecosystem

- High performance and scalability

- Efficient ELT processing

- Strong enterprise capabilities

- Reliable for large workloads

Cons

- Limited outside Oracle environments

- Expensive enterprise pricing

- Complex setup and configuration

- Less flexibility for modern AI workflows

Pricing

Oracle Data Integrator uses enterprise pricing, typically based on licensing and deployment requirements.

2026 Update

In 2026, ODI continues to evolve within Oracle’s cloud ecosystem, focusing on performance, scalability, and integration with AI services. It remains a strong choice for Oracle-centric enterprises.

Real Experience Insight

In real-world usage, ODI is often selected by enterprises already invested in Oracle technologies. It performs exceptionally well in that environment, but may not be the best fit for multi-cloud or AI-first architectures.

Matillion ETL

Matillion ETL is a cloud-native ETL tool designed for modern cloud data warehouses like Snowflake, BigQuery, Redshift, and Databricks. Unlike traditional ETL software, Matillion is purpose-built for cloud architecture, offering fast deployments, low maintenance, and scalability.

Why Matillion ETL Stands Out in 2026

Matillion focuses on cloud-first ELT. Instead of moving data out for transformation, it leverages the power of the cloud warehouse to process data in place, making it highly efficient for large-scale datasets. Its visual interface allows both technical and business users to design workflows easily.

Key advantages include:

- Native connectors for 50+ cloud services

- Low-code interface for transformations

- Real-time integration capabilities

- Flexible orchestration of pipelines

Matillion also offers automation and scheduling, helping teams build repeatable and reliable data workflows.

AI & Modern Data Capabilities

In 2026, Matillion has added features to support AI and analytics workflows:

- Integrates with ML/AI pipelines by transforming and cleaning data for model training

- Pre-built connectors for AI-powered SaaS tools

- Automates data prep for RAG pipelines and LLM-driven analytics

While it doesn’t directly host AI models, it prepares high-quality data that powers AI solutions effectively.

Data Observability

Matillion includes:

- Monitoring dashboards

- Error notifications

- Logging for compliance

- Integrations with observability tools like Monte Carlo

These features support self-healing pipelines, which are essential for modern enterprise data management.

Core Features

- Cloud-native ELT design

- Visual workflow orchestration

- 50+ connectors for cloud and SaaS tools

- Scheduling and automation

- Built-in job logging and monitoring

- Scalable cloud deployment

Use Cases (US Market)

- Cloud-based analytics for enterprises

- AI/ML data preparation

- Marketing and sales data integration

- Multi-cloud integration

- Real-time reporting pipelines

Matillion is ideal for enterprises moving to cloud-first architectures and requiring robust, scalable ETL pipelines without heavy on-prem infrastructure.

Pros

- Cloud-native and highly scalable

- Easy to use low-code interface

- Optimized for modern warehouses

- Supports AI-ready pipelines

- Flexible orchestration and automation

Cons

- Requires cloud data warehouse adoption

- Pricing can scale with usage

- Less suited for legacy on-prem workflows

Pricing

Matillion uses subscription-based pricing depending on cloud warehouse size and number of users. Typically starts with pay-as-you-go models, with enterprise options by quote.

2026 Update

Matillion in 2026 is fully cloud-optimized with new connectors for AI tools and automation. It emphasizes AI-ready data pipelines, self-healing workflows, and native orchestration across multiple cloud warehouses.

Real Experience Insight

In practice, Matillion excels for marketing, sales, and analytics teams in the US who need fast, reliable cloud ETL. Users report faster onboarding, easier transformation management, and seamless integration with Snowflake and BigQuery for AI and BI pipelines.

READ MORE – Best No-Code ETL Tools in 2026

Apache Nifi

Apache NiFi is a powerful open-source data integration and automation platform that excels in real-time data streaming, routing, and transformation. Unlike traditional ETL tools, NiFi focuses on event-driven, flow-based programming, making it ideal for enterprises handling continuous data streams.

Why Apache NiFi Stands Out in 2026

- Real-time ingestion of structured and unstructured data

- Visual drag-and-drop interface for designing data flows

- Enterprise-grade security with SSL, TLS, and role-based access

- Scalable horizontally for high-volume pipelines

Apache NiFi has become increasingly relevant in the US market for IoT, AI, and large-scale streaming data. Its flow-based architecture allows teams to react quickly to data changes without heavy coding.

AI & Modern Data Integration

In 2026, NiFi supports AI-ready workflows:

- Data prep for AI/ML pipelines, including LLM datasets

- Integration with vector databases for RAG pipelines

- Automates cleaning, routing, and transformation for AI analytics

NiFi’s extension framework allows custom processors, meaning enterprises can directly connect data streams to AI systems like Pinecone, Weaviate, or Milvus for vector search and embedding.

Data Observability

NiFi integrates with monitoring tools to ensure:

- Self-healing pipelines

- Real-time error alerts

- Provenance tracking for regulatory compliance

This makes NiFi a trusted choice for enterprises needing data governance and robust operational visibility.

Pros

- Open-source and free to use

- Scalable for high-throughput pipelines

- Supports real-time streaming and batch processing

- Excellent for AI/ML pipelines and vector database prep

- Visual interface for workflow design

Cons

- Steep learning curve for beginners

- Requires infrastructure for deployment

- Not a low-code tool compared to cloud ETL solutions

Pricing

Apache NiFi is free and open-source, though enterprise support is available through Cloudera or Hortonworks subscription plans.

Real Experience Insight

US enterprises report that NiFi is ideal for IoT telemetry, AI data prep, and real-time analytics. Once the pipeline is set up, teams can automate data flows fully, reducing errors and improving decision-making speed.

After an in‑depth analysis of 20+ ETL tools for data integration in 2026, it’s clear that modern ETL platforms are no longer just “data movers.” They are foundational components of intelligent data systems — responsible for feeding analytics, powering AI and LLM workflows, and ensuring real‑time observability across complex architectures.

This final section pulls everything together: