Jai Bharoji Baba

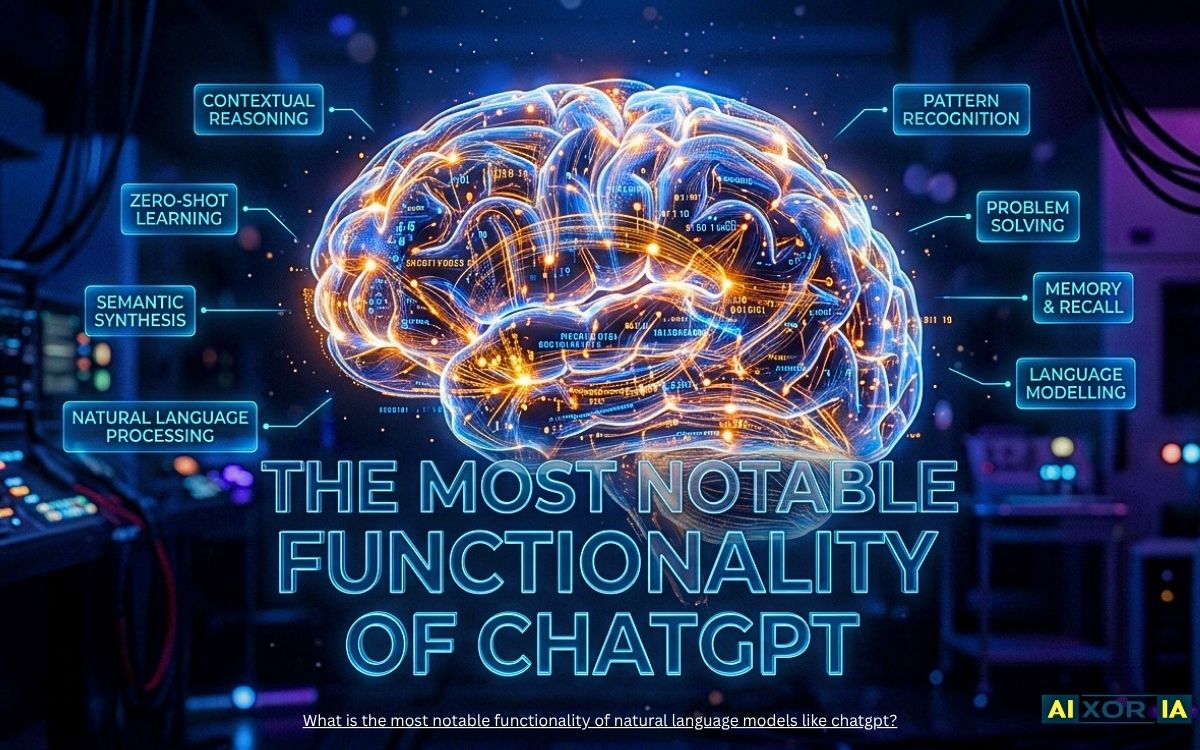

The digital landscape of 2026 is no longer just about searching for information; it is about the synthesis of knowledge. At the heart of this revolution are Large Language Models (LLMs) like ChatGPT. While many see them as mere “chatbots,” their technical core offers something far more profound.

But what exactly is the most notable functionality of these models? It isn’t just the ability to mimic human speech—it is Contextual Reasoning and Emergent Intelligence.

1. Contextual Synthesis: Beyond Keyword Matching

The most significant leap from traditional AI to modern LLMs is the transition from “matching” to “understanding.” Traditional systems looked for keywords; LLMs look for intent.

Through a mechanism known as Self-Attention, these models weigh the importance of different words in a sentence regardless of their distance from each other. This allows the AI to maintain a “thread of thought” over thousands of words.

The Mathematical Edge of Attention

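To understand why ChatGPT feels so “human,” we look at the Attention mechanism. In technical terms, the model scores the relationship between every pair of words using the formula below, where $Q$, $K$, and $V$ are the query, key, and value matrices derived from the input tokens and $d_k$ is the dimension of the key vectors: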

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V$$

This allows the model to use surrounding words to resolve ambiguity. In the sentence “The bank eroded after the river flooded,” attention ties “bank” to “river” and “flooded,” so the model reads it as geography, not finance.
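To make the formula concrete, here is a minimal sketch of scaled dot-product attention in plain Python. The two-dimensional vectors are hand-picked for illustration and are not real learned embeddings, and the helper names (`softmax`, `attention`) are my own. Production models use many attention heads and far larger matrices.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention over lists of row vectors."""
    d_k = len(K[0])
    outputs, all_weights = [], []
    for q in Q:
        # One score per key: dot(q, k) / sqrt(d_k)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Output row is the weighted sum of the value vectors
        row = [sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))]
        outputs.append(row)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical toy vectors: "bank" and "river" point in a similar direction,
# "loan" points elsewhere.
tokens = ["bank", "river", "loan"]
K = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
V = K
Q = [[2.0, 0.0]]  # query for "bank" in a river-related sentence

outputs, weights = attention(Q, K, V)
for token, weight in zip(tokens, weights[0]):
    print(f"{token}: {weight:.3f}")
```

With these toy numbers the query places more weight on “river” than on “loan,” which is the kind of signal a trained model can use to pick the geographic sense of “bank.”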

2. Emergent Zero-Shot Learning

Perhaps the most “notable” functionality that surprised even its creators is Zero-Shot Learning.

This is the ability of a model to perform a task it was never explicitly trained to do. For example, if you ask a 2026-era LLM to “Explain quantum physics in the style of a 1920s jazz musician,” it doesn’t look up a database of jazz-physics hybrids. It reasons about the stylistic constraints of jazz and the factual constraints of physics simultaneously.
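In practice, “zero-shot” usually means the prompt carries the instruction alone, with no worked examples. The sketch below contrasts that with a few-shot prompt. The `build_prompt` helper and the pirate example are hypothetical and only illustrate the shape of the text sent to a model; no real API is called.

```python
def build_prompt(task, examples=()):
    """Assemble a prompt; with no examples it is a zero-shot prompt."""
    lines = []
    for source, target in examples:
        # Each worked example is shown as an input/output pair
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    lines.append(f"Input: {task}")
    lines.append("Output:")
    return "\n".join(lines)

task = "Explain quantum physics in the style of a 1920s jazz musician."

# Zero-shot: the model sees only the instruction
zero_shot = build_prompt(task)

# Few-shot: the same instruction preceded by one made-up demonstration
few_shot = build_prompt(
    task,
    examples=[("Explain gravity in the style of a pirate.",
               "Arr, the sea floor pulls ye down...")],
)

print(zero_shot)
```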

3. The 2026 Performance Matrix: LLM Capabilities

In the current market, not all LLMs are created equal. Here is how the top models stack up in terms of their core functionalities:

| Functionality | GPT-4o / GPT-5 | Claude 4 | Gemini 3 Flash |
| --- | --- | --- | --- |
| Context Window | 200k+ Tokens | 300k+ Tokens | 1M+ Tokens |
| Reasoning Depth | Extremely High | High (Nuanced) | High (Fast) |
| Multimodal Native | Yes (Audio/Video) | Yes (Vision) | Yes (Video/Music) |
| Primary Strength | Creative Reasoning | Safety & Ethics | Speed & Integration |

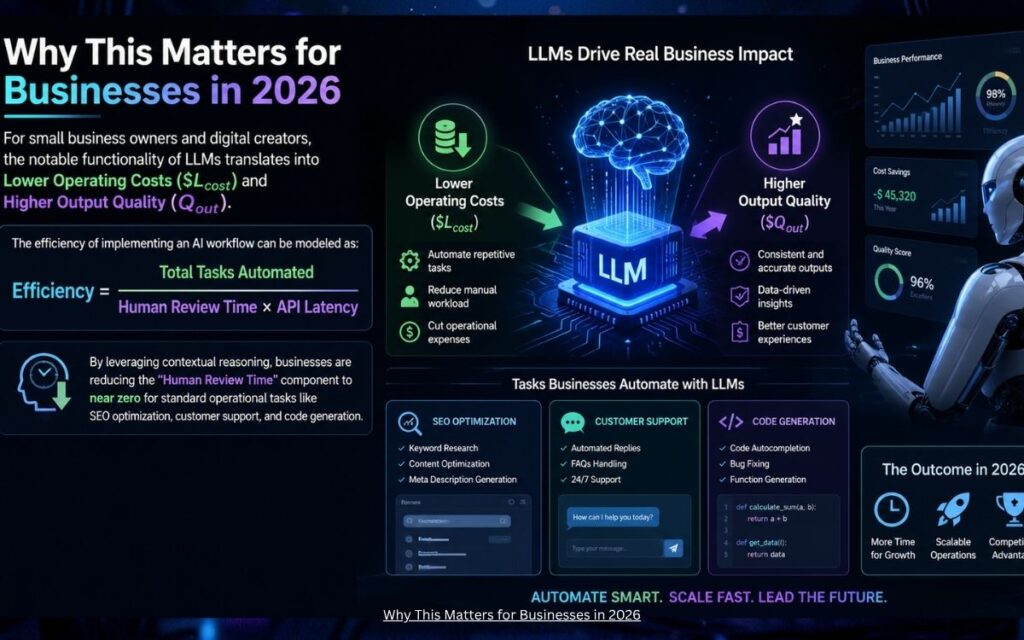

4. Why This Matters for Businesses in 2026

For small business owners and digital creators, the notable functionality of LLMs translates into Lower Operating Costs ($L_{cost}$) and Higher Output Quality ($Q_{out}$).

The efficiency of implementing an AI workflow can be modeled as:

$$\text{Efficiency} = \frac{\text{Total Tasks Automated}}{\text{Human Review Time} \times \text{API Latency}}$$

By leveraging contextual reasoning, businesses are reducing the “Human Review Time” component to near zero for standard operational tasks like SEO, customer support, and code generation.
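The efficiency ratio is easy to sanity-check with a few numbers. This is a rough sketch: the article does not specify units, so hours for review time and seconds for latency are assumptions, and the function name is my own.

```python
def efficiency(tasks_automated, review_hours, latency_seconds):
    """Efficiency = tasks / (review time * latency), per the ratio above.

    Units are an assumption: hours for review, seconds for latency.
    """
    if review_hours <= 0 or latency_seconds <= 0:
        # The ratio is undefined when either denominator term is zero
        raise ValueError("review_hours and latency_seconds must be positive")
    return tasks_automated / (review_hours * latency_seconds)

baseline = efficiency(tasks_automated=100, review_hours=2.0, latency_seconds=0.5)
improved = efficiency(tasks_automated=100, review_hours=1.0, latency_seconds=0.5)

print(baseline, improved)
```

Halving the review time doubles the score. Because review time sits in the denominator, the ratio is undefined at exactly zero, so “near zero” should be read as small but still positive.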

5. Final Verdict: The Power of “In-Context” Adaptation

The most notable functionality of ChatGPT and its peers is their adaptability. Unlike fixed software, an LLM is a “fluid” intelligence. It becomes whatever the prompt requires—a coder, a poet, a strategist, or a translator.

In 2026, the real winners aren’t those who have the best AI, but those who know how to trigger this contextual reasoning to solve real-world problems.

Frequently Asked Questions (FAQs)

1. What is the most notable functionality of ChatGPT and other LLMs?

The most notable functionality is Contextual Reasoning powered by the Transformer Architecture. Unlike previous AI that looked for keywords, LLMs understand the relationship between words in a sequence. This allows them to maintain a “thread of thought” and provide highly relevant, human-like responses based on the intent of the prompt rather than just matching text.

2. How do Natural Language Models understand human context?

LLMs use a mechanism called Self-Attention. This mathematical process assigns different “weights” to words in a sentence to determine their significance.

3. What is “Emergent Ability” in Large Language Models?

Emergent abilities are tasks that a model can perform even though it wasn’t explicitly trained for them. This includes:

Zero-Shot Learning: Solving a problem with no prior examples.

Logical Reasoning: Breaking down complex math or coding problems step-by-step (Chain of Thought).

4. How do LLMs differ from traditional Search Engines?

A traditional search engine such as Google is an Information Retrieval system; it points you to a source that already exists. An LLM is a Generative Synthesis system; it draws on patterns learned from vast amounts of data to compose a new response tailored to your query.

Key Difference: Search engines give you a list of links; LLMs give you a direct, synthesized answer.

5. Can LLMs like ChatGPT truly “think” or “reason”?

While it feels like they are thinking, LLMs actually perform Probabilistic Inference. They predict the most likely “next token” (word piece) based on the patterns they learned during training. However, in 2026, the complexity of these predictions has reached a level where the output is functionally indistinguishable from human reasoning for most professional tasks.
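A toy version of next-token prediction shows the idea. The sketch below counts which word follows which in a tiny made-up corpus and turns the counts into probabilities. Real LLMs use neural networks over subword tokens rather than simple counts, so treat this as an illustration of the principle only.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, split on whitespace (real models use subword tokens)
corpus = "the river flooded the bank and the river rose".split()

# Count which token follows each token
following = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    following[current][nxt] += 1

def next_token_probs(token):
    """Return a probability for each token seen after `token`."""
    counts = following[token]
    total = sum(counts.values())
    if total == 0:
        return {}  # token never appeared with a successor
    return {tok: n / total for tok, n in counts.items()}

probs = next_token_probs("the")
best = max(probs, key=probs.get)

print(probs)
print("most likely next token:", best)
```

Here “river” follows “the” twice and “bank” once, so the model predicts “river.” An LLM does the same kind of ranking, but conditions on the entire preceding context instead of a single word.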
