A Practical Explanation with Real-World Examples, Technical Insight, and Modern AI Workflows

Artificial intelligence has evolved from systems that simply analyze data to systems that can create entirely new content. This shift is largely driven by generative AI models, which power modern tools used for writing, design, coding, and multimedia creation.

But many people still ask a fundamental question:

What is the primary goal of a generative AI model?

Once the concept is clear, the answer is surprisingly simple.

The primary goal of a generative AI model is to learn patterns and structures from massive datasets and then generate new, original content that closely resembles real-world data—such as human-written text, realistic images, music, or functional code.

Instead of just identifying patterns, generative AI models recreate those patterns in new forms, allowing machines to simulate creativity and assist humans in producing work faster and more efficiently.

Tools developed by organizations like OpenAI and Google have made generative AI accessible to millions of users worldwide.

To truly understand the primary goal of generative AI, we need to explore how these models work, their technical foundations, and how they are used in real-world workflows.

Understanding the Core Goal of Generative AI

At its core, a generative AI model attempts to replicate the underlying structure of real-world data.

When a model is trained, it studies millions or billions of examples—articles, images, voice recordings, or pieces of software code. From those examples, it learns patterns such as:

- grammar and writing style

- visual composition in images

- rhythm and tone in audio

- logical structures in programming

Once those patterns are learned, the model can generate new outputs that follow the same patterns but are not exact copies of the training data.

This is why generative AI can:

- write essays

- generate artwork

- produce music

- assist with coding

- simulate conversations

The key objective is not memorization—it is pattern learning and pattern recreation.

The Mathematical Goal of Generative AI

From a technical perspective, generative AI models aim to approximate the true probability distribution of real-world data.

In machine learning terminology, the real data distribution is represented as:

p_data(x)

The model tries to learn a new distribution:

p_θ(x)

The objective is to make the model distribution as close as possible to the real data distribution.

Mathematically, this process is often expressed as minimizing the Kullback-Leibler (KL) divergence:

$$\min_{\theta} D_{\mathrm{KL}}\big(p_{\text{data}}(x) \,\|\, p_\theta(x)\big)$$

This equation represents the effort to reduce the statistical difference between:

- real-world data

- AI-generated data
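Expanding the KL divergence shows why this objective is practical to optimize. The term involving only the real data does not depend on θ, so minimizing the divergence is equivalent to maximizing the average log-probability the model assigns to real data. In this short derivation, $x_1, \dots, x_N$ (notation introduced here) stand for the N training examples, and the last step approximates the expectation with their average:

$$D_{\mathrm{KL}}\big(p_{\text{data}}(x) \,\|\, p_\theta(x)\big) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log p_{\text{data}}(x)\big] - \mathbb{E}_{x \sim p_{\text{data}}}\big[\log p_\theta(x)\big]$$

$$\arg\min_{\theta} D_{\mathrm{KL}}\big(p_{\text{data}}(x) \,\|\, p_\theta(x)\big) = \arg\max_{\theta} \mathbb{E}_{x \sim p_{\text{data}}}\big[\log p_\theta(x)\big] \approx \arg\max_{\theta} \frac{1}{N}\sum_{i=1}^{N} \log p_\theta(x_i)$$

This maximum-likelihood view describes models such as autoregressive language models directly; GANs, covered later, are trained with a different, adversarial objective.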

In simpler terms:

The model repeatedly adjusts its internal parameters to shrink the difference between the distribution of real content and the distribution of generated content.

The closer that difference gets to zero, the more natural and realistic the generated output tends to appear to humans.
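To make "shrinking the difference" concrete, here is a minimal Python sketch under simplifying assumptions: the "real" distribution is a hypothetical four-outcome categorical distribution, and the model p_θ is softmax(θ) over four logits, fitted by plain gradient descent on the KL divergence. The names `fit`, `softmax`, and `kl_divergence` are illustrative, not from any library. Real generative models have billions of parameters and learn from samples rather than from a known p_data, but the loop has the same shape: measure the gap, nudge θ, repeat.

```python
import math

def softmax(logits):
    # Subtract the max before exponentiating for numerical stability
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q):
    # D_KL(p || q) = sum_x p(x) * log(p(x) / q(x)); terms with p(x) == 0 contribute 0
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def fit(p_data, steps=500, lr=0.5):
    theta = [0.0] * len(p_data)  # start from a uniform model
    history = []
    for _ in range(steps):
        p_theta = softmax(theta)
        history.append(kl_divergence(p_data, p_theta))
        # Gradient of D_KL(p_data || softmax(theta)) w.r.t. theta is p_theta - p_data
        theta = [t - lr * (q - p) for t, q, p in zip(theta, p_theta, p_data)]
    return softmax(theta), history

p_data = [0.5, 0.3, 0.15, 0.05]  # hypothetical "real" distribution over 4 outcomes
p_model, history = fit(p_data)
print(f"KL at start: {history[0]:.4f}, KL at end: {history[-1]:.2e}")
print([round(v, 3) for v in p_model])
```

Inspecting `history` shows the divergence falling steadily toward zero as the model distribution approaches the target.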

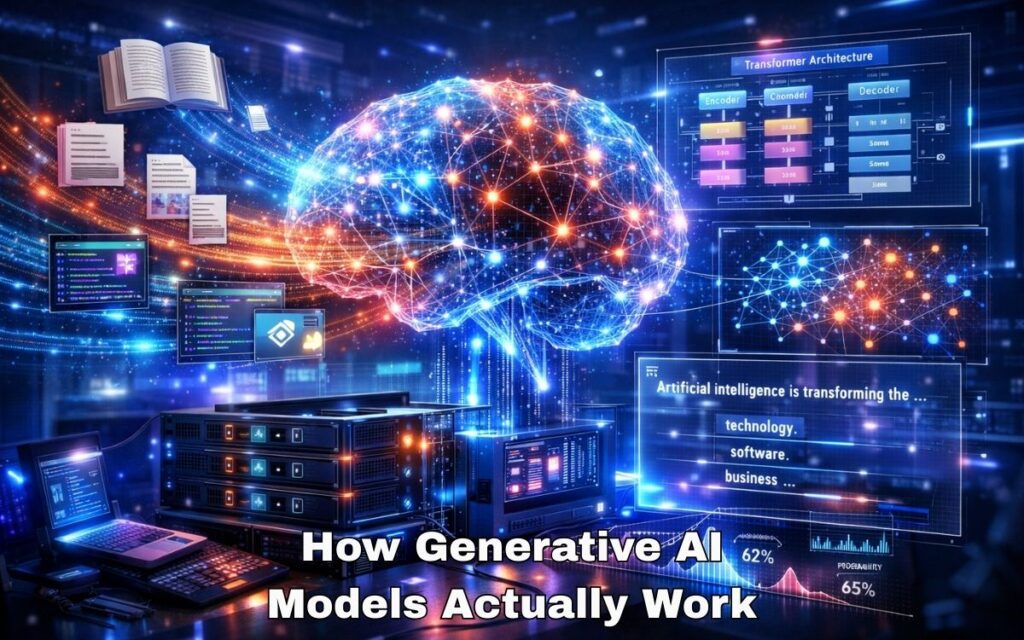

How Generative AI Models Actually Work

While different architectures exist, most generative AI systems follow three fundamental stages.

1. Data Collection and Training

The first stage involves training the model on extremely large datasets.

For text models, this may include:

- books

- research papers

- websites

- programming repositories

During training, the system learns statistical relationships between words, sentences, and ideas.
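A deliberately simplified stand-in for "learning statistical relationships between words" is a bigram model: count which word follows which in a corpus, then turn the counts into probabilities. Modern text models use neural networks over subword tokens rather than raw word counts, so treat this as an illustration only; the corpus and the function name `train_bigram_model` are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigram_model(corpus):
    """Count which word follows which, then normalise counts into probabilities."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current_word, next_word in zip(words, words[1:]):
            counts[current_word][next_word] += 1
    model = {}
    for word, followers in counts.items():
        total = sum(followers.values())
        model[word] = {nxt: c / total for nxt, c in followers.items()}
    return model

corpus = [  # a tiny hypothetical training corpus
    "ai is transforming the software industry",
    "ai is transforming the technology industry",
    "cloud computing is transforming the business world",
]
model = train_bigram_model(corpus)
print(model["is"])   # "is" was always followed by "transforming"
print(model["the"])  # three continuations, each seen once
```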

In image models, the training process involves learning patterns such as:

- shapes

- lighting

- colors

- textures

The training stage is computationally expensive and often requires powerful GPUs or AI clusters.

2. Pattern Recognition and Representation

After exposure to large datasets, the model begins to build internal representations of patterns.

Modern generative AI models rely heavily on deep learning architectures, especially transformer networks.

These architectures allow the system to understand context, relationships, and dependencies across large sequences of data.
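The mechanism that lets transformers relate every position in a sequence to every other is attention. Below is a minimal pure-Python sketch of scaled dot-product attention, softmax(QKᵀ/√d)·V. To keep it short it assumes queries, keys, and values are the raw input vectors themselves; real transformers first project inputs with learned weight matrices and run many attention heads in parallel. The 2-dimensional token vectors are hypothetical.

```python
import math

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(queries, keys, values):
    """For each query, mix all value vectors using weights softmax(q . k / sqrt(d))."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Weighted sum of value vectors, one output dimension at a time
        mixed = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical 2-dimensional vectors for a 3-token sequence (used as Q, K and V)
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = scaled_dot_product_attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and shows how strongly one token "attends" to every token in the sequence, however far apart they are.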

For example, a text model learns that in a sentence like:

“Artificial intelligence is transforming the ___ industry.”

Words such as technology, software, or business are statistically more likely to appear than unrelated words.

This probability-based reasoning enables the model to generate coherent outputs.
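For the fill-in-the-blank example above, a model assigns each candidate word a raw score (a logit) and converts the scores into probabilities with a softmax. The scores in this sketch are invented for illustration; a real model produces them from the whole preceding context and over a vocabulary of tens of thousands of tokens.

```python
import math

def next_word_distribution(logits):
    """Turn raw scores (logits) into probabilities with a numerically stable softmax."""
    m = max(logits.values())
    exps = {word: math.exp(score - m) for word, score in logits.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

# Hypothetical scores for "Artificial intelligence is transforming the ___ industry."
logits = {"technology": 4.0, "software": 3.5, "business": 3.0, "banana": -2.0}
probs = next_word_distribution(logits)
for word, p in sorted(probs.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{word:>10}: {p:.3f}")
```

The unrelated word is not impossible, just vanishingly unlikely, which is what "statistically more likely" means in practice.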

3. Content Generation

Once training is complete, the model can generate new outputs based on user prompts.

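For instance, when someone asks a chatbot to explain quantum computing or write marketing copy, the system predicts a probability distribution over possible next tokens (words or word fragments) and selects one, either the most probable token or a sample drawn from that distribution.

By repeating this step token by token, appending each new token to the context, the model constructs full paragraphs or entire articles.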
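The token-by-token loop can be sketched as follows. The hand-written `NEXT_TOKEN_PROBS` table is a hypothetical stand-in for a trained model (a real model recomputes the distribution from the entire context at every step). The sketch contrasts greedy decoding (temperature 0, always take the most probable token) with temperature sampling, which is one common way chat systems introduce variety.

```python
import math
import random

# Hypothetical next-token table standing in for a trained model:
# previous token -> {candidate next token: probability}
NEXT_TOKEN_PROBS = {
    "<start>": {"generative": 0.7, "modern": 0.3},
    "generative": {"ai": 0.9, "models": 0.1},
    "modern": {"ai": 1.0},
    "ai": {"creates": 0.6, "writes": 0.4},
    "models": {"create": 1.0},
    "creates": {"content": 0.8, "images": 0.2},
    "create": {"content": 1.0},
    "writes": {"code": 0.5, "text": 0.5},
}

def sample_next(probs, temperature, rng):
    if temperature == 0:  # greedy decoding: always take the most probable token
        return max(probs, key=probs.get)
    # Raise probabilities to 1/temperature (via logs), then sample proportionally
    weights = [math.exp(math.log(p) / temperature) for p in probs.values()]
    return rng.choices(list(probs), weights=weights, k=1)[0]

def generate(temperature=1.0, max_tokens=10, seed=0):
    rng = random.Random(seed)
    token, output = "<start>", []
    for _ in range(max_tokens):
        if token not in NEXT_TOKEN_PROBS:  # no known continuation: stop
            break
        token = sample_next(NEXT_TOKEN_PROBS[token], temperature, rng)
        output.append(token)
    return " ".join(output)

print(generate(temperature=0))    # greedy
print(generate(temperature=1.0))  # sampled; depends on the seed
```

Lower temperatures concentrate choices on the most likely tokens; higher ones flatten the distribution and make output more varied.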

A well-known example is ChatGPT, which generates human-like responses by predicting language patterns learned during training.

Generative AI Inputs vs Outputs

Generative AI systems can work with multiple types of input data. The outputs vary depending on the training dataset and model architecture.

| Type of Input Data | What Generative AI Can Create | Popular AI Tools (2026) |
|---|---|---|
| Text Data | Articles, emails, research summaries, stories | ChatGPT, Claude, Gemini |
| Image Data | AI artwork, photorealistic images, illustrations | Midjourney, DALL-E |
| Audio Data | Voice cloning, podcasts, music tracks | ElevenLabs, Suno |
| Code Repositories | Debugging, software scripts, HTML/CSS | GitHub Copilot, Cursor |

Each category represents a different application of the same fundamental goal: generating realistic outputs from learned patterns.

A Real-World Example: How Generative AI Accelerates a Content Workflow

To understand the practical goal of generative AI, consider the workflow of a website owner or digital content creator.

A few years ago, creating a long-form article involved several time-consuming steps:

- researching sources

- outlining the article

- writing the draft

- editing and optimizing content

- formatting the final post

This process could take three to six hours per article.

Today, generative AI tools dramatically reduce the time required.

For example, a creator might use:

- AI tools to brainstorm topic ideas

- a language model to generate a structured outline

- automated tools to summarize research papers

- AI assistants to refine grammar and readability

The result is not fully automated writing—it is AI-assisted productivity.

Instead of replacing human creativity, generative AI compresses repetitive tasks, allowing creators to focus on strategy, originality, and expertise.

My Brief Hands-On Experience with Generative AI Tools

Over the past year, I experimented with several generative AI tools while building and managing online content.

One of the most noticeable differences came when I started using ChatGPT to organize article outlines.

Previously, creating a well-structured blog outline required manually reviewing multiple articles and summarizing their ideas. With AI assistance, the outline could be generated within minutes.

However, the most valuable part was not the automated writing—it was idea expansion.

For instance, when exploring topics about artificial intelligence tools, I could quickly test different angles such as:

- beginner tutorials

- technical explanations

- real-world use cases

Another interesting experiment involved testing image generation platforms for blog illustrations. Using AI-generated images allowed rapid creation of unique visual concepts without relying on stock photos.

Despite these advantages, the best results still required human editing, fact-checking, and personal insights.

The real benefit of generative AI was speed: tasks that previously took several hours could often be completed in 30–40 minutes.

Major Types of Generative AI Models

Generative AI systems rely on different architectures depending on the type of content being produced.

Transformer Models

Transformer-based architectures dominate modern AI systems.

They power conversational AI, text generation, and coding assistants.

These models are highly effective at understanding long-range relationships within sequences of data.

Generative Adversarial Networks (GANs)

GANs have been widely used for image generation.

They consist of two competing neural networks:

- a generator that creates images

- a discriminator that evaluates them

Through continuous competition, the generator improves until the images become highly realistic.
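The "competition" is expressed through two loss functions. This sketch computes the standard binary cross-entropy discriminator loss and the commonly used non-saturating generator loss from hypothetical discriminator outputs (probabilities that a sample is real). The networks themselves and the alternating training loop are omitted; only the scoring that drives the competition is shown.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: push D(x) toward 1 for real samples and D(G(z)) toward 0 for fakes."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: low when the discriminator rates fakes as real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs (probability that each sample is real)
d_real = [0.9, 0.8, 0.95]
early_fakes = [0.1, 0.2, 0.05]   # early on, the discriminator easily spots fakes
later_fakes = [0.45, 0.5, 0.55]  # later, the generator has improved and D is unsure

print(discriminator_loss(d_real, early_fakes), generator_loss(early_fakes))
print(discriminator_loss(d_real, later_fakes), generator_loss(later_fakes))
```

As the generator improves, its loss falls while the discriminator's rises, which is the competitive pressure the text describes.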

Variational Autoencoders (VAEs)

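VAEs are another generative approach. They encode each input into a compressed probabilistic representation (a latent space) and decode samples from that space back into data, which lets them create variations of existing data.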

They are often used in:

- image generation

- anomaly detection

- data simulation
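Two ingredients make VAEs work: sampling a latent code with the reparameterization trick, and a KL penalty that keeps the latent codes close to a standard normal distribution. This sketch assumes a hypothetical encoder has already produced a mean `mu` and log-variance `log_var` for one input; the decoder that would turn `z` back into data is not shown.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1); keeps sampling differentiable w.r.t. mu and sigma."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """Closed-form KL( N(mu, sigma^2) || N(0, 1) ), summed over latent dimensions."""
    return sum(0.5 * (m * m + math.exp(lv) - 1.0 - lv) for m, lv in zip(mu, log_var))

rng = random.Random(42)
# Hypothetical encoder output for one input: mean and log-variance of a 2-D latent code
mu, log_var = [0.5, -1.0], [-0.2, 0.1]
z1 = reparameterize(mu, log_var, rng)
z2 = reparameterize(mu, log_var, rng)  # a second draw decodes to a slightly different variation
print(z1, z2, kl_to_standard_normal(mu, log_var))
```

Because each draw of `z` differs slightly, decoding several samples for the same input is how a VAE produces variations of it.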

Why Generative AI Is Transforming Digital Industries

Generative AI has gained massive attention because it significantly enhances productivity across many sectors.

Content Creation

Writers and marketers can generate drafts, headlines, and summaries faster.

Software Development

AI coding assistants help developers write and debug code more efficiently.

Design and Creative Work

Artists and designers can rapidly explore new visual ideas.

Education

AI tools can explain complex topics and generate study materials.

Scientific Research

Researchers can simulate chemical structures and accelerate discovery processes.

These capabilities demonstrate how generative AI acts as a creative accelerator rather than a replacement for human expertise.

Limitations and Challenges of Generative AI

Despite its capabilities, generative AI also presents several challenges.

Accuracy Problems

AI models sometimes generate incorrect or misleading information because they rely on statistical prediction rather than true understanding.

Ethical Concerns

The technology can be misused to create deepfakes or misinformation.

Data Bias

If training data contains bias, generated outputs may also reflect those biases.

Addressing these issues requires responsible development, transparency, and improved evaluation techniques.

The Future of Generative AI

Generative AI is still evolving rapidly.

Researchers are working on models capable of producing:

- full-length films

- entire software systems

- complex scientific simulations

- realistic virtual environments

Future models will likely integrate text, images, audio, and video into unified systems capable of generating multi-modal experiences.

As these technologies mature, generative AI will likely become an essential tool across nearly every digital profession.

Key Takeaways

The primary goal of a generative AI model is to learn patterns from real-world data and generate new content that reflects those patterns.

By approximating the statistical distribution of training data, generative AI can produce text, images, audio, and code that appear realistic and useful.

While the technology continues to improve, its most powerful role today is augmenting human creativity and productivity, allowing individuals and businesses to work faster while exploring new ideas.

Generative AI does not replace human thinking—it amplifies it.
