A decade ago, most artificial intelligence systems were designed to analyze and classify existing information. Search engines ranked pages, spam filters categorized emails, and recommendation systems predicted what users might want next.

In 2026, however, the paradigm has shifted dramatically.

Instead of simply organizing data, modern AI systems are increasingly focused on creating new information. This is the defining capability of generative models within the field of Artificial Intelligence.

The primary goal of a generative AI model is to learn the underlying probability distribution of a dataset and generate new outputs that follow the same patterns, structure, and logic as the original data.

This capability enables machines to produce:

- human-like text

- realistic images

- software code

- music and audio

- 3D models

- synthetic datasets

Tools such as ChatGPT, Midjourney, and Claude demonstrate how generative models can transform simple prompts into sophisticated creative outputs.

But to truly understand the goal of generative AI, we must examine the mathematical objectives, architectural principles, and real-world implementation strategies behind these systems.


The Mathematical Objective of Generative AI

At its core, generative AI is not magic or creativity in the human sense. It is a statistical modeling process.

The central objective is to approximate the true probability distribution of data.

If we represent the real-world dataset distribution as $P(x)$

and the model’s predicted distribution as $Q(x)$,

the training objective is to minimize the divergence between these two distributions.

One commonly used measurement is the Kullback–Leibler divergence:

$$D_{\mathrm{KL}}(P \,\|\, Q) = \sum_{x \in X} P(x) \log \frac{P(x)}{Q(x)}$$

KL divergence is never negative, and it equals zero only when the two distributions are identical. The closer this value is to zero, the more accurately the model replicates the structure of real-world data.

When training drives this value low enough, the model can generate outputs that are difficult to distinguish from human-created content.
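To make the divergence concrete, here is a minimal sketch that computes it for small discrete distributions. The token names and probabilities are invented for illustration, and the function name `kl_divergence` is an assumption, not part of any library.

```python
import math

def kl_divergence(p, q):
    """Return D_KL(P || Q) in nats for two discrete distributions.

    p and q are dicts mapping each outcome to its probability.
    Outcomes with P(x) = 0 contribute nothing. If Q(x) = 0 where
    P(x) > 0, the divergence is infinite.
    """
    total = 0.0
    for outcome, p_x in p.items():
        if p_x == 0.0:
            continue
        q_x = q.get(outcome, 0.0)
        if q_x == 0.0:
            return math.inf
        total += p_x * math.log(p_x / q_x)
    return total

# Hypothetical "true" distribution over three tokens, plus two candidate models.
true_dist = {"cat": 0.5, "dog": 0.3, "fish": 0.2}
poor_model = {"cat": 0.1, "dog": 0.1, "fish": 0.8}
good_model = {"cat": 0.48, "dog": 0.32, "fish": 0.20}

print(kl_divergence(true_dist, poor_model))  # large: the model fits badly
print(kl_divergence(true_dist, good_model))  # small: the model fits well
print(kl_divergence(true_dist, true_dist))   # 0.0 for identical distributions
```

The model whose probabilities sit closer to the true distribution gets the smaller score, which is the behavior the training objective rewards.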

However, this mathematical objective is only one layer of the broader generative AI goal.

Latent Space: The Hidden Map of Concepts

A crucial concept behind generative models is latent space representation.

Latent space is essentially a compressed mathematical map of the dataset.

Instead of memorizing every example, the model learns abstract relationships between features.

For example:

- in text models, words with similar meaning appear close together

- in image models, similar visual features cluster in nearby regions

- in music models, similar tonal patterns form relationships

Within this space, concepts become vectors rather than raw data.

For instance:

King - Man + Woman ≈ Queen

This vector arithmetic, a well-known result from word embedding models, illustrates how learned representations capture semantic relationships rather than treating tokens in isolation.

The goal of generative AI is therefore not only to store data, but to navigate latent space efficiently to synthesize new combinations of learned patterns.
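The King/Queen arithmetic can be reproduced with a toy example. The vectors below are hand-made for illustration, not learned by any model, and their four axes (royalty, male, female, food) are an assumption chosen to make the geometry easy to follow.

```python
import math

# Hand-made toy embeddings (NOT learned). Axes: royalty, male, female, food.
embeddings = {
    "king":   [0.9, 0.8, 0.1, 0.0],
    "queen":  [0.9, 0.1, 0.8, 0.0],
    "man":    [0.1, 0.9, 0.1, 0.0],
    "woman":  [0.1, 0.1, 0.9, 0.0],
    "prince": [0.8, 0.8, 0.1, 0.0],
    "apple":  [0.0, 0.0, 0.0, 1.0],
}

def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def analogy(a, b, c):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the inputs."""
    target = [x - y + z for x, y, z in
              zip(embeddings[a], embeddings[b], embeddings[c])]
    candidates = [w for w in embeddings if w not in {a, b, c}]
    return max(candidates, key=lambda w: cosine(target, embeddings[w]))

print(analogy("king", "man", "woman"))  # queen
```

Real embeddings have hundreds or thousands of dimensions with no human-readable axes, but the nearest-neighbor lookup works the same way.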

The Three Core Pillars of Generative AI Goals

Although generative AI appears to perform many tasks, its objectives can be grouped into three fundamental pillars.

1. Probability Distribution Modeling

The most fundamental goal is predicting the most likely continuation of a pattern.

For text models, this means predicting the next token in a sequence.

For image models, it means predicting pixel structures and textures.

For example, when using ChatGPT, the system is not retrieving pre-written sentences. Instead, it builds its response one token at a time, selecting each token from a probability distribution learned from its training data.

In simplified terms, the model repeatedly estimates:

$$P(\text{token}_t \mid \text{token}_1, \dots, \text{token}_{t-1})$$

This process allows language models to generate coherent paragraphs, maintain context, and mimic human writing styles.
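A tiny bigram model shows the same loop in miniature. This is a deliberate simplification: it conditions only on the single previous token rather than the full history, and the corpus and function names are made up for illustration.

```python
import random
from collections import Counter, defaultdict

# Hypothetical training corpus.
corpus = "the cat sat on the mat . the dog sat on the rug ."
tokens = corpus.split()

# Count how often each token follows each preceding token.
follow_counts = defaultdict(Counter)
for prev, nxt in zip(tokens, tokens[1:]):
    follow_counts[prev][nxt] += 1

def next_token_distribution(prev):
    """Estimate P(next token | previous token) from the counts."""
    counts = follow_counts[prev]
    total = sum(counts.values())
    return {tok: n / total for tok, n in counts.items()}

def generate(start, length, seed=0):
    """Sample up to `length` further tokens, one at a time."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        dist = next_token_distribution(out[-1])
        if not dist:  # no observed continuation for this token
            break
        choices, weights = zip(*dist.items())
        out.append(rng.choices(choices, weights=weights)[0])
    return " ".join(out)

print(next_token_distribution("the"))
print(generate("the", 8))
```

Large language models replace the count table with a neural network and condition on thousands of earlier tokens, but generation is still repeated sampling from a predicted distribution.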

2. Dimensionality Reduction and Reconstruction

Modern datasets contain enormous complexity.

Images may contain millions of pixel values. Text documents contain thousands of tokens. Video contains both spatial and temporal data.

Generative AI systems therefore compress data into lower-dimensional latent representations, then reconstruct them.

This approach is used by architectures such as Variational Autoencoders (VAEs).

The encoder compresses information: $x \to z$

The decoder reconstructs it: $z \to \hat{x}$

The generative goal is to ensure: $x \approx \hat{x}$

This process allows models to capture essential features while ignoring irrelevant noise.
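The encode, decode, and compare cycle can be sketched with a fixed linear projection. This is not a VAE: nothing is learned, there is no probabilistic latent variable, and the data and projection direction are hand-picked assumptions. It only illustrates compressing a 2-D point to one number and measuring how much is lost.

```python
import math

# Hypothetical 2-D data that lies close to the line y = 2x.
data = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2), (4.0, 7.8)]

# Fixed unit direction along y = 2x. A real autoencoder would learn its weights.
norm = math.sqrt(1.0 ** 2 + 2.0 ** 2)
direction = (1.0 / norm, 2.0 / norm)

def encode(x):
    """Compress a 2-D point x into one latent number z."""
    return x[0] * direction[0] + x[1] * direction[1]

def decode(z):
    """Reconstruct a 2-D point x_hat from the latent number z."""
    return (z * direction[0], z * direction[1])

def reconstruction_error(x):
    """Euclidean distance between x and its reconstruction x_hat."""
    x_hat = decode(encode(x))
    return math.hypot(x[0] - x_hat[0], x[1] - x_hat[1])

for point in data:
    print(point, "->", round(encode(point), 3), "error", round(reconstruction_error(point), 3))
```

Points near the line reconstruct almost perfectly from a single number, while the small deviations from the line are discarded, which matches the idea of keeping essential features and dropping noise.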

3. Creative Synthesis

Unlike traditional databases, generative AI does not simply retrieve stored content.

Instead, it combines learned concepts to synthesize new outputs.

For example, if an image model understands:

- “cyberpunk aesthetic”

- “Paris architecture”

it can produce a novel concept such as Cyberpunk Paris — a city that does not exist but appears visually plausible.

This ability to blend conceptual representations is the foundation of AI-driven creativity.

Agentic Workflows: The New Goal of Generative AI Systems

In 2026, generative AI is evolving beyond single responses.

A new paradigm called agentic workflows is emerging.

In these systems, generative models operate as autonomous agents capable of planning multi-step tasks.

Instead of answering a single prompt, the AI can:

- understand a goal

- break it into subtasks

- execute each step

- verify results

For instance, an AI coding assistant might:

- analyze a software repository

- detect outdated dependencies

- rewrite sections of code

- run simulated tests

- produce a final report

This shift moves generative AI closer to problem-solving systems than to simple content generators.
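The plan, execute, verify loop described above can be sketched without any model at all. Everything here is hypothetical: the package names, the version numbers, and the hard-coded plan, which a real agent would ask a generative model to produce.

```python
# Hypothetical installed and latest versions of some dependencies.
INSTALLED = {"libalpha": "1.2", "libbeta": "3.0", "libgamma": "0.9"}
LATEST = {"libalpha": "1.4", "libbeta": "3.0", "libgamma": "1.0"}

def find_outdated(state):
    """Subtask 1: record which dependencies lag behind the latest version."""
    state["outdated"] = sorted(
        name for name, version in state["installed"].items()
        if version != LATEST[name]
    )

def update_versions(state):
    """Subtask 2: bump every outdated dependency."""
    for name in state["outdated"]:
        state["installed"][name] = LATEST[name]

def verify(state):
    """Check the goal: every dependency matches its latest version."""
    return state["installed"] == LATEST

def run_agent(installed):
    """Run each planned step in order, then verify and report."""
    state = {"installed": dict(installed), "outdated": [], "report": []}
    plan = [find_outdated, update_versions]  # fixed plan, for illustration only
    for step in plan:
        step(state)
        state["report"].append(f"ran {step.__name__}")
    state["report"].append("verified" if verify(state) else "verification failed")
    return state

result = run_agent(INSTALLED)
print(result["outdated"])
print(result["report"])
```

The key design point is the separate `verify` step: the agent checks its own result against the goal instead of assuming each step succeeded.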

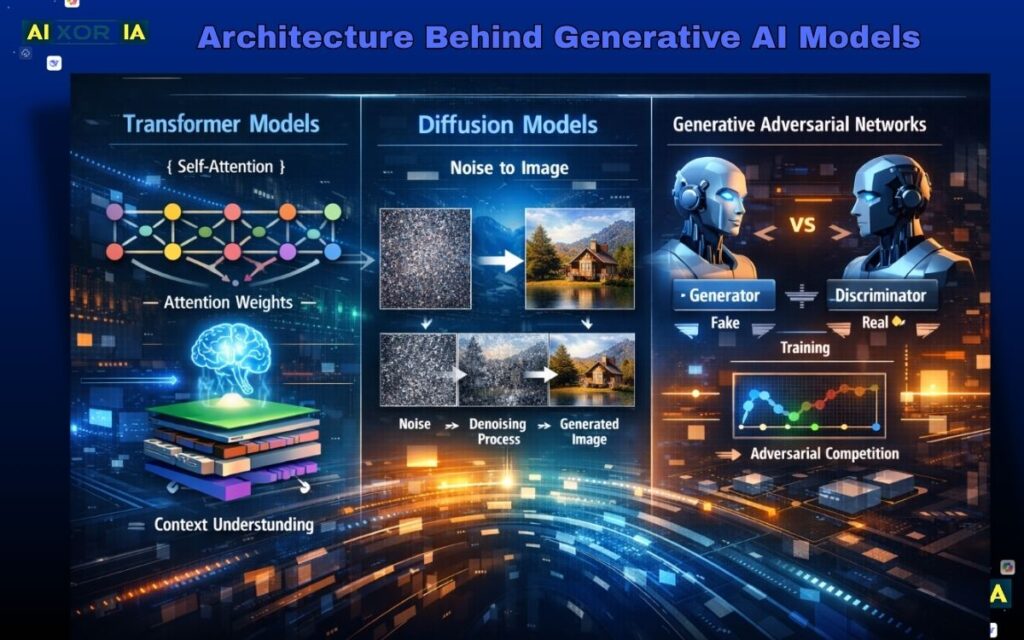

Architecture Behind Generative AI Models

To achieve these goals, generative AI relies on several powerful neural network architectures.

Transformer Models

Most modern language models are based on the Transformer architecture introduced in 2017.

Transformers rely on a mechanism known as self-attention.

Self-attention allows the model to determine which words in a sequence are most important when generating the next token.

This mechanism calculates relationships between tokens using attention weights.

In practice, this allows models to understand context across long sequences.

For example, when reading a paragraph, the model can still reference information introduced several sentences earlier.
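A minimal sketch of scaled dot-product self-attention follows. It makes two simplifying assumptions: queries, keys, and values are the raw token vectors (real Transformers apply learned projections first), and there is no causal mask, so every token can attend to every other token. The input vectors are invented.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that are positive and sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values.

    Returns (outputs, weights), where weights[i][j] is how strongly
    token i attends to token j.
    """
    dim = len(vectors[0])
    outputs, weights = [], []
    for query in vectors:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        row = softmax(scores)
        weights.append(row)
        outputs.append([
            sum(w * value[i] for w, value in zip(row, vectors))
            for i in range(dim)
        ])
    return outputs, weights

# Three hypothetical token vectors; the first and third are identical.
token_vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(token_vectors)
for row in weights:
    print([round(w, 3) for w in row])
```

The first token gives more weight to the third token, which has the same vector, than to the second, showing how attention weights track similarity between tokens regardless of their distance in the sequence.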

Diffusion Models

Diffusion models power many modern image generators.

Their goal is to reverse a gradual noising process.

Training involves gradually adding noise to an image until it becomes random static.

The model then learns to reverse the process step by step.

At generation time, the model begins with pure noise and gradually reconstructs a structured image.

This technique produces highly detailed and photorealistic visuals.
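The noising half of this process can be written in a few lines. The sketch below assumes a DDPM-style formulation with a linear noise schedule; the schedule values, function names, and the three-number "image" are illustrative assumptions. The reverse step here reuses the true noise, whereas a trained model would have to predict it.

```python
import math
import random

def noise_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule (needs num_steps >= 2).

    Returns the cumulative products alpha_bar_t, i.e. the fraction of
    original signal variance remaining after each step.
    """
    alpha_bars, product = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        product *= 1.0 - beta
        alpha_bars.append(product)
    return alpha_bars

def add_noise(x0, alpha_bar, rng):
    """Sample x_t = sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * eps."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * e
          for x, e in zip(x0, eps)]
    return xt, eps

def denoise_with_known_noise(xt, eps, alpha_bar):
    """Invert add_noise exactly, given the noise that was added."""
    return [(x - math.sqrt(1.0 - alpha_bar) * e) / math.sqrt(alpha_bar)
            for x, e in zip(xt, eps)]

alpha_bars = noise_schedule(1000)
rng = random.Random(0)
image = [0.5, -1.0, 2.0]  # stand-in for pixel values
noisy, eps = add_noise(image, alpha_bars[500], rng)
print(alpha_bars[0], alpha_bars[-1])  # signal fraction at first and last step
print(denoise_with_known_noise(noisy, eps, alpha_bars[500]))
```

By the final step almost none of the original signal remains, which is why generation can start from pure noise. The hard part, learning to estimate `eps` from the noisy input alone, is what the neural network is trained to do.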

Generative Adversarial Networks

GANs operate using two competing neural networks.

The generator creates synthetic samples.

The discriminator attempts to determine whether the samples are real or artificial.

Through repeated competition, the generator improves until the discriminator can no longer distinguish the fake content from real data.

This adversarial training strategy encourages extremely realistic outputs.
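This competition is usually written as a minimax game. The notation below is the standard formulation rather than something stated in this article: $G$ is the generator, $D$ is the discriminator, $z$ is random noise drawn from a prior $p_z$, and $P$ is the real data distribution.

$$\min_G \max_D \; \mathbb{E}_{x \sim P}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]$$

The discriminator tries to push $D(x)$ toward 1 for real samples and $D(G(z))$ toward 0 for generated ones, while the generator tries to push $D(G(z))$ toward 1.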

Real-World Experience With Leading Generative AI Tools

Understanding theory is important, but practical interaction with generative systems reveals their real capabilities.

Below are brief observations from using several leading tools.

Experience Using ChatGPT

One interesting experiment involved generating structured research summaries.

Instead of asking the model for a simple explanation, I tested its reasoning ability by providing fragmented research notes and asking it to synthesize a structured report.

The model successfully identified relationships between unrelated notes and organized them into coherent sections.

The most noticeable advantage was contextual reasoning across long prompts, something traditional AI systems struggled to achieve.

However, the system occasionally introduced assumptions that were not explicitly present in the data, highlighting the importance of human verification.

Experience Using Midjourney

When experimenting with Midjourney, I attempted to generate consistent branding visuals.

The challenge was maintaining a consistent color palette and lighting style across multiple images.

By iteratively refining prompts and referencing earlier outputs, the system produced a set of images that shared a coherent visual identity.

What stood out most was the model’s ability to translate abstract descriptions into visual form, such as turning phrases like “minimalist cybernetic architecture” into complex imagery.

Experience Using Claude

Claude demonstrated a slightly different strength compared with other models.

In tests involving narrative writing and conversational responses, it tended to produce a more nuanced emotional tone.

When asked to generate a fictional brand origin story, the model avoided many common AI writing patterns and produced a narrative that felt closer to human storytelling.

The experience suggested that different generative models optimize for different aspects of language generation, such as reasoning, creativity, or stylistic flow.


Enterprise Applications of Generative AI

Generative AI is rapidly transforming how organizations approach productivity and innovation.

Some of the most significant applications include:

Synthetic Data Generation

Financial institutions and healthcare organizations often require large datasets to train machine learning models.

However, real-world data may contain sensitive personal information.

Generative models can create synthetic datasets that preserve statistical patterns without exposing private data.
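As a very small illustration of the idea, the sketch below fits a Gaussian to a numeric column and samples new values from it. The amounts are invented, a single Gaussian is far simpler than the models used in practice, and this sketch offers no formal privacy guarantee.

```python
import random
import statistics

# Hypothetical sensitive values, for example transaction amounts.
real_amounts = [120.0, 135.5, 99.9, 150.2, 110.4, 128.7, 142.1, 105.3]

def fit_gaussian(values):
    """Learn the two parameters of a Gaussian: mean and standard deviation."""
    return statistics.mean(values), statistics.stdev(values)

def sample_synthetic(mean, stdev, n, seed=0):
    """Draw n synthetic values from the fitted distribution."""
    rng = random.Random(seed)
    return [rng.gauss(mean, stdev) for _ in range(n)]

mean, stdev = fit_gaussian(real_amounts)
synthetic = sample_synthetic(mean, stdev, 1000)

print(round(mean, 2), round(stdev, 2))
print(round(statistics.mean(synthetic), 2), round(statistics.stdev(synthetic), 2))
```

The synthetic values share the mean and spread of the originals without containing any original record, which is the property downstream models rely on.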

Rapid Design Prototyping

Architects and product designers can now generate dozens of concept designs within minutes.

Instead of manually sketching each idea, designers can explore a wide design space and refine the most promising options.

Personalized Content Systems

Streaming platforms and digital media companies increasingly rely on generative models to produce personalized content recommendations and automated descriptions.

These systems adapt outputs based on user behavior patterns, time of day, and contextual signals.

The Hallucination Problem

Despite their impressive capabilities, generative AI systems are not flawless.

One major challenge is hallucination, where the model generates confident but incorrect information.

This issue occurs because generative models prioritize probability over factual verification.

If an incorrect statement appears statistically plausible, the model may generate it.

Researchers are currently exploring several solutions:

- retrieval-augmented generation

- verification models

- improved training datasets

Reducing hallucinations remains a major research focus.
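Retrieval-augmented generation, the first approach listed above, can be sketched as a lookup followed by prompt construction. The retriever below uses plain word overlap, which is an assumption made for brevity since production systems typically use vector search. The document store and prompt wording are hypothetical, and no model is called.

```python
def tokenize(text):
    """Lowercase the text, strip basic punctuation, and return its word set."""
    cleaned = text.lower().replace("?", "").replace(".", "")
    return set(cleaned.split())

# Hypothetical knowledge base of trusted statements.
documents = [
    "The Transformer architecture was introduced in 2017.",
    "Diffusion models generate images by reversing a noising process.",
    "GANs train a generator against a discriminator.",
]

def retrieve(question, docs):
    """Return the document sharing the most words with the question."""
    question_words = tokenize(question)
    return max(docs, key=lambda doc: len(question_words & tokenize(doc)))

def build_prompt(question, docs):
    """Ground the model by placing retrieved text ahead of the question."""
    context = retrieve(question, docs)
    return (
        "Answer using only this context.\n"
        f"Context: {context}\n"
        f"Question: {question}"
    )

print(build_prompt("When was the Transformer architecture introduced?", documents))
```

Because the answer is now present in the prompt, the model can copy a verified fact instead of generating whatever continuation seems statistically plausible.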

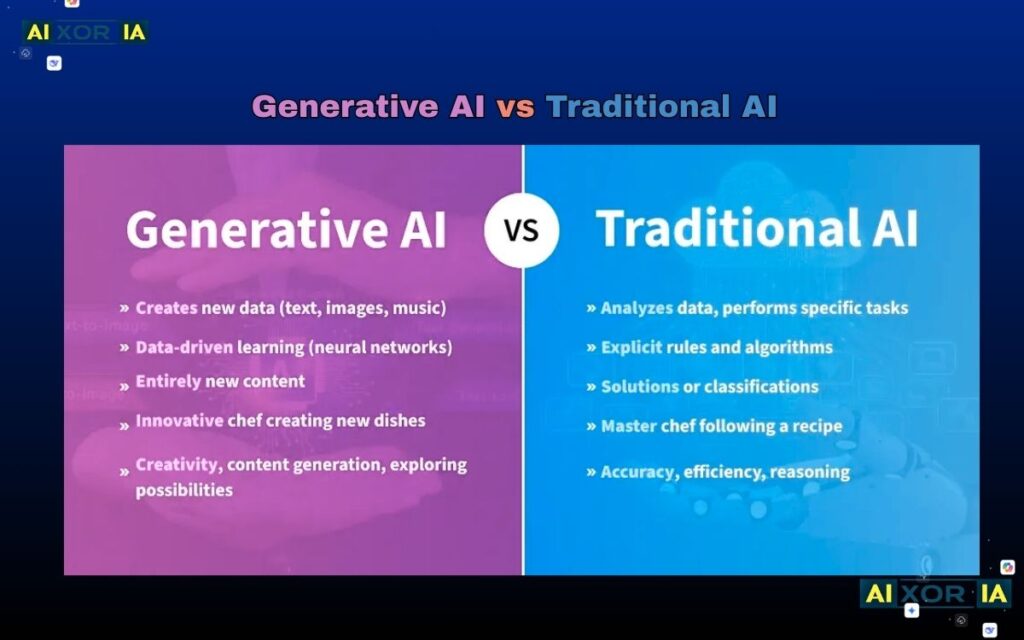

Generative AI vs Traditional AI

To better understand generative AI goals, it is helpful to compare them with earlier AI approaches.

| Feature | Traditional AI | Generative AI |
|---|---|---|
| Primary Objective | Classification and prediction | Content generation |
| Mathematical Focus | Conditional probability | Joint probability modeling |
| Output Type | Labels or scores | New content |
| Data Interaction | Analyze existing data | Create synthetic data |
| Complexity | Moderate | Extremely high |

Traditional AI systems might determine whether an email is spam.

Generative AI could write the email itself.

The Long-Term Goal: Human–AI Creative Convergence

Looking toward the future, the ultimate goal of generative AI is not simply automation.

Instead, it is creative convergence between human intelligence and machine-generated synthesis.

In this model:

- humans provide intent, imagination, and judgment

- AI systems provide computational creativity and scale

The result is a collaborative environment where machines handle repetitive creative tasks while humans focus on strategic thinking and innovation.

Rather than replacing human creativity, generative AI expands the range of ideas that individuals can explore.

Frequently Asked Questions

What is the primary goal of a generative AI model?

The primary goal is to learn the statistical patterns of a dataset and generate new outputs that follow those patterns while remaining coherent and contextually relevant.

How does generative AI differ from predictive AI?

Predictive AI analyzes data to forecast outcomes or classify information, while generative AI produces entirely new data such as text, images, audio, or code.

Why is latent space important in generative AI?

Latent space acts as an internal conceptual map where relationships between features are stored. By navigating this space, generative models can synthesize new combinations of learned patterns.

Why do generative models sometimes produce incorrect information?

Because these systems generate outputs based on probability rather than factual verification, they may occasionally produce plausible but inaccurate statements.
