Digital banking in 2026 is no longer about mobile interfaces or API integrations.

It is about agentic AI workflows operating inside core banking systems — autonomous systems that detect fraud, adjust risk models, personalize credit offers, and trigger compliance reviews without manual orchestration.

In boardroom conversations over the past few years, I’ve seen a clear shift:

- 2022: “Should we experiment with AI?”

- 2024: “How do we deploy safely?”

- 2026: “How do we measure operational intelligence efficiency?”

For fintech and Tier-1 banking leaders, the conversation has matured beyond feature comparisons. Today, AI tools are evaluated on measurable efficiency, explainability, latency, compliance posture, and integration depth.

This guide examines the best AI tools enhancing digital banking in 2026 — through a strategic lens that includes:

- Risk efficiency modeling

- Agentic workflow readiness

- Explainable AI (XAI) maturity

- EU AI Act high-risk compliance

- ISO/IEC 42001 AI governance alignment

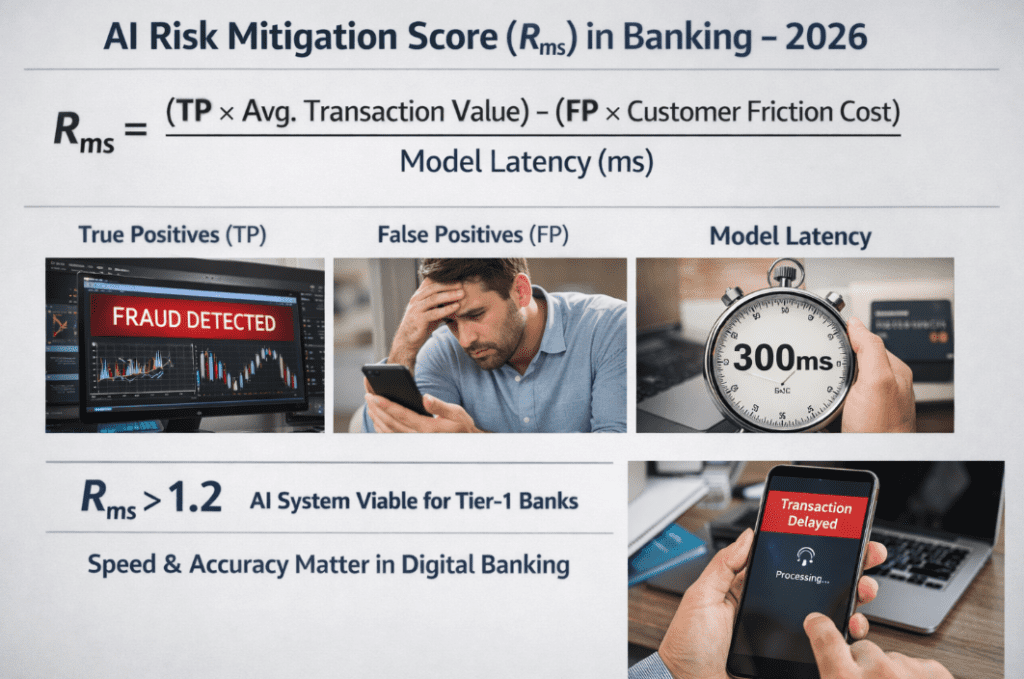

The 2026 Evaluation Logic: AI Risk Mitigation Score (Rₘₛ)

Enterprise banks now quantify AI ROI using a performance-weighted efficiency metric.

In fraud and risk evaluation committees, the following operational model is increasingly referenced:

AI Risk Mitigation Score (Rₘₛ)

“In 2026, enterprise banks evaluate AI tool ROI using the following mathematical model:”

$$R_{ms} = \frac{(TP \times \text{Avg. Transaction Value}) - (FP \times \text{Customer Friction Cost})}{\text{Model Latency (ms)}}$$

Where:

- TP = True Positives (correct fraud detections)

- FP = False Positives (legitimate transactions blocked)

- Customer Friction Cost = estimated cost per false positive (churn probability, call center overhead, brand trust impact)

- Model Latency (ms) = decisioning delay

If Rₘₛ > 1.2, the AI system is considered operationally viable for Tier-1 institutions.
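To make the score concrete, here is a minimal Python sketch of the calculation. The class and function names are hypothetical, the input figures are invented purely for illustration, and the 1.2 threshold is the one quoted above. Note that the result depends on the currency unit you plug in, so treat this as a way to compare tools on the same footing rather than as an absolute benchmark.

```python
from dataclasses import dataclass


@dataclass
class FraudModelStats:
    """Inputs to the score; field names are illustrative assumptions."""
    true_positives: int            # TP: correct fraud detections
    false_positives: int           # FP: legitimate transactions blocked
    avg_transaction_value: float   # average value per correctly caught transaction
    customer_friction_cost: float  # estimated cost per false positive
    model_latency_ms: float        # decisioning delay in milliseconds


def risk_mitigation_score(stats: FraudModelStats) -> float:
    """R_ms = (TP * avg value - FP * friction cost) / latency in ms."""
    if stats.model_latency_ms <= 0:
        raise ValueError("model_latency_ms must be positive")
    net_benefit = (
        stats.true_positives * stats.avg_transaction_value
        - stats.false_positives * stats.customer_friction_cost
    )
    return net_benefit / stats.model_latency_ms


def is_operationally_viable(stats: FraudModelStats, threshold: float = 1.2) -> bool:
    """Apply the viability threshold quoted in the article."""
    return risk_mitigation_score(stats) > threshold


# Made-up numbers: (40 * 25 - 100 * 7) / 200 = 300 / 200 = 1.5
example = FraudModelStats(
    true_positives=40,
    false_positives=100,
    avg_transaction_value=25.0,
    customer_friction_cost=7.0,
    model_latency_ms=200.0,
)
score = risk_mitigation_score(example)
print(score, is_operationally_viable(example))
```

With the same detection numbers but a 300 ms latency, the score drops to 1.0 and the tool falls below the threshold, which is the latency penalty the formula is designed to express.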

Why latency matters:

In digital banking, a 300ms delay can directly impact transaction approval flow. AI models that are accurate but slow degrade mobile experience and increase abandonment.

This formula reframes AI not as a feature — but as an efficiency engine.

1. AI for Fraud Detection & Risk Intelligence

Fraud detection remains the highest-ROI AI use case in digital banking.

🛡️ Feedzai

Primary AI Logic: Behavioral biometrics + anomaly detection

Agentic Capability: Real-time transaction orchestration

Explainability: SHAP-based risk explanation layers

2026 Compliance Positioning: PSD3 alignment + EU AI Act High-Risk readiness

Feedzai’s architecture supports cross-channel behavioral profiling. Unlike legacy rule engines, it builds adaptive digital identity graphs.

Real Experience Insight:

During a fraud stack evaluation for a mid-sized EU bank, the biggest issue wasn’t fraud detection — it was false positives killing customer trust. After switching to adaptive behavioral scoring, the fraud team reduced customer complaint tickets by nearly 30% in six months. The gain wasn’t just financial — it was reputational.

Feedzai performs strongly under the Rₘₛ model because of its balance between precision and latency optimization.

🔍 FICO

Primary AI Logic: Hybrid statistical + ML risk modeling

Explainability: Strong legacy interpretability

2026 Compliance: Deep regulatory trust footprint

FICO remains dominant where regulatory conservatism matters. For institutions prioritizing audit transparency over experimental AI, it offers a safer deployment model.

Its Falcon platform integrates seamlessly with core banking systems and supports real-time decisioning environments.

📊 Visual Architecture: Fraud Intelligence Layer

[Transaction Event]

↓

[Streaming Engine (Kafka)]

↓

[AI Risk Model Layer]

↓

[Explainability Module (XAI)]

↓

[Decision Engine]

↓

[Core Banking Response]

This layered structure is now standard in Tier-1 deployments.
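The layers above can be sketched in a few lines of Python. This is a toy under stated assumptions, not any vendor's implementation: an in-memory list stands in for the Kafka stream, the risk model is a hand-weighted linear score, and the feature names, weights, and blocking threshold are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical weights for a toy linear risk model (not from any vendor).
WEIGHTS = {"amount_ratio": 0.5, "new_device": 0.3, "foreign_ip": 0.2}
BLOCK_THRESHOLD = 0.6  # assumed cut-off for blocking a transaction


@dataclass
class Decision:
    transaction_id: str
    risk_score: float
    action: str
    reasons: list = field(default_factory=list)


def score(features):
    """AI risk model layer: weighted sum of features, each expected in [0, 1]."""
    return sum(WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS)


def explain(features):
    """Explainability module: per-feature contribution, largest first."""
    contributions = {n: WEIGHTS[n] * features.get(n, 0.0) for n in WEIGHTS}
    return sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)


def decide(event):
    """Decision engine: combine score and explanation into one response."""
    risk = score(event["features"])
    action = "block" if risk >= BLOCK_THRESHOLD else "approve"
    reasons = [name for name, value in explain(event["features"]) if value > 0]
    return Decision(event["id"], risk, action, reasons)


def process_stream(events):
    """Stand-in for a streaming consumer: yields one decision per event."""
    for event in events:
        yield decide(event)


events = [
    {"id": "t1", "features": {"amount_ratio": 0.2, "new_device": 0.0, "foreign_ip": 0.0}},
    {"id": "t2", "features": {"amount_ratio": 1.0, "new_device": 1.0, "foreign_ip": 0.0}},
]
decisions = list(process_stream(events))
for d in decisions:
    print(d.transaction_id, d.action, d.reasons)
```

The design point is that the explanation is produced alongside the score, so every response sent back to the core banking system already carries its reasons.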

2. AI for AML & Adaptive Compliance

In 2026, simply saying “AML capable” is insufficient.

Banks must evaluate AI vendors against:

- EU AI Act High-Risk Category requirements

- ISO/IEC 42001 AI Management System standards

- Global AML/CTF monitoring frameworks

🏛️ Featurespace

Primary AI Logic: Adaptive behavioral analytics

Explainability: Transparent anomaly scoring

Compliance: Global AML/CTF regulatory support

Featurespace excels at detecting unknown typologies — a critical factor as financial crime evolves.

Where an AML system is classified as high-risk under the EU AI Act, Featurespace’s monitoring and documentation capabilities support regulatory reporting.

🔎 ComplyAdvantage

Primary AI Logic: Real-time risk data intelligence

Compliance Focus: Sanctions screening + PEP monitoring

Governance: Strong audit trail capabilities

Particularly useful for challenger banks scaling cross-border operations.

3. Conversational AI & Agentic Banking Interfaces

Conversational AI is no longer chatbot-driven FAQ automation.

It is evolving into:

- Autonomous financial co-pilots

- Context-aware credit advisors

- Fraud resolution assistants

💬 Kasisto

Primary AI Logic: Financial intent recognition LLM

Explainability: Moderate

Compliance: SOC2 + GDPR aligned

Kasisto’s strength lies in domain-specific financial training, reducing hallucination risk.

🤖 IBM Watson Assistant

Primary AI Logic: Enterprise conversational AI

Deployment Flexibility: On-prem + hybrid

Compliance Positioning: ISO governance integration support

🧩 Visual Architecture: Conversational Agent Layer

[Customer Query]

↓

[NLP + Financial Intent Engine]

↓

[Policy & Compliance Filter]

↓

[Core Banking API]

↓

[Response Generator]

↓

[Audit Log Storage]

In 2026, audit logging for AI conversations is mandatory in regulated banking.
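A rough Python sketch of that flow, with the audit log written on every turn, might look like the following. Everything here is a stand-in: the keyword matcher replaces a real intent engine, `core_banking_balance` is a hypothetical stub returning fixed data, and the restricted-intent policy is invented for illustration.

```python
from datetime import datetime, timezone

AUDIT_LOG = []  # stand-in for durable audit log storage

# Hypothetical policy: intents the assistant must hand to a human.
RESTRICTED_INTENTS = {"close_account"}


def detect_intent(query):
    """Toy keyword matcher standing in for the NLP + financial intent engine."""
    text = query.lower()
    if "balance" in text:
        return "check_balance"
    if "close" in text and "account" in text:
        return "close_account"
    return "unknown"


def core_banking_balance(customer_id):
    """Stub for a core banking API call; returns fixed illustrative data."""
    return "1,250.00 EUR"


def handle_query(customer_id, query):
    """Run one turn: intent, policy filter, API call, response, audit entry."""
    intent = detect_intent(query)
    if intent in RESTRICTED_INTENTS:
        outcome = "escalated"
        reply = "I have passed this request to a human agent."
    elif intent == "check_balance":
        outcome = "answered"
        reply = f"Your balance is {core_banking_balance(customer_id)}."
    else:
        outcome = "clarify"
        reply = "Could you rephrase that?"
    # Every turn is logged, including escalations and unanswered queries.
    AUDIT_LOG.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "customer_id": customer_id,
        "query": query,
        "intent": intent,
        "outcome": outcome,
        "reply": reply,
    })
    return reply


print(handle_query("c-1", "What is my balance?"))
print(handle_query("c-1", "Please close my account"))
```

Placing the policy check before the API call means a restricted request never reaches the core banking system, while the log still records that it was asked.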

4. AI for Credit Risk & Fair Lending

Credit underwriting faces heightened scrutiny.

Explainable AI (XAI) is no longer optional — regulators are actively rejecting opaque black-box systems.

📈 Zest AI

Primary AI Logic: Fair lending ML

Explainability: Maximum (regulator-ready transparency)

Compliance: ECOA + Fair Housing + bias mitigation tools

Zest AI focuses heavily on bias detection and adverse action explanation reports — critical for lending compliance.
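One simple check that often appears in bias screening is the adverse impact ratio: the approval rate of a protected group divided by that of a reference group. The sketch below is a generic illustration and not Zest AI's method. The 0.8 cut-off is the familiar "four-fifths" rule of thumb, used here as an assumption, and the sample data is invented.

```python
def approval_rate(decisions):
    """Share of approved applications; decisions is a list of booleans."""
    if not decisions:
        raise ValueError("decisions must not be empty")
    return sum(decisions) / len(decisions)


def adverse_impact_ratio(protected, reference):
    """Approval rate of the protected group divided by the reference group's."""
    reference_rate = approval_rate(reference)
    if reference_rate == 0:
        raise ValueError("reference group has no approvals")
    return approval_rate(protected) / reference_rate


# Invented sample: 80% approval in the reference group, 60% in the protected group.
reference_group = [True] * 8 + [False] * 2
protected_group = [True] * 6 + [False] * 4

air = adverse_impact_ratio(protected_group, reference_group)  # 0.6 / 0.8, roughly 0.75
needs_review = air < 0.8  # assumed four-fifths screening threshold
print(round(air, 2), needs_review)
```

A ratio below the threshold does not prove discrimination on its own; it is a flag that the model's decisions for that segment deserve a closer look.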

📊 Upstart

Primary AI Logic: Alternative data ML modeling

Strength: Expands approval rates responsibly

However, explainability and regulatory scrutiny remain ongoing challenges across alternative credit platforms.

5. Enterprise Comparison Matrix (2026)

| Tool | Primary AI Logic | Explainability (XAI) | 2026 Compliance Alignment |
|---|---|---|---|
| Feedzai | Behavioral Biometrics | High (SHAP/LIME) | PSD3 + EU AI Act Ready |
| Kasisto | Financial LLM | Moderate | SOC2 + GDPR |
| Zest AI | Fair Lending ML | Maximum | ECOA + Fair Housing |
| Featurespace | Adaptive Analytics | High | Global AML/CTF |

This matrix reflects operational readiness — not marketing claims.

Explainable AI (XAI): The 2026 Regulatory Imperative

Regulators now require:

- Decision traceability

- Bias documentation

- Model drift reporting

- Human oversight capability

Black-box models without interpretability layers risk regulatory rejection.
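Model drift reporting, one of the requirements listed above, is often approximated with the Population Stability Index (PSI), which compares how model scores are distributed today against a baseline. The sketch below assumes the scores are already binned into proportions. The bin values are invented, and the commonly quoted bands (below 0.1 stable, above 0.25 significant shift) are rules of thumb rather than regulatory thresholds.

```python
import math


def population_stability_index(expected, actual, epsilon=1e-6):
    """PSI over pre-binned proportions: sum((a - e) * ln(a / e))."""
    if len(expected) != len(actual):
        raise ValueError("bin counts must match")
    psi = 0.0
    for e, a in zip(expected, actual):
        # Clamp empty bins so the logarithm stays defined.
        e = max(e, epsilon)
        a = max(a, epsilon)
        psi += (a - e) * math.log(a / e)
    return psi


# Invented score distributions across four bins.
baseline = [0.25, 0.25, 0.25, 0.25]
current = [0.10, 0.20, 0.30, 0.40]

psi = population_stability_index(baseline, current)
print(round(psi, 3))
```

Logged on a schedule, a figure like this gives the governance team a dated, numeric record of when a model started to move away from its validated behavior.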

Explainable AI frameworks increasingly integrate:

- SHAP value breakdowns

- Counterfactual scenario testing

- Feature importance ranking

- Automated fairness audits

Under ISO/IEC 42001, AI governance documentation is becoming a board-level responsibility.
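To show what a SHAP value breakdown looks like in practice, here is a small self-contained sketch for the simplest case: a linear model with features treated as independent, where each feature's SHAP value reduces to its weight times the distance of the feature from its baseline mean. The weights, means, and feature names are made up, and production systems would normally rely on a dedicated explainability library rather than hand-rolled code.

```python
def predict(weights, bias, x):
    """Linear model output: bias + sum of weight * feature value."""
    return bias + sum(weights[name] * x[name] for name in weights)


def linear_shap_values(weights, baseline_means, instance):
    """Per-feature attribution for a linear model with independent features:
    phi_i = w_i * (x_i - mean_i)."""
    return {
        name: weights[name] * (instance[name] - baseline_means[name])
        for name in weights
    }


# Hypothetical model and data, chosen so the arithmetic is easy to follow.
weights = {"txn_amount": 2.0, "account_age_years": -1.0}
bias = 0.5
baseline_means = {"txn_amount": 1.0, "account_age_years": 5.0}
instance = {"txn_amount": 3.0, "account_age_years": 2.0}

phi = linear_shap_values(weights, baseline_means, instance)
# txn_amount: 2.0 * (3.0 - 1.0) = 4.0; account_age_years: -1.0 * (2.0 - 5.0) = 3.0

# The attributions add up to the gap between this prediction and the baseline one.
gap = predict(weights, bias, instance) - predict(weights, bias, baseline_means)
print(phi, sum(phi.values()), gap)
```

That additive property is what makes the breakdown useful in an audit: the explanation accounts for the whole difference between this decision and the average one, with nothing left unexplained.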

Operational Deployment: Agentic Workflow Integration

The modern AI-enhanced digital bank architecture includes:

- Real-time event streaming

- Model scoring layer

- Explainability engine

- Governance dashboard

- Human override interface

- Audit storage

Agentic workflows allow AI systems to:

- Trigger fraud investigation tickets

- Recommend account freezes

- Auto-adjust risk thresholds

- Initiate AML review escalation

All without manual coordination.
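A stripped-down sketch of such a workflow is shown below. It is an illustration under assumptions, not a reference design: the action names, score cut-offs, target false positive rate, and threshold bounds are all hypothetical. The account freeze stays a recommendation that is flagged for human approval, in line with the human override interface listed above.

```python
from dataclasses import dataclass


@dataclass
class Action:
    kind: str
    account_id: str
    requires_human_approval: bool


def plan_actions(account_id, risk_score, aml_flag=False):
    """Map a risk score (0 to 1) and an AML flag to follow-up actions."""
    actions = []
    if risk_score >= 0.7:  # assumed ticketing cut-off
        actions.append(Action("open_fraud_ticket", account_id, False))
    if risk_score >= 0.9:  # assumed freeze-recommendation cut-off
        # Freezes are only recommended; a human must approve them.
        actions.append(Action("recommend_account_freeze", account_id, True))
    if aml_flag:
        actions.append(Action("escalate_aml_review", account_id, False))
    return actions


def adjust_threshold(current, false_positive_rate, target_fpr=0.02,
                     step=0.01, lower=0.5, upper=0.95):
    """Nudge the blocking threshold toward a target false positive rate,
    never leaving the governance-approved [lower, upper] band."""
    if false_positive_rate > target_fpr:
        current += step   # too many legitimate customers blocked: loosen
    elif false_positive_rate < target_fpr:
        current -= step   # room to be stricter
    return min(max(current, lower), upper)


planned = plan_actions("acct-42", risk_score=0.95, aml_flag=True)
print([a.kind for a in planned])
print(adjust_threshold(0.70, false_positive_rate=0.05))
```

Bounding the automatic adjustment is the key design choice here: the system can tune itself within limits, but it cannot drift outside the range that risk and compliance have signed off on.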

Personal Reflection from Enterprise Evaluation

During a risk modernization project in late 2025, the board initially prioritized accuracy metrics.

But the compliance officer asked a more critical question:

“Can we defend this decision to a regulator?”

That single question changed vendor selection entirely.

The winning AI solution wasn’t the most aggressive fraud detector — it was the one that provided:

- Clear explanation logs

- Bias documentation

- Governance workflow controls

- Latency under 200ms

In 2026, AI is judged not only by intelligence — but by defensibility.

The Strategic Outlook

Digital banking AI maturity is entering its third phase:

Phase 1: Automation

Phase 2: Intelligence

Phase 3 (2026+): Governed Agentic Autonomy

The tools leading this transformation share four characteristics:

- Real-time decisioning

- Explainable outputs

- Regulatory alignment

- Integration flexibility

AI in digital banking is no longer about experimentation.

It is about building intelligent, compliant, and measurable financial ecosystems.

Institutions that align AI efficiency (Rₘₛ), explainability (XAI), and compliance governance (EU AI Act + ISO/IEC 42001) will define the next era of financial infrastructure.

And those that treat AI as a feature — rather than architecture — will struggle to compete in a system increasingly optimized by machines.