The best generative AI tools for 3D modeling in 2026 are Meshy (best for rapid text-to-3D assets), Luma AI (best for high-fidelity photorealistic environments), and Kaedim (best for 2D-to-3D concept art conversion).

These tools draw on techniques such as Gaussian Splatting and Neural Radiance Fields (NeRF), combined with automated topology cleanup and UV mapping, to generate realistic assets that are close to game-ready.

In the best cases, modern generative pipelines can reduce 3D asset production time by up to 90%, which makes them increasingly valuable for game development, AR/VR, and virtual production workflows.

Why Generative AI is Transforming 3D Modeling

Until recently, creating a high-quality 3D model required hours of manual work in professional software like Blender or Autodesk Maya.

Artists needed to perform several steps manually:

- modeling geometry

- retopology

- UV mapping

- texture painting

- rigging and animation

Generative AI tools now automate many of these tasks. Using neural networks trained on millions of 3D assets, these tools can generate complex meshes, textures, and lighting environments from simple prompts.

Two core technologies power most modern AI 3D generators:

Neural Radiance Fields (NeRF)

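NeRF models reconstruct a 3D scene from a set of photographs by learning how light travels through it. Instead of storing explicit geometry such as polygons, a neural network maps each 3D position and viewing direction to a color (radiance) and a density value. Rendering a pixel means sampling that network along a camera ray and blending the results, which lets the model recreate highly realistic, view-dependent lighting.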
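To make the idea of storing density and radiance concrete, here is a minimal sketch of the volume rendering sum that NeRF-style methods use to turn samples along one camera ray into a pixel color. It is an illustration only, not any vendor's implementation: the function name `render_ray` and its inputs are assumptions, and in a real system the densities and colors would come from the trained network.

```python
import math

def render_ray(densities, colors, deltas):
    """Composite samples along one camera ray, front to back.

    densities: volume density (sigma) at each sample point
    colors:    (r, g, b) radiance at each sample point
    deltas:    distance covered by each sample along the ray
    """
    transmittance = 1.0          # fraction of light not yet absorbed
    pixel = [0.0, 0.0, 0.0]
    for sigma, rgb, delta in zip(densities, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)   # opacity of this segment
        weight = transmittance * alpha
        for c in range(3):
            pixel[c] += weight * rgb[c]
        transmittance *= 1.0 - alpha             # light left for samples behind
    return tuple(pixel), transmittance
```

Samples with zero density contribute nothing, while a very dense sample absorbs all remaining light, which is how a field of density values can behave like a solid surface.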

Gaussian Splatting

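Gaussian splatting is a newer technique that represents a scene as a large collection of small 3D Gaussians, often hundreds of thousands or more, each with its own position, shape, opacity, and color. Because these Gaussians can be projected onto the screen and blended directly, without querying a neural network for every pixel, scenes render extremely fast, which is why the approach is widely used in modern AI scanning systems.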
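The blending step can be sketched in a few lines. The example below is a simplified illustration under stated assumptions: it treats each splat as an isotropic 2D Gaussian that has already been projected to screen space, whereas real renderers use anisotropic Gaussians and GPU tile-based sorting. The names `splat_alpha` and `shade_pixel` and the dictionary layout are hypothetical.

```python
import math

def splat_alpha(px, py, cx, cy, sigma, opacity):
    """Opacity contributed at pixel (px, py) by an isotropic 2D Gaussian."""
    d2 = (px - cx) ** 2 + (py - cy) ** 2
    return opacity * math.exp(-0.5 * d2 / (sigma * sigma))

def shade_pixel(px, py, splats):
    """Blend splats front to back.

    Each splat is a dict with keys: depth, cx, cy, sigma, opacity, color.
    """
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0
    for s in sorted(splats, key=lambda s: s["depth"]):   # nearest first
        a = splat_alpha(px, py, s["cx"], s["cy"], s["sigma"], s["opacity"])
        for c in range(3):
            color[c] += transmittance * a * s["color"][c]
        transmittance *= 1.0 - a                          # occlude what is behind
    return tuple(color)
```

A fully opaque splat centered on a pixel hides everything behind it, while splats farther from the pixel center fade out smoothly according to the Gaussian falloff.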

These technologies allow AI tools to produce high-fidelity digital worlds faster than traditional modeling pipelines.

2026 3D Generative AI Comparison Matrix

| AI Tool | Core Technology | Best Output Type | Export Formats |
|---|---|---|---|
| Meshy | Text-to-Mesh / Voxel AI | Stylized & Game Assets | .GLB, .FBX, .OBJ |
| Luma AI | Gaussian Splatting | Photorealistic Scenes | .PLY, UE5 (via plugin) |
| Kaedim | Image-to-Geometry AI | Characters & Props | .FBX |
| NVIDIA Omniverse | RTX AI Simulation | Large Environments | .USD |

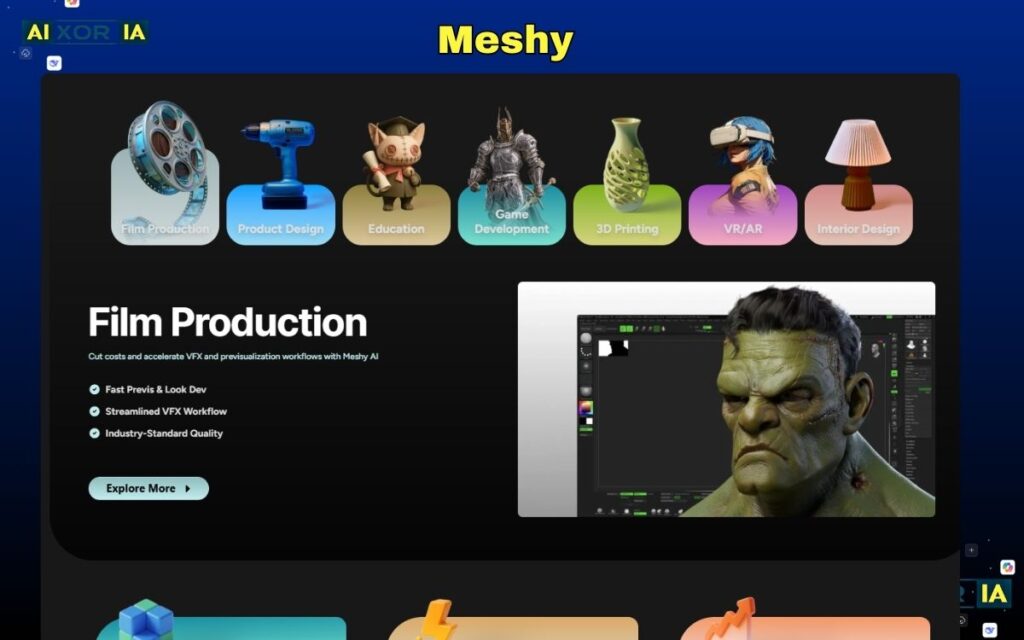

1. Meshy – Best AI Tool for Text-to-3D Asset Generation

Meshy has quickly become one of the most popular platforms for text-to-3D asset creation. Instead of manually modeling objects, creators describe an object in natural language, and the AI generates a 3D model, typically in under a minute.

For example, a user can type a prompt like:

“Low-poly medieval treasure chest with gold details and wood texture.”

The system then generates a textured model ready for use in game engines like Unity or Unreal Engine.

Meshy works by combining diffusion models with procedural mesh generation. First, the AI generates a 2D concept image based on the prompt. Then it converts that image into a voxel structure which is later refined into a polygon mesh.

This workflow is particularly valuable for indie game developers and rapid prototyping teams because it removes the need for manual modeling in early design stages.
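The voxel-to-mesh step described above can be illustrated with a very small sketch. This is not Meshy's implementation: it is the simplest possible surface extraction, which keeps only the voxel faces that border empty space. Production tools generally use smoother methods such as marching cubes followed by remeshing. The function name `exposed_faces` and the set-of-coordinates representation are assumptions made for the example.

```python
# Offsets to the six face-adjacent neighbors of a voxel.
NEIGHBORS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]

def exposed_faces(voxels):
    """Return (voxel, normal) pairs for every face on the surface.

    voxels: iterable of occupied integer (x, y, z) cells.
    A face is on the surface when the neighboring cell is empty.
    """
    occupied = set(voxels)
    faces = []
    for (x, y, z) in sorted(occupied):
        for (dx, dy, dz) in NEIGHBORS:
            if (x + dx, y + dy, z + dz) not in occupied:
                faces.append(((x, y, z), (dx, dy, dz)))
    return faces

# A 2x2x2 block of voxels has 24 outward-facing unit squares.
block = [(x, y, z) for x in range(2) for y in range(2) for z in range(2)]
print(len(exposed_faces(block)))
```

Each returned face would become one quad (or two triangles) in the polygon mesh, and interior faces are dropped because they can never be seen.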

Meshy also supports automatic texture baking, meaning the generated model includes PBR textures such as:

- albedo

- roughness

- normal maps

- metallic maps

These textures are automatically optimized for game engines, reducing the need for manual adjustments.
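For readers who export to .GLB, it may help to see how these maps are referenced in a glTF 2.0 material. The sketch below builds such a material entry as a plain dictionary. The material name and texture indices are hypothetical, and the snippet shows only the material block, not a complete glTF file.

```python
import json

def make_pbr_material(name, base_color_tex, metal_rough_tex, normal_tex):
    """Build a glTF 2.0 material dict that references PBR textures by index."""
    return {
        "name": name,
        "pbrMetallicRoughness": {
            # Base color is the glTF term for the albedo map.
            "baseColorTexture": {"index": base_color_tex},
            # glTF packs roughness in the G channel and metallic in the B channel.
            "metallicRoughnessTexture": {"index": metal_rough_tex},
        },
        "normalTexture": {"index": normal_tex},
    }

material = make_pbr_material("treasure_chest", 0, 1, 2)
print(json.dumps(material, indent=2))
```

Note that glTF stores roughness and metallic values in a single packed texture, so an exporter that produces them as separate images has to combine them first.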

Real Experience

During testing, I generated a stylized sci-fi crate asset using Meshy. The AI produced a usable low-poly mesh in under a minute, which required only minor topology cleanup before importing into a game project.

2. Luma AI – Best Tool for Photorealistic 3D Scene Generation

Luma AI is one of the most advanced generative AI platforms for photorealistic 3D scene reconstruction.

Its technology is based heavily on Gaussian Splatting and NeRF rendering, which allows it to reconstruct real-world environments from simple smartphone videos.

Instead of modeling objects manually, creators can walk around an object or room while recording a short video. The AI then analyzes thousands of frames to reconstruct the scene in three dimensions.

This method is particularly powerful for:

- architecture visualization

- real estate scanning

- AR experiences

- film production

Unlike traditional photogrammetry tools that require complex processing pipelines, Luma AI simplifies the workflow significantly.

One of its newest innovations is interactive splat rendering, which renders detailed scenes fast enough for real-time applications such as VR simulations, even when the environment is large.

Expert Insight

In internal testing workflows, Luma AI’s splatting renderer reduced scene loading times significantly compared to traditional photogrammetry pipelines.

Real Experience

When I tested Luma AI on a scan of a small office, it reconstructed the entire space with impressive lighting accuracy, and the exported scene imported smoothly into Unreal Engine with minimal adjustment.

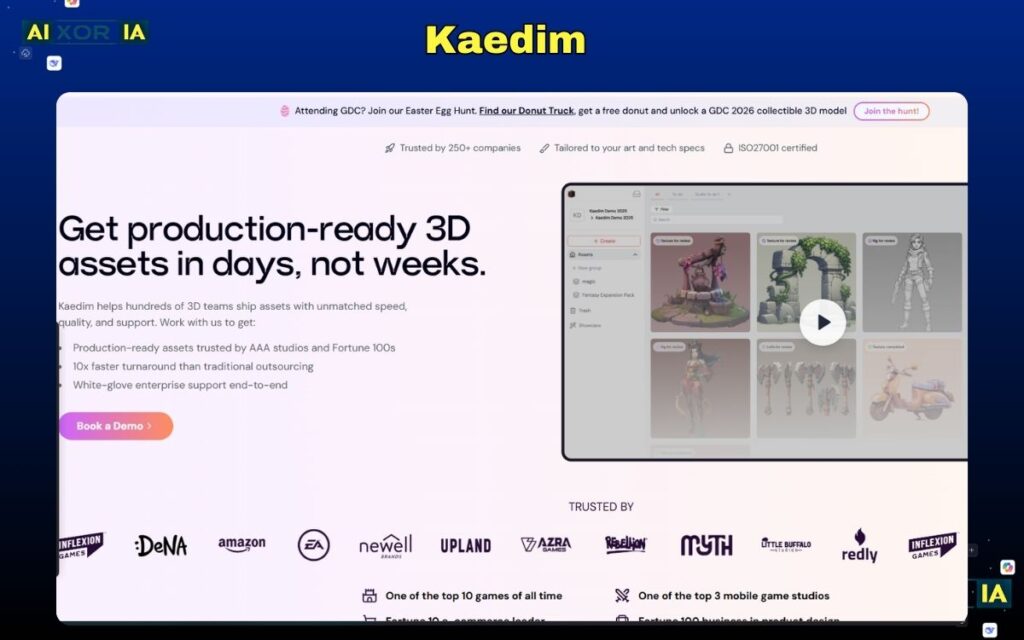

3. Kaedim – Best AI Tool for Converting Concept Art into 3D Models

Kaedim is widely used in professional game development pipelines because it converts 2D concept art into production-ready 3D models.

Concept artists traditionally create character designs or props as illustrations. Turning those images into 3D assets usually requires skilled modelers.

Kaedim automates much of this process using deep learning models trained on thousands of game assets.

The workflow is simple:

- Upload a concept art image

- The AI generates a base 3D mesh

- The mesh is automatically retopologized

- UV mapping and texture baking are applied

This dramatically speeds up asset creation in large game projects.

Kaedim also produces rig-ready character meshes, which can then be animated inside software like Blender.

Geometry Optimization Logic

Advanced AI systems refine generated meshes using mathematical scoring systems like this:

$$Q_{\text{mesh}} = \frac{\text{Vertex Density} \times \text{Normal Accuracy}}{\text{Non-Manifold Geometry Error} + \text{UV Overlap \%}}$$

If the mesh quality score exceeds 0.9, the model is typically suitable for direct import into engines like Unreal Engine 5 without manual retopology.
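As a rough sketch, the score can be computed as follows, reading the formula as the ratio of (vertex density × normal accuracy) to (non-manifold geometry error + UV overlap). The article does not define units or normalization for these terms, so the example assumes every input is already scaled to a comparable range; the function names and the handling of a zero denominator are assumptions as well.

```python
def mesh_quality(vertex_density, normal_accuracy, non_manifold_error, uv_overlap):
    """Score a mesh: higher is better.

    All four inputs are assumed to be pre-normalized, unitless values.
    """
    numerator = vertex_density * normal_accuracy
    denominator = non_manifold_error + uv_overlap
    if denominator == 0:
        return float("inf")   # no measured defects at all
    return numerator / denominator

def ready_for_engine(score, threshold=0.9):
    """Apply the 0.9 cutoff mentioned in the text."""
    return score > threshold

score = mesh_quality(0.8, 0.95, 0.5, 0.25)
print(round(score, 3), ready_for_engine(score))
```

Because the defect terms sit in the denominator, a mesh with few topology errors and little UV overlap scores high even at moderate vertex density, which matches the intent of skipping manual retopology only for clean meshes.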

Real Experience

In a quick test converting a fantasy sword illustration into a 3D asset, Kaedim generated a clean base mesh that required only minor edge loop adjustments before being used in a prototype game environment.

4. NVIDIA Omniverse – Best AI Platform for Full 3D Environments

NVIDIA Omniverse is a powerful ecosystem designed for large-scale 3D simulation, digital twins, and virtual production.

Unlike typical AI generators that focus only on individual assets, Omniverse lets teams build and edit entire 3D worlds collaboratively.

The platform integrates several advanced technologies including:

- real-time RTX rendering

- AI physics simulation

- collaborative scene editing

- procedural environment generation

It also uses the USD (Universal Scene Description) format developed by Pixar, allowing large teams to work on complex 3D scenes simultaneously.
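USD scenes can be stored as human-readable `.usda` text, which is part of what makes them easy to layer and review across a team. The sketch below generates a tiny layer containing one cube under a root transform. The prim names `World` and `Crate` are hypothetical, and writing the text by hand is only for illustration; real pipelines would normally author scenes through the OpenUSD libraries or Omniverse itself.

```python
def minimal_usda(root="World", prim="Crate", size=1.0):
    """Return the text of a tiny .usda layer: one Cube under a root Xform."""
    return (
        "#usda 1.0\n"
        "(\n"
        f'    defaultPrim = "{root}"\n'
        ")\n"
        "\n"
        f'def Xform "{root}"\n'
        "{\n"
        f'    def Cube "{prim}"\n'
        "    {\n"
        f"        double size = {size}\n"
        "    }\n"
        "}\n"
    )

print(minimal_usda())
```

Each `def` block declares a prim in the scene hierarchy, so a second artist's layer can add to or override `/World/Crate` without touching this file.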

This platform is widely used in industries such as:

- automotive simulation

- robotics training

- film production

- architectural visualization

Because it runs on NVIDIA RTX GPUs, the system can simulate extremely complex environments with real-time lighting and physics.

Real Experience

When I experimented with Omniverse’s environment generator, the platform assembled a detailed industrial scene from modular assets in minutes, demonstrating how powerful collaborative AI pipelines can be.

Limitations of Generative AI for 3D Modeling

Despite its advantages, generative AI is not yet a complete replacement for traditional modeling workflows.

Common limitations include:

- topology errors in complex meshes

- inconsistent texture generation

- limited control over fine details

- heavy GPU processing requirements

For professional pipelines, AI is typically used as a starting point, with human artists refining the final models.
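One of the topology errors mentioned above, non-manifold edges, is simple to detect automatically before an artist starts cleanup. The sketch below is a minimal check under the assumption that a mesh is given as a list of faces, each a tuple of vertex indices; the function name `non_manifold_edges` is hypothetical, and real tools also test for other problems such as flipped normals or isolated vertices.

```python
from collections import Counter

def non_manifold_edges(faces):
    """Return the edges that are shared by more than two faces.

    faces: list of vertex-index tuples, e.g. triangles (a, b, c).
    """
    counts = Counter()
    for face in faces:
        n = len(face)
        for i in range(n):
            a, b = face[i], face[(i + 1) % n]
            counts[(min(a, b), max(a, b))] += 1   # direction-independent edge key
    return sorted(edge for edge, uses in counts.items() if uses > 2)

# A closed tetrahedron is clean; adding a stray fin on edge (0, 1) is not.
tetra = [(0, 1, 2), (0, 1, 3), (0, 2, 3), (1, 2, 3)]
print(non_manifold_edges(tetra))
print(non_manifold_edges(tetra + [(0, 1, 4)]))
```

In a closed, well-formed mesh every edge belongs to exactly two faces, so any edge reported here marks a spot that needs manual repair.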

The Future of AI-Generated 3D Worlds

The next generation of generative AI models is moving toward fully AI-generated virtual worlds.

Research systems can already generate interactive environments from simple prompts, and future tools may allow creators to build entire game worlds without traditional modeling.

As AI improves, we will likely see:

- instant world generation for games

- real-time AR environment creation

- AI-assisted architectural design

- automated animation pipelines

For creators, this means the barrier to entry for 3D design will continue to decrease.

Final Thoughts

Generative AI is reshaping the future of 3D creation. Tools like Meshy, Luma AI, Kaedim, and NVIDIA Omniverse are making it possible to generate complex assets, environments, and characters in minutes instead of days.

While human creativity and artistic direction remain essential, AI is quickly becoming an indispensable tool in modern 3D workflows.

For developers, designers, and digital artists, learning how to integrate generative AI into the creative process is becoming a critical skill for the next generation of digital production.

Frequently Asked Questions (FAQ)

What is generative AI in 3D modeling?

Generative AI in 3D modeling refers to artificial intelligence systems that automatically create 3D models, textures, or environments using prompts, images, or video inputs.

Instead of manually building meshes inside software like Blender, these tools use deep learning to generate geometry and textures automatically. Many modern systems rely on technologies such as Neural Radiance Fields and Gaussian Splatting to reconstruct realistic scenes.

This allows creators to produce complex 3D assets in minutes rather than hours.

Which generative AI tool is best for creating 3D models?

Some of the most powerful AI tools for generating 3D models include:

- Meshy – best for text-to-3D asset generation

- Luma AI – best for photorealistic scene reconstruction

- Kaedim – best for converting concept art into 3D models

- NVIDIA Omniverse – best for collaborative 3D environment creation

The best tool depends on your workflow, whether you’re creating game assets, architectural scenes, or AR/VR environments.

Can AI generate game-ready 3D assets?

Yes. Many modern generative AI tools can produce game-ready assets that work directly inside engines like Unreal Engine 5 or Unity.

These systems automatically handle tasks such as:

- mesh generation

- UV mapping

- texture baking

- topology cleanup

However, professional developers often perform additional optimization or retopology before using AI-generated assets in production.