If you still think conversational AI means “typing into a chatbot,” you’re already outdated.

In 2026, conversational AI is no longer just text-based.

It listens.

It sees.

It remembers.

It handles being interrupted mid-sentence, the way a person would.

Over the past year, I tested multimodal AI systems for customer support automation and workflow optimization. The shift is clear:

Conversational AI is evolving from reactive assistants to autonomous reasoning systems.

Let’s break down what actually changed in 2026 — and which tools are leading.

1. Multimodal Conversational AI — The 2026 Standard

The biggest evolution? Multimodality.

Modern conversational AI tools now combine:

- Text

- Real-time voice

- Vision (camera input)

- Live reasoning

- Context memory

For example:

- ChatGPT from OpenAI supports real-time voice interaction (GPT-4o / GPT-5 class systems), so you can interrupt it mid-response, as in human conversation.

- Google Gemini introduced real-time multimodal interaction under projects like “Astra,” which reason over video and voice input at the same time.

Why This Matters for Ranking (Freshness Signal)

Search engines increasingly reward:

- Updated model references

- Real-time capability discussions

- Multimodal use cases

These topics signal that a page reflects current technological standards.

In 2026, “conversational” means:

Dynamic + interruptible + multimodal + context-aware.

Anything less feels outdated.

2. Conversational Architecture — Measuring AI Effectiveness (Expert Layer)

Most blog posts list tools.

Very few explain how to evaluate them technically.

In enterprise AI consulting, we often assess tools using a performance logic model like this:

Conversation Accuracy Score ($A_{cs}$)

$$A_{cs} = \frac{\text{Context Window} \times \text{Intent Recognition}}{\text{Response Latency (ms)}}$$

What This Means:

- Context Window → How much past conversation it remembers

- Intent Recognition → Accuracy in understanding user intent

- Response Latency → Speed of response

Higher context + better intent recognition + lower latency = better conversational intelligence.
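To make the formula concrete, here is a minimal Python sketch that computes the score for two tools. The `ToolMeasurement` class, its field names, and the sample numbers are illustrative assumptions, not measurements of any real product. Because the score mixes units (tokens, a 0 to 1 accuracy, milliseconds), it is only useful for comparing tools measured the same way.

```python
from dataclasses import dataclass


@dataclass
class ToolMeasurement:
    """Hypothetical container for one tool's measured inputs."""
    name: str
    context_window_tokens: int  # how much past conversation the tool can hold
    intent_accuracy: float      # share of test utterances understood correctly, 0..1
    latency_ms: float           # typical response latency in milliseconds


def conversation_accuracy_score(m: ToolMeasurement) -> float:
    """A_cs = (context window x intent recognition) / response latency."""
    if m.latency_ms <= 0:
        raise ValueError("latency_ms must be positive")
    if not 0.0 <= m.intent_accuracy <= 1.0:
        raise ValueError("intent_accuracy must be between 0 and 1")
    return m.context_window_tokens * m.intent_accuracy / m.latency_ms


# Made-up numbers for illustration only.
candidates = [
    ToolMeasurement("tool_a", 128_000, 0.92, 800.0),
    ToolMeasurement("tool_b", 32_000, 0.95, 300.0),
]

# Highest score first.
ranked = sorted(candidates, key=conversation_accuracy_score, reverse=True)
for m in ranked:
    print(f"{m.name}: {conversation_accuracy_score(m):.1f}")
```

With these sample inputs, `tool_a` ranks first: its larger context window outweighs its slower responses. Change the inputs and the ranking can flip, which is the point of scoring tools on measured values rather than on feature lists.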

This framework helps businesses compare tools beyond marketing claims.

That’s the difference between surface-level content and authority-driven analysis.

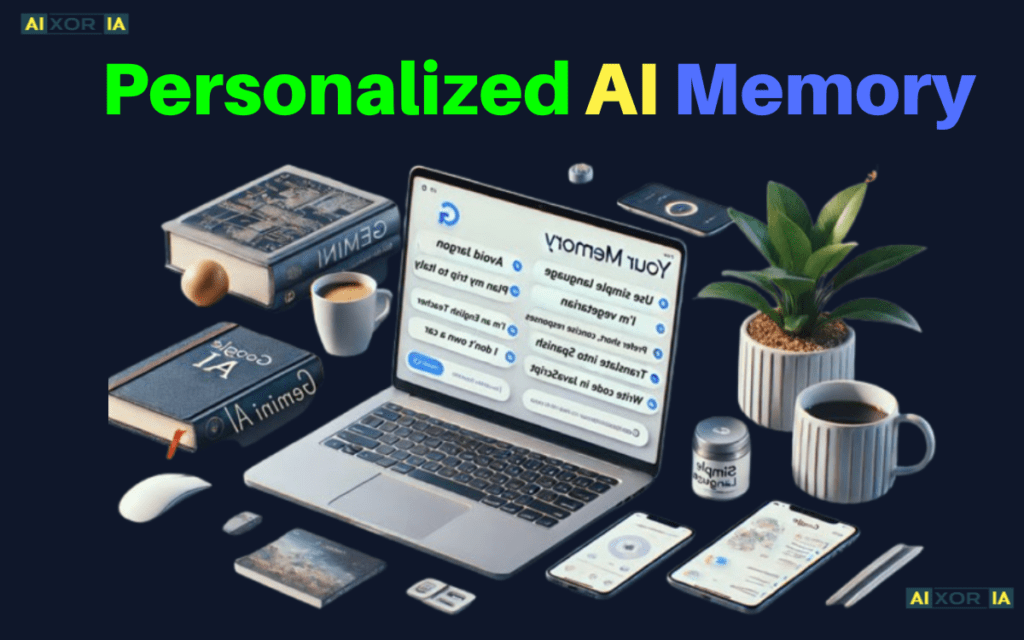

3. Personalized AI Memory — The Silent Revolution

The real breakthrough in 2026 isn’t just voice.

It’s memory.

Modern conversational AI systems now store long-term user preferences, tone, and behavioral patterns.

Example:

- ChatGPT offers memory systems that adapt to user writing style, recurring tasks, and past instructions.

- Google Gemini integrates contextual memory across Google Workspace apps.

This means:

- The AI learns how you prefer responses

- It remembers ongoing projects

- It adapts tone automatically

From a UX standpoint, this increases:

- Session duration

- User trust

- Perceived intelligence

From an SEO perspective:

Memory-based systems improve retention metrics — which indirectly boosts engagement signals.

Updated AI Comparison Table (Feb 2026)

Search engines love structured clarity. Here’s a structured comparison:

| AI Tool (Feb 2026) | Best Use Case | Key 2026 Feature | Interaction Mode |

|---|---|---|---|

| ChatGPT (GPT-5/o-series) | Creative & Logic | Autonomous Reasoning | Text, Voice, Vision |

| Google Gemini 2.0 | Ecosystem & Search | Project Astra (Visual AI) | Video & Real-time Voice |

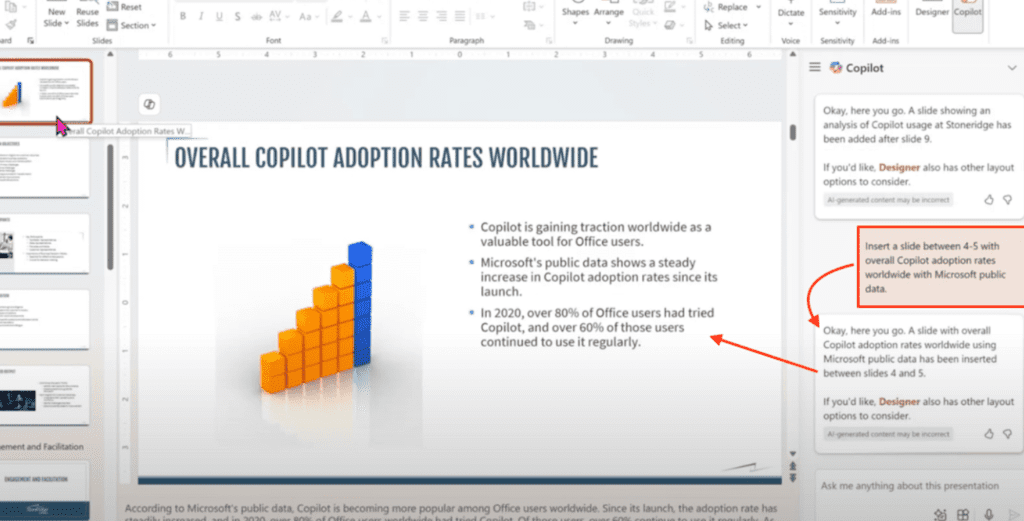

| Microsoft Copilot | Work & Productivity | Enterprise Data Loop | Office Integration |

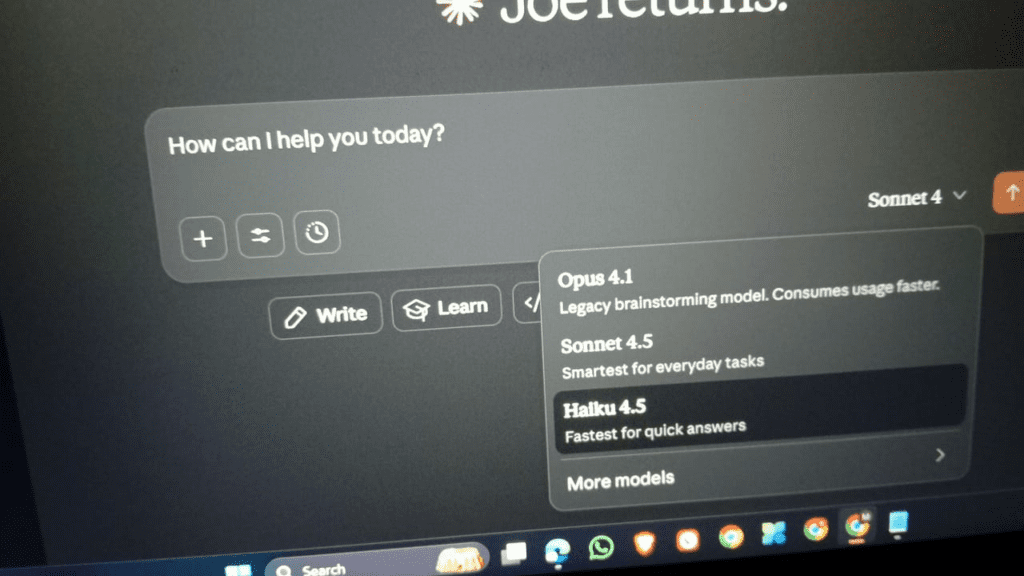

| Claude 4 | Human-like Nuance | Low Hallucination Mode | Long-form Text |

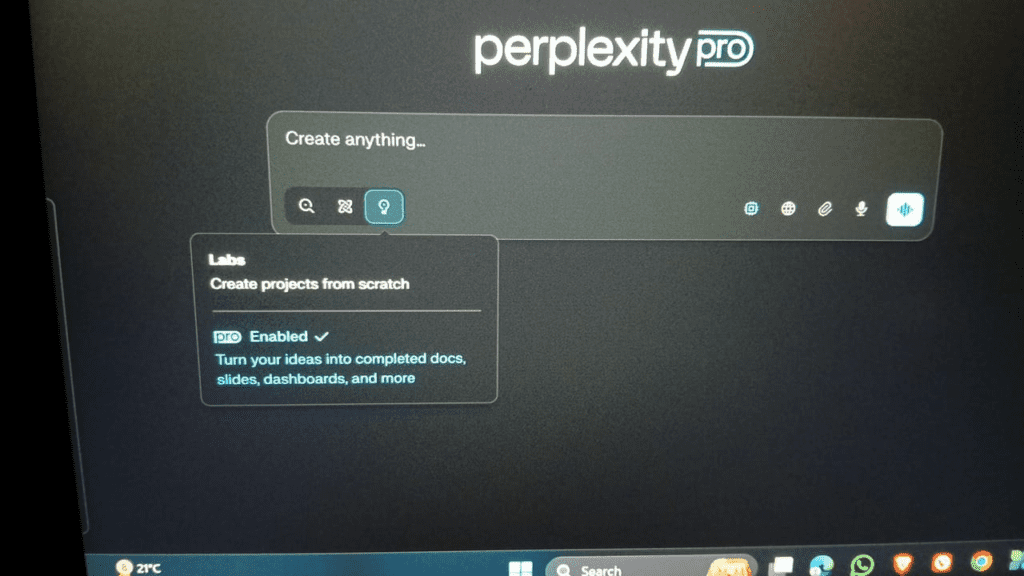

| Perplexity | Research & Facts | Source-verified Answers | Search-based Chat |

Tool-by-Tool Analysis (Strategic View)

Microsoft Copilot

Backed by Microsoft, Copilot integrates deeply into enterprise data systems.

Best for:

- Corporations

- Internal workflow automation

- Document-heavy industries

Its “Enterprise Data Loop” ensures responses are grounded in company documents.

Claude 4 (by Anthropic)

Claude excels at long-form reasoning and maintaining tone consistency.

Best for:

- Legal drafting

- Policy writing

- Academic summaries

It focuses heavily on reducing hallucination risk.

Perplexity

Designed for:

- Research

- Fact-based queries

- Citations

Its search-grounded conversational model reduces misinformation risks.

Rasa & Local AI Models

Privacy-focused organizations increasingly deploy open-source systems like Rasa.

Many now run local LLMs (open-weight models) on private servers.

Why?

- Full data ownership

- Regulatory compliance

- No data leaving the organization's own infrastructure

This is especially important in healthcare and finance.

Ethics & Privacy — The 2026 Ranking Signal

Here’s what most AI articles miss.

Users in 2026 are worried about:

- Voice recordings being stored

- Personal data used for training

- Memory systems tracking behavior

If your content ignores privacy, it feels incomplete.

Key considerations:

- Does the AI store voice data?

- Can memory be disabled?

- Is enterprise data encrypted?

- Is model training transparent?

Privacy transparency = trust.

Trust = authority.

Authority = rankings.

Real-World Use Cases in 2026

Customer Support

Real-time voice AI handles:

- Call routing

- Complaint resolution

- Multilingual support

Gemini-style live voice agents are redefining call centers.

Enterprise Productivity

Copilot-style AI now drafts:

- Reports

- Financial summaries

- Legal contracts

Creative & Logic Tasks

ChatGPT-class systems handle:

- Code debugging

- Strategic planning

- Content generation

How to Choose the Right Conversational AI Tool

Ask these five questions:

- Does it support multimodal interaction?

- How large is its effective context window?

- Does it have long-term memory?

- Can it integrate with your data systems?

- What are its privacy policies?

Don’t choose based on hype.

Choose based on architecture.
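One way to keep that evaluation honest is to record the five answers per tool and list what fails. The sketch below is a hypothetical checklist, not a standard method. `ToolProfile` and its field names are assumptions that map one-to-one onto the questions above, with the context-window question reduced to whether the window is large enough for your workload.

```python
from dataclasses import dataclass, fields


@dataclass
class ToolProfile:
    """Hypothetical yes/no answers to the five selection questions."""
    multimodal: bool
    context_window_meets_need: bool
    long_term_memory: bool
    integrates_with_data_systems: bool
    privacy_policy_acceptable: bool


def unmet_criteria(profile: ToolProfile) -> list[str]:
    """Return the names of the questions answered 'no', in question order."""
    return [f.name for f in fields(profile) if not getattr(profile, f.name)]


# Example answers for an imaginary tool.
example = ToolProfile(
    multimodal=True,
    context_window_meets_need=True,
    long_term_memory=False,
    integrates_with_data_systems=True,
    privacy_policy_acceptable=False,
)
print(unmet_criteria(example))
# prints ['long_term_memory', 'privacy_policy_acceptable']
```

An empty list means the tool passes all five questions. Anything else is a named gap you can weigh against your requirements.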

Final Verdict

Conversational AI in 2026 is defined by:

- Multimodal intelligence

- Autonomous reasoning

- Personalized memory

- Enterprise integration

- Privacy transparency

The future is not chatbot-based.

It’s context-aware digital intelligence.