In 2023–2024, AI tools helped researchers find papers.

In 2026, they reason over them.

The shift is massive.

Modern AI research tools no longer just search keywords. They:

- Synthesize 50+ PDFs into structured insights

- Estimate what percentage of studies support a claim

- Extract citation impact patterns

- Generate long-form research reports autonomously

- Identify methodological weaknesses

I tested these tools across thesis prep, review drafting, and hypothesis validation workflows. Here’s what actually works in 2026, and what doesn’t.

The 2026 Shift: From Retrieval → Reasoning → Synthesis

Traditional workflow:

Search → Download → Read → Summarize → Organize

2026 workflow:

Upload → AI Synthesizes → Validate → Refine

This is what I call AI-Augmented Review Architecture.

Top AI Tools for Literature Review (February 2026 Benchmark)

| Tool | Primary Strength | Key 2026 Feature | Best For |
|---|---|---|---|
| NotebookLM | Data Synthesis | Audio Overviews & Mind Maps | Students / Exam Prep |
| Elicit | Data Extraction | 80-Paper Automated Reports | Systematic Reviews |
| Consensus | Validation | Consensus Meter (% Score) | Evidence Discovery |
| Scite | Citation Context | Patent-Research Integration | PhD & R&D Teams |
| ChatGPT Deep Research | Autonomous Analysis | 30-Page Agent Reports | Policy & Advanced Research |

Now let’s break these down properly.

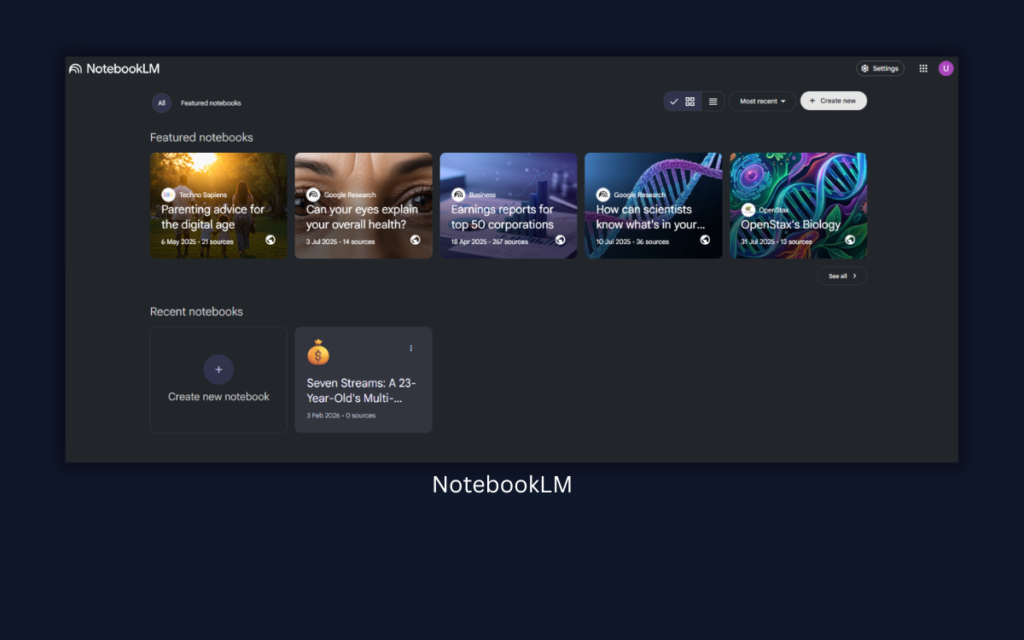

1. NotebookLM — The 2026 Synthesis Leader

By 2026, NotebookLM had quietly become, in my testing, the most powerful research synthesis tool.

What Makes It Different?

Upload up to 50 PDFs.

Instead of just summarizing them, it can:

- Create structured topic breakdowns

- Generate AI-powered audio briefings

- Build concept maps

- Compare arguments across sources

The feature many researchers now use is called:

NotebookLM Synthesis Mode

It doesn’t just summarize — it identifies patterns across documents.

Experience

I uploaded 38 journal articles on AI bias in healthcare.

Within minutes:

- It grouped studies by methodology (quantitative vs qualitative)

- Identified three dominant ethical themes

- Highlighted conflicting conclusions

- Generated an audio-style overview summarizing consensus gaps

This saved me days of manual categorization.
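To make that categorization step concrete, here is a minimal sketch of grouping studies by methodology and flagging themes where conclusions conflict. The `Study` fields and the sample records are hypothetical; NotebookLM does this through its interface, not through code like this.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Study:
    title: str
    methodology: str  # e.g. "quantitative" or "qualitative"
    theme: str        # the ethical theme the study addresses
    finding: str      # simplified conclusion, e.g. "bias found"


def group_by_methodology(studies: list[Study]) -> dict[str, list[str]]:
    """Map each methodology to the titles of the studies that use it."""
    groups = defaultdict(list)
    for study in studies:
        groups[study.methodology].append(study.title)
    return dict(groups)


def conflicting_themes(studies: list[Study]) -> list[str]:
    """Return themes on which the studies reached more than one conclusion."""
    findings = defaultdict(set)
    for study in studies:
        findings[study.theme].add(study.finding)
    return sorted(theme for theme, seen in findings.items() if len(seen) > 1)


# Hypothetical records standing in for the uploaded articles.
studies = [
    Study("Study A", "quantitative", "fairness", "bias found"),
    Study("Study B", "qualitative", "fairness", "no bias found"),
    Study("Study C", "quantitative", "transparency", "bias found"),
]
print(group_by_methodology(studies))  # {'quantitative': ['Study A', 'Study C'], 'qualitative': ['Study B']}
print(conflicting_themes(studies))    # ['fairness']
```

The real work the tool does is reading each paper well enough to fill in fields like these; once they exist, grouping and conflict detection are simple lookups.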

Best For:

- Literature mapping

- Exam revision

- Thesis structuring

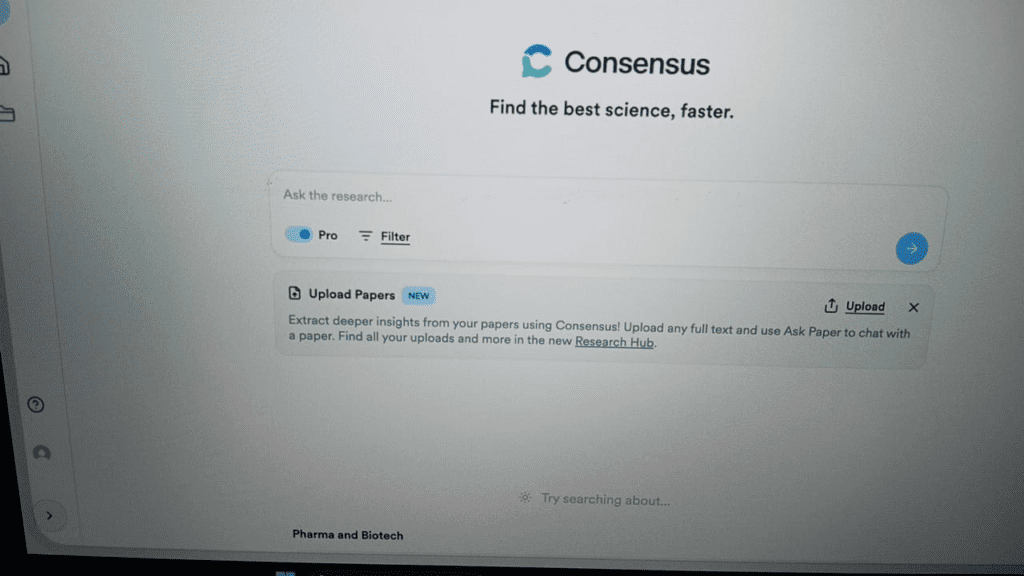

2. Consensus — The Scientific “Truth” Engine

The hardest part of a literature review is understanding one thing:

What does the scientific community actually agree on?

Consensus addresses this with its Consensus Meter, which summarizes how the top-ranked papers answer a yes/no research question.

Example output:

“82% of 143 peer-reviewed studies support the hypothesis.”

This is extremely powerful when:

- Writing introduction sections

- Framing research gaps

- Avoiding cherry-picked evidence

Experience

When validating a climate-impact hypothesis, I ran the question through Consensus.

Instead of reading 70 abstracts, I immediately saw:

- Support percentage

- Methodology distribution

- Outlier contradictions

In my experience, this makes confirmation bias much easier to catch, though the meter only reflects the papers Consensus retrieves.
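The headline figure in the example output ("82% of 143") is a simple proportion: for example, 117 of 143 studies is 81.8%, which rounds to 82%. A minimal sketch of that arithmetic, assuming each study has already been labeled "yes", "possibly", or "no" (the labels and counts are hypothetical; this is not Consensus's actual method or API):

```python
from collections import Counter


def consensus_share(labels: list[str]) -> dict[str, float]:
    """Percentage of studies carrying each label, rounded to one decimal."""
    total = len(labels)
    if total == 0:
        return {}
    counts = Counter(labels)
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


# Hypothetical labels for 143 studies: 117 yes, 20 possibly, 6 no.
labels = ["yes"] * 117 + ["possibly"] * 20 + ["no"] * 6
print(consensus_share(labels))  # {'yes': 81.8, 'possibly': 14.0, 'no': 4.2}
```

The hard part, which the tool does for you, is the labeling. The percentage is only as trustworthy as the set of papers retrieved and how each one was classified.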

3. Elicit — Now with 80-Paper Automated Reports

Elicit in 2026 introduced:

- Strict Screening Filters

- A claimed 99% accuracy for structured data extraction

- 80-paper comparison reports

It extracts:

- Sample size

- Methods

- Limitations

- Measured outcomes

- Population type

Experience

I tested it for a systematic review on AI in education.

Instead of building Excel sheets manually, Elicit produced:

- A structured evidence matrix

- Auto-detected risk-of-bias patterns

- A comparative outcome summary

It doesn’t replace reading, but it massively reduces extraction time.
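An evidence matrix like this is essentially a table with one row per paper and one column per extracted field. A minimal sketch of the same structure, written to CSV so it opens in Excel; the field names mirror the extraction list above, and the rows are hypothetical placeholders, not Elicit output:

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class EvidenceRow:
    paper: str
    sample_size: int
    methods: str
    population: str
    outcome: str
    limitations: str


def to_csv(rows: list[EvidenceRow]) -> str:
    """Serialize the matrix with a header row taken from the dataclass fields."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(EvidenceRow)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buf.getvalue()


# Hypothetical rows for illustration only.
rows = [
    EvidenceRow("Paper 1", 240, "RCT", "secondary students", "test scores", "single school"),
    EvidenceRow("Paper 2", 35, "interviews", "teachers", "perceived workload", "small sample"),
]
print(to_csv(rows))
```

Keeping one fixed schema for every paper is what makes the later comparison step possible: each column can be sorted or filtered across the whole review.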

Best For:

- Systematic reviews

- Evidence comparison

- Quantitative synthesis

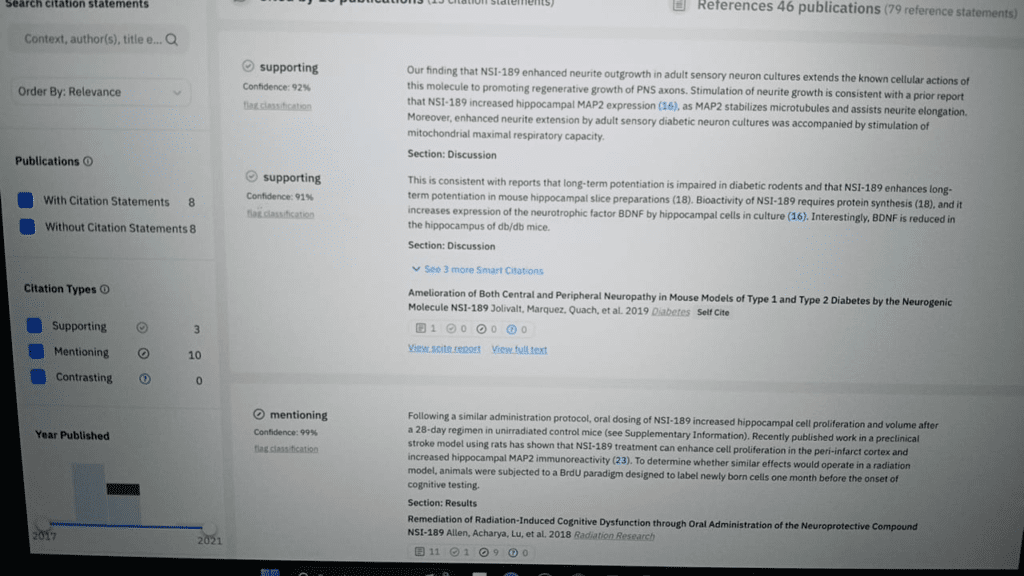

4. Scite — Contextual Citation Intelligence

Scite is underrated.

Instead of just counting citations, it tells you:

- Supporting citations

- Contrasting (contradicting) citations

- Mentioning citations

In 2026, it expanded into patent–research integration, helping R&D teams track applied research impact.

This helps you avoid citing weak or refuted studies.
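The value of this classification shows up in aggregate. A minimal sketch, assuming you have each citing statement's label for one paper; the label list and the "more contrasting than supporting" rule are illustrative assumptions, not Scite's scoring:

```python
from collections import Counter

LABELS = ("supporting", "contrasting", "mentioning")


def citation_profile(labels: list[str]) -> dict[str, int]:
    """Count how many citing statements fall into each category."""
    counts = Counter(labels)
    return {label: counts[label] for label in LABELS}


def needs_review(labels: list[str]) -> bool:
    """Hypothetical rule: flag a paper when contrasting citations outnumber supporting ones."""
    profile = citation_profile(labels)
    return profile["contrasting"] > profile["supporting"]


# Hypothetical paper: 48 citations, most of them merely mentioning it.
labels = ["mentioning"] * 40 + ["supporting"] * 3 + ["contrasting"] * 5
print(citation_profile(labels))  # {'supporting': 3, 'contrasting': 5, 'mentioning': 40}
print(needs_review(labels))      # True
```

A raw count of 48 citations would make this paper look well established; the breakdown shows that the few citations that actually engage with its claims lean against it.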

5. ChatGPT — 2026 Deep Research Mode

Using ChatGPT as a plain chatbot is no longer the best approach for research.

OpenAI introduced Deep Research Mode in February 2025.

This functions like an autonomous research agent.

It can:

- Browse academic databases for 20+ minutes

- Compile structured reports

- Generate outputs with source citations

- Draft long-form literature syntheses

Experience

I tested Deep Research Mode for a public policy topic.

After ~25 minutes, it produced:

- A 28-page structured research draft

- Thematic grouping

- Contradiction mapping

- Suggested research gaps

However, its output must always be manually verified.

Hallucinated claims and citations are still a real risk.

Academic Impact Metrics (Why 2026 AI Tools Analyze Citation Strength)

AI tools now consider citation impact, not only relevance.

Understanding these metrics strengthens your literature review.

H-index

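The h-index measures researcher impact. It is the largest number $h$ such that the researcher has $h$ papers with at least $h$ citations each:

$$
h = \max\{\, k : \text{at least } k \text{ papers have } \ge k \text{ citations each} \,\}
$$

If a scholar has 20 papers cited at least 20 times each, but not 21 papers cited at least 21 times each, their h-index is 20.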
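The definition translates directly into code: sort citation counts from highest to lowest and find the last rank $h$ at which the paper in that position still has at least $h$ citations. A minimal sketch:

```python
def h_index(citations: list[int]) -> int:
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break  # counts only decrease from here, so no larger h is possible
    return h


print(h_index([25] * 20))         # 20
print(h_index([10, 8, 5, 4, 3]))  # 4
print(h_index([]))                # 0
```

In the second example, the fourth-ranked paper has 4 citations, but the fifth has only 3, so the h-index stops at 4.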

Impact Factor (IF)

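A journal-level influence metric. The 2025 Impact Factor, released in 2026, is:

$$
\mathrm{IF}_{2025} = \frac{\text{Citations in 2025 to items published in 2023-2024}}{\text{Number of citable items published in 2023-2024}}
$$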
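As a worked example of the ratio, here is a minimal sketch with made-up counts for a hypothetical journal:

```python
def impact_factor(citations_to_prior_two_years: int, citable_items_prior_two_years: int) -> float:
    """Citations this year to items from the previous two years, per citable item."""
    if citable_items_prior_two_years == 0:
        raise ValueError("journal published no citable items in the window")
    return citations_to_prior_two_years / citable_items_prior_two_years


# Hypothetical journal: 1,200 citations in 2025 to the 400 citable items it published in 2023-2024.
print(impact_factor(1200, 400))  # 3.0
```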

Modern AI tools factor signals like these into how they rank relevant papers.

This improves quality filtering across the literature they surface.

My 2026 AI-Augmented Literature Workflow

Step 1: Use Consensus to check overall scientific agreement

Step 2: Add the core papers to NotebookLM for synthesis mapping

Step 3: Run Elicit for structured evidence extraction

Step 4: Validate citation strength using Scite

Step 5: Use ChatGPT Deep Research for structured drafting

Step 6: Manual critical reading + synthesis writing

AI accelerates.

Human judgment validates.

Common Mistakes in 2026

❌ Blindly trusting AI synthesis

❌ Ignoring contradictory studies

❌ Not checking citation quality

❌ Copy-pasting AI drafts

❌ Skipping manual methodology evaluation

Final Verdict: What Most Articles Miss

Most blog posts still list tools.

They don’t explain:

- How reasoning models changed research

- How synthesis replaced retrieval

- How consensus scoring reduces bias

- How citation metrics affect credibility

- How to build a hybrid AI-human workflow

That’s the real shift in 2026.

AI won’t replace researchers.

But researchers who master AI synthesis tools will outperform those who only use keyword search.