Extract, Transform, Load (ETL) is the backbone of modern data workflows. As organizations scale their data operations, the need for flexible, programmable, and automation-ready ETL solutions becomes critical. Python has emerged as the dominant language in this space due to its simplicity, massive ecosystem, and compatibility with data engineering, analytics, and AI workflows.

For teams in the US and globally, choosing the right Python-based ETL tool is not just about moving data. It is about building reliable pipelines, scaling efficiently, integrating with modern data stacks, and, increasingly, using AI to optimize workflows.

This guide breaks down the best Python-based ETL tools in 2026, including their strengths, limitations, and where AI is reshaping how ETL pipelines are built and managed.

What Makes the Best Python-Based ETL Tool?

Not all ETL tools are equal, especially when Python is involved. The best platforms combine flexibility with production-grade reliability.

Python-native or Python-friendly architecture

The tool should allow direct use of Python for transformations, logic, and orchestration.
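
As a minimal sketch of what "direct use of Python" can look like, the three ETL stages below are written as ordinary functions using only the standard library. The CSV content, the `orders` table, and the function names are hypothetical and chosen purely for illustration.

```python
import csv
import io
import sqlite3

# Hypothetical source data; a real pipeline would read a file, API, or database.
RAW_CSV = """order_id,amount,currency
1,19.99,usd
2,5.00,USD
3,,usd
"""

def extract(text):
    """Parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Drop rows without an amount and normalize types and casing."""
    cleaned = []
    for row in rows:
        if not row["amount"]:
            continue  # skip incomplete records
        cleaned.append(
            (int(row["order_id"]), float(row["amount"]), row["currency"].upper())
        )
    return cleaned

def load(rows, conn):
    """Write transformed rows into a SQLite table and return the row count."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER, amount REAL, currency TEXT)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()
    return conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]

conn = sqlite3.connect(":memory:")
loaded = load(transform(extract(RAW_CSV)), conn)
```

The tools covered in this guide wrap functions like these with scheduling, retries, and monitoring rather than replacing them.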

Scalability and orchestration

From small scripts to enterprise-grade pipelines, the tool should handle growing data volumes and task counts without requiring a rewrite.

Automation and scheduling

Built-in scheduling, retries, and monitoring are essential for production use.
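
To make the retry requirement concrete, here is a rough sketch of retrying with exponential backoff in plain Python. It is not any particular tool's implementation; `run_with_retries`, `flaky_extract`, and the delay values are assumptions made for this example.

```python
import time

def run_with_retries(task, max_attempts=3, base_delay=0.01, sleep=time.sleep):
    """Call task(); after a failure wait base_delay * 2**(attempt - 1) and retry."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as exc:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            delay = base_delay * 2 ** (attempt - 1)
            print(f"attempt {attempt} failed ({exc}); retrying in {delay:.2f}s")
            sleep(delay)

# Hypothetical flaky task: fails twice, then succeeds.
calls = {"count": 0}

def flaky_extract():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source unavailable")
    return ["row-1", "row-2"]

result = run_with_retries(flaky_extract)
```

Orchestrators typically expose the same idea as configuration (a retry count and a delay) so that pipeline code does not need its own retry loops.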

Integration ecosystem

Support for databases, APIs, cloud services, and data warehouses is critical.

AI-assisted pipeline development

Modern ETL tools now integrate AI to help generate pipelines, detect anomalies, optimize queries, and reduce manual work.

The Best Python-Based ETL Tools at a Glance

| Tool | Best For | Key Strength |
|---|---|---|
| Apache Airflow | Workflow orchestration | Scalable DAG-based pipelines |
| Prefect | Modern orchestration | Developer-friendly + flexible |
| Luigi | Simplicity | Lightweight dependency management |
| Dagster | Data engineering teams | Strong data observability |
| Apache Beam | Large-scale processing | Batch + streaming pipelines |
| PySpark | Big data ETL | Distributed processing |
| Kedro | Production-ready pipelines | Clean architecture + reproducibility |

Best Python-Based ETL Tool for Workflow Orchestration

Apache Airflow

Apache Airflow remains one of the most widely adopted ETL orchestration tools in the industry. It uses Directed Acyclic Graphs (DAGs) to define workflows, allowing engineers to manage complex dependencies and schedule pipelines efficiently.
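
The DAG idea itself is easy to illustrate without Airflow. The sketch below uses Python's standard `graphlib` module to derive a valid run order from task dependencies and to reject cyclic graphs. The task names are hypothetical, and this is not Airflow's API, only the underlying concept.

```python
from graphlib import CycleError, TopologicalSorter

# Each task maps to the set of tasks it depends on (hypothetical names).
dependencies = {
    "extract_orders": set(),
    "extract_customers": set(),
    "join": {"extract_orders", "extract_customers"},
    "load_warehouse": {"join"},
}

def execution_order(deps):
    """Return one valid run order; raises CycleError if the graph has a cycle."""
    return list(TopologicalSorter(deps).static_order())

def is_valid_dag(deps):
    """Report whether the dependency graph is acyclic."""
    try:
        execution_order(deps)
        return True
    except CycleError:
        return False

order = execution_order(dependencies)
```

Both extract tasks have no dependencies, so a scheduler is free to run them in parallel; only `join` and `load_warehouse` are forced to wait.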

Airflow’s Python-first approach allows developers to define tasks and workflows programmatically, making it highly flexible for custom ETL logic. It integrates with almost every major data platform, including cloud services, databases, and APIs.

AI plays a growing role in Airflow ecosystems. While Airflow itself is not natively AI-driven, many teams integrate it with AI tools to automate pipeline generation, optimize scheduling, and detect failures proactively. For example, AI-assisted monitoring systems can analyze historical pipeline runs to predict bottlenecks or failures before they happen.

This makes Airflow not just an orchestration tool, but a central control layer for intelligent data workflows when combined with AI.

Best for: Large-scale orchestration and enterprise pipelines

Limitation: Steeper learning curve and setup complexity

Best Python-Based ETL Tool for Modern Development

Prefect

Prefect was built to address many of the limitations of traditional orchestration tools like Airflow. It offers a more modern, Pythonic approach to building and managing workflows, with a strong focus on developer experience.

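Prefect workflows are ordinary Python functions, so loops, conditionals, and try/except blocks can shape a pipeline at run time. Airflow has added dynamic task mapping in recent releases, but Prefect’s model generally makes it easier to build complex ETL pipelines without rigid structures.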
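
A dynamic workflow is one whose shape is only known at run time. The sketch below shows the idea with the standard library alone: the number of tasks depends on what a discovery step returns, and a failure in one task does not stop the others. It does not use Prefect (which declares flows and tasks with its `@flow` and `@task` decorators); every function name and partition value here is hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor

def list_partitions():
    """Hypothetical discovery step: the partition list is only known at run time."""
    return ["2026-01-01", "2026-01-02", "2026-01-03"]

def process_partition(partition):
    """Hypothetical per-partition work; fails on one input to show error handling."""
    if partition.endswith("02"):
        raise ValueError(f"bad data in {partition}")
    return f"processed {partition}"

def run_flow():
    """Fan out one task per discovered partition; collect successes and failures."""
    partitions = list_partitions()
    succeeded, failed = {}, {}
    with ThreadPoolExecutor(max_workers=4) as pool:
        futures = {p: pool.submit(process_partition, p) for p in partitions}
        for partition, future in futures.items():
            try:
                succeeded[partition] = future.result()
            except ValueError as exc:
                failed[partition] = str(exc)
    return succeeded, failed

succeeded, failed = run_flow()
```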

Prefect has started incorporating AI capabilities into its ecosystem, particularly around observability and automation. AI can assist in identifying pipeline inefficiencies, suggesting optimizations, and even generating workflow code from high-level descriptions. This reduces development time and helps teams focus on logic rather than boilerplate code.

Additionally, Prefect’s cloud offering provides advanced monitoring, alerts, and automation, making it a strong choice for teams that want flexibility without sacrificing control.

Best for: Developer-friendly ETL pipelines

Limitation: Smaller community compared to Airflow

Best Lightweight Python ETL Tool

Luigi

Luigi is a lightweight Python library, originally developed at Spotify, for building simple ETL pipelines with dependency management. It focuses on task execution and ensuring that workflows run in the correct order.
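
Luigi's dependency management is built around targets: a task counts as done when its output exists, so reruns skip finished work. The sketch below imitates that idea with a tiny stand-in `Task` class that borrows Luigi's method names (`requires`, `output`, `run`, `complete`). It is not Luigi's implementation, and the `Extract` and `SortValues` tasks are hypothetical.

```python
import os
import tempfile

class Task:
    """Tiny stand-in for a target-based task: done when its output file exists."""

    def __init__(self, workdir):
        self.workdir = workdir

    def requires(self):
        return []

    def output(self):
        raise NotImplementedError

    def run(self):
        raise NotImplementedError

    def complete(self):
        return os.path.exists(self.output())

class Extract(Task):
    def output(self):
        return os.path.join(self.workdir, "raw.txt")

    def run(self):
        with open(self.output(), "w") as f:
            f.write("3\n1\n2\n")

class SortValues(Task):
    def requires(self):
        return [Extract(self.workdir)]

    def output(self):
        return os.path.join(self.workdir, "sorted.txt")

    def run(self):
        with open(self.requires()[0].output()) as f:
            values = sorted(int(line) for line in f)
        with open(self.output(), "w") as f:
            f.write("\n".join(map(str, values)))

def build(task, log):
    """Run upstream tasks first; skip any task whose output already exists."""
    if task.complete():
        return
    for upstream in task.requires():
        build(upstream, log)
    task.run()
    log.append(type(task).__name__)

workdir = tempfile.mkdtemp()
first_run, second_run = [], []
build(SortValues(workdir), first_run)
build(SortValues(workdir), second_run)  # nothing to do: outputs already exist
```

The second `build` call does no work because both output files are already present, which is what makes target-based pipelines cheap to rerun after a partial failure.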

While Luigi lacks the advanced UI and features of modern tools, it remains a solid choice for smaller projects or teams that prefer simplicity over complexity.

AI integration in Luigi environments is typically external. Developers often combine Luigi with machine learning models or AI monitoring tools to enhance pipeline intelligence. For example, anomaly detection models can be used alongside Luigi to validate data quality or detect unusual patterns during ETL processes.
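
A data quality check of this kind does not have to start with a trained model. As a deliberately simple statistical stand-in for an anomaly detection model, the sketch below flags a value that sits far from the historical mean (a z-score test). The threshold, the function name, and the row counts are assumptions for illustration.

```python
from statistics import mean, stdev

def is_anomalous(history, value, threshold=3.0):
    """Flag value if it is more than `threshold` standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical daily row counts from previous successful loads.
row_counts = [10_120, 9_980, 10_050, 10_210, 9_940]

today_ok = is_anomalous(row_counts, 10_100)      # close to the usual volume
today_broken = is_anomalous(row_counts, 4_200)   # less than half the usual volume
```

A pipeline task could call such a check after the extract step and fail fast, or raise an alert, before bad data reaches the warehouse.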

Although it doesn’t offer built-in AI features, Luigi’s flexibility allows teams to integrate AI wherever needed.

Best for: Simple and lightweight workflows

Limitation: Limited scalability and modern features

Best Python ETL Tool for Data Observability

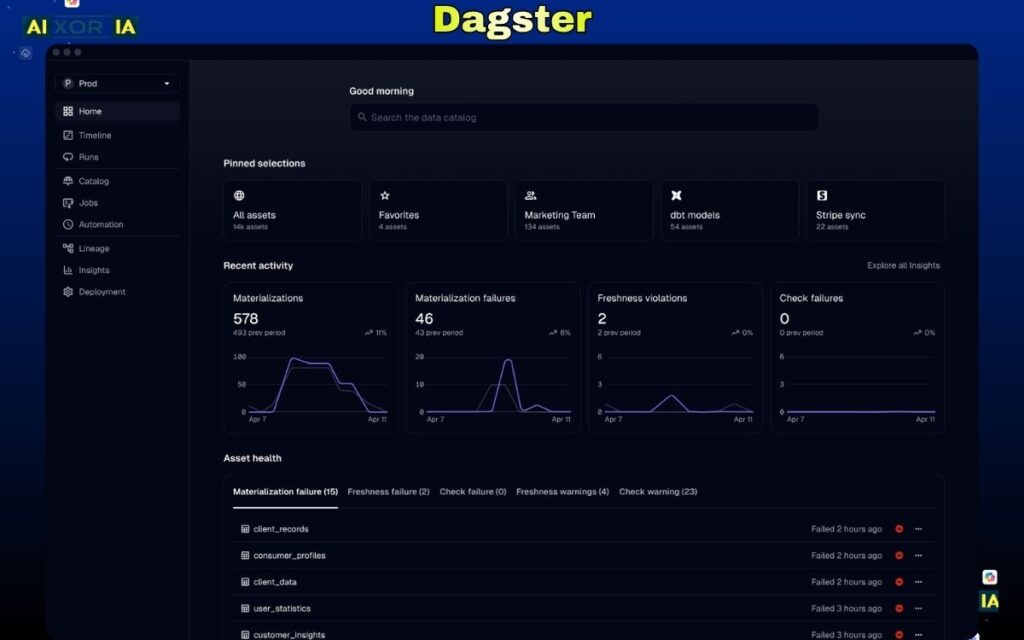

Dagster

Dagster is a newer entrant that focuses heavily on data quality, observability, and developer productivity. It introduces a more structured approach to data pipelines, emphasizing data assets rather than just tasks.
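
To show what "assets rather than tasks" means, the sketch below registers functions as assets and infers each asset's upstream dependencies from its parameter names, which is the convention Dagster's `@asset` decorator also follows. Everything else here (the `ASSETS` registry, this `materialize` helper, the order data) is a hypothetical standard-library imitation, not Dagster's API.

```python
import inspect

ASSETS = {}

def asset(fn):
    """Register a function as an asset; its parameter names are its upstream assets."""
    ASSETS[fn.__name__] = fn
    return fn

@asset
def raw_orders():
    return [{"id": 1, "amount": 20.0}, {"id": 2, "amount": 5.0}]

@asset
def order_total(raw_orders):
    return sum(row["amount"] for row in raw_orders)

@asset
def order_report(raw_orders, order_total):
    return f"{len(raw_orders)} orders, total {order_total:.2f}"

def materialize(name, cache=None, lineage=None):
    """Compute an asset after its upstream assets, recording lineage edges."""
    cache = {} if cache is None else cache
    lineage = [] if lineage is None else lineage
    if name not in cache:
        inputs = {}
        for dep in inspect.signature(ASSETS[name]).parameters:
            lineage.append((dep, name))  # edge: dep feeds name
            inputs[dep], _ = materialize(dep, cache, lineage)
        cache[name] = ASSETS[name](**inputs)
    return cache[name], lineage

report, lineage = materialize("order_report")
```

Because dependencies are declared as data flowing between named assets, the lineage list falls out of the definitions for free instead of being documented separately.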

Dagster’s architecture makes it easier to track data lineage, debug pipelines, and ensure reliability. This is particularly valuable for teams working with complex data systems.

AI can complement Dagster workflows. It can be used to monitor data quality, detect anomalies, and even suggest improvements in pipeline design. Some implementations use AI to automatically flag inconsistencies in datasets or predict failures based on historical runs.

This focus on intelligent data management makes Dagster a strong choice for teams that prioritize reliability and transparency.

Best for: Data observability and reliability

Limitation: Learning curve for new users

Best Python ETL Tool for Large-Scale Processing

Apache Beam

Apache Beam is designed for building both batch and streaming data pipelines at scale. It provides a unified programming model that works across multiple execution engines (called runners), such as Apache Flink, Apache Spark, and Google Cloud Dataflow.

Beam supports Python through its SDK, allowing developers to write scalable ETL pipelines that can handle massive datasets.
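
The appeal of a unified model is that one transform chain serves both bounded and unbounded inputs. The sketch below imitates that with plain Python generators: the same `pipeline` function processes a finite list and an endless event source. It does not use the Beam SDK and leaves out windowing, triggers, and runners; all names and data are hypothetical.

```python
from itertools import islice

def parse(lines):
    """Turn 'user,amount' strings into (user, float) pairs, lazily."""
    for line in lines:
        user, amount = line.split(",")
        yield user, float(amount)

def only_large(pairs, minimum=10.0):
    """Keep pairs whose amount is at least `minimum`."""
    return (pair for pair in pairs if pair[1] >= minimum)

def pipeline(source):
    """The same transform chain, whether or not the source is bounded."""
    return only_large(parse(source))

# Bounded (batch) source: a finite list.
batch_result = list(pipeline(["ann,12.5", "bob,3.0", "cy,40.0"]))

def endless_events():
    """Hypothetical unbounded source: yields events forever."""
    n = 0
    while True:
        yield f"user{n},{n * 5.0}"
        n += 1

# Unbounded (streaming) source: consume only the first three results.
stream_result = list(islice(pipeline(endless_events()), 3))
```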

AI integration with Beam often focuses on real-time data processing. For example, AI models can be embedded within streaming pipelines to analyze data as it flows, enabling use cases like fraud detection, personalization, and predictive analytics.

This makes Beam particularly powerful for organizations that require both ETL and real-time intelligence.

Best for: Large-scale and streaming ETL

Limitation: Complex setup and infrastructure requirements

Best Python ETL Tool for Big Data

PySpark

PySpark is the Python interface for Apache Spark, one of the most widely used big data processing frameworks. It enables distributed data processing across clusters, making it ideal for handling massive datasets.

With PySpark, developers can perform ETL operations at scale, using Python while leveraging Spark’s performance capabilities.
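
The core pattern behind distributed ETL is to split the data into partitions, aggregate each partition independently, and then merge the partial results. The sketch below shows that pattern on one machine, with threads standing in for cluster workers. It is an analogy for how a grouped aggregation is distributed, not PySpark code; the function names and sales records are hypothetical.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor

def split(records, num_partitions):
    """Distribute records round-robin into num_partitions chunks."""
    return [records[i::num_partitions] for i in range(num_partitions)]

def aggregate_partition(partition):
    """Map side: sum amounts per key within one partition."""
    totals = Counter()
    for key, amount in partition:
        totals[key] += amount
    return totals

def total_by_key(records, num_partitions=3):
    """Aggregate each partition in parallel, then merge the partial results."""
    with ThreadPoolExecutor(max_workers=num_partitions) as pool:
        partials = list(pool.map(aggregate_partition, split(records, num_partitions)))
    merged = Counter()
    for partial in partials:
        merged.update(partial)  # reduce side: add partial sums together
    return dict(merged)

# Hypothetical (country, sales) records.
sales = [("us", 10), ("de", 4), ("us", 5), ("fr", 7), ("de", 6), ("us", 1)]
totals = total_by_key(sales)
```

Because each partition is aggregated without looking at the others, the work scales out by adding workers, which is the property Spark exploits across a cluster.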

Machine learning is built into the Spark ecosystem through MLlib. PySpark can be used alongside it and other machine learning libraries to build ETL pipelines that not only process data but also generate insights. For example, data can be transformed and immediately fed into machine learning models for predictions or classifications.

This combination of ETL and AI makes PySpark a critical tool for data-driven organizations.

Best for: Big data ETL and distributed processing

Limitation: Requires cluster setup and management

Best Python ETL Tool for Clean Architecture

Kedro

Kedro is designed for building maintainable and production-ready data pipelines. It enforces a clean architecture, separating data, logic, and configuration.
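
The separation of data, logic, and configuration can be sketched in a few lines. In the example below, parameters live in one place, datasets are looked up by name in a catalog, and the logic is pure functions wired together by name. The `params:` prefix is borrowed from Kedro's convention, but the rest (the in-memory `catalog`, `PIPELINE`, `resolve`, `run`) is a hypothetical illustration, not Kedro's API.

```python
# Configuration: parameters live apart from the code that uses them.
PARAMS = {"min_amount": 10.0}

# Data: a catalog maps dataset names to the data itself (in memory here).
catalog = {"raw_orders": [{"id": 1, "amount": 4.0}, {"id": 2, "amount": 25.0}]}

# Logic: pure functions with no knowledge of storage or configuration sources.
def filter_orders(orders, min_amount):
    return [o for o in orders if o["amount"] >= min_amount]

def summarize(orders):
    return {"count": len(orders), "total": sum(o["amount"] for o in orders)}

# Pipeline: (function, input names, output name); "params:" marks a parameter.
PIPELINE = [
    (filter_orders, ["raw_orders", "params:min_amount"], "large_orders"),
    (summarize, ["large_orders"], "summary"),
]

def resolve(name, catalog, params):
    """Look up an input by name in the parameters or the data catalog."""
    if name.startswith("params:"):
        return params[name[len("params:"):]]
    return catalog[name]

def run(pipeline, catalog, params):
    """Run nodes in listed order, reading inputs from and writing outputs to the catalog."""
    for func, inputs, output in pipeline:
        catalog[output] = func(*(resolve(n, catalog, params) for n in inputs))
    return catalog

run(PIPELINE, catalog, PARAMS)
```

Changing the threshold or swapping the data source means editing `PARAMS` or the catalog only; the transformation functions stay untouched and remain easy to unit test.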

This makes Kedro particularly useful for teams that want reproducibility, scalability, and collaboration in their ETL workflows.

Kedro fits naturally into AI work because it is often used to build machine learning pipelines. Teams can structure data preprocessing, model training, and deployment steps within a single framework. AI can also assist in generating pipeline code and optimizing workflows.

Kedro is especially valuable for organizations that treat ETL as part of a larger data science and AI lifecycle.

Best for: Production-grade pipelines and data science workflows

Limitation: Requires a structured approach and up-front setup

How to Choose the Right Python-Based ETL Tool

The best tool depends on your use case:

- For orchestration → Apache Airflow

- For modern workflows → Prefect

- For simplicity → Luigi

- For observability → Dagster

- For streaming and scale → Apache Beam

- For big data → PySpark

- For structured pipelines → Kedro

Many organizations use multiple tools together, for example Airflow for orchestration and PySpark for processing.

The Role of AI in Python-Based ETL (2026)

AI is transforming ETL from a manual engineering process into an intelligent system.

Modern ETL workflows can now:

- Generate pipelines from natural language

- Detect anomalies in real time

- Optimize performance automatically

- Predict failures before they occur

This shift reduces the need for manual intervention and allows data teams to focus on strategy rather than maintenance.

Final Thoughts

Python-based ETL tools have evolved far beyond simple data pipelines. They now serve as the foundation for data engineering, analytics, and AI-driven decision-making.

The best tool for your organization depends on:

- Scale of data

- Complexity of workflows

- Team expertise

- Need for AI integration

In many cases, the most effective approach is a combination of tools that work together to handle orchestration, processing, and intelligence.